Picking the right real estate data provider can directly impact your success. Accurate, timely, and well-integrated data is essential for deal sourcing, marketing, and compliance. A poor choice can lead to outdated insights, legal risks, and missed opportunities. Here’s what to focus on:

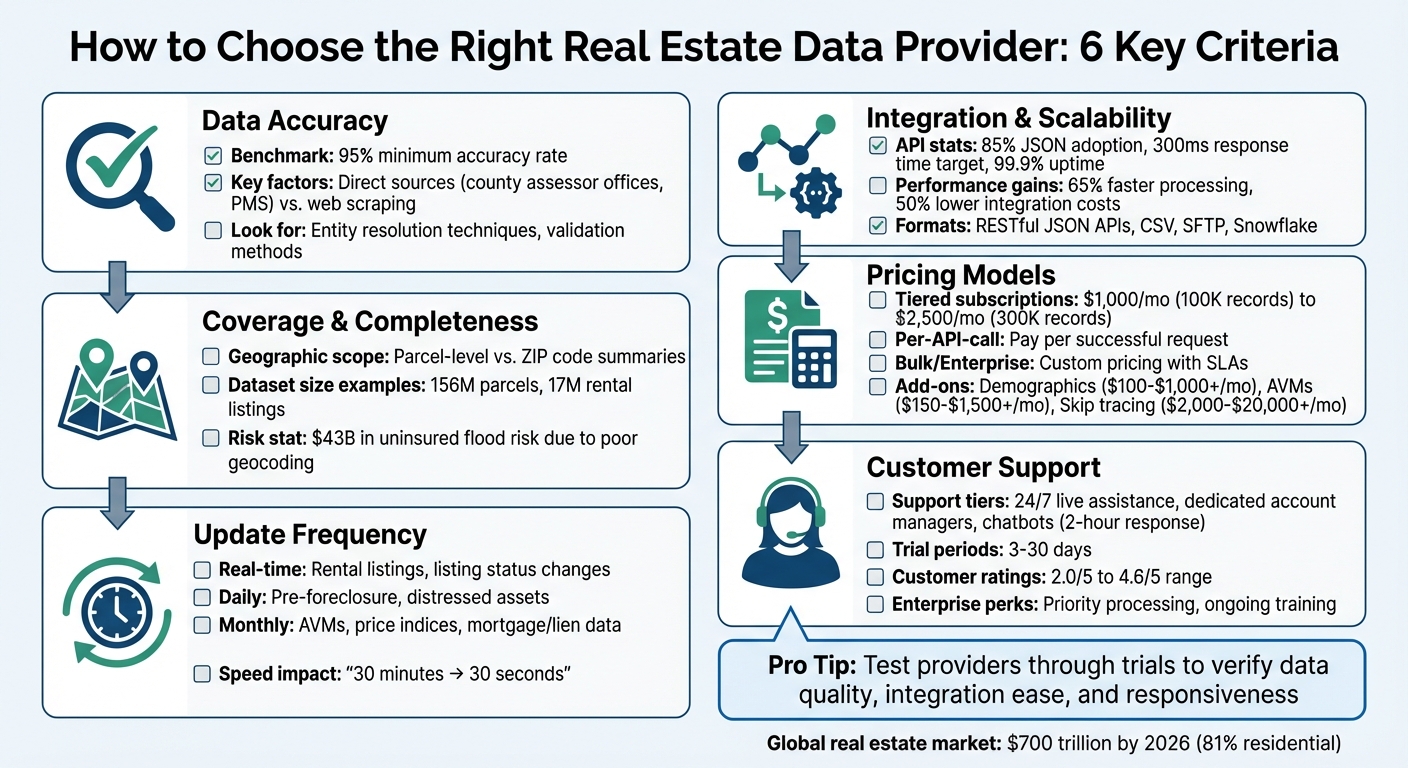

- Accuracy: Look for providers with at least 95% accuracy and reliable data sources.

- Coverage: Ensure their geographic and property type coverage aligns with your market needs.

- Update Frequency: Match update schedules (daily, monthly, real-time) to your business model.

- Integration: Check for real estate API support, scalability, and compatibility with your systems.

- Pricing: Compare subscription, per-call, or bulk licensing models based on your usage.

- Support: Evaluate customer service options and onboarding resources.

Pro Tip: Test providers through trials or demos to verify data quality, integration ease, and responsiveness.

Keep reading to explore these factors in detail and learn how to align a provider’s features with your business goals.

Real Estate Data Provider Selection Criteria Comparison Chart

1. Check Data Accuracy and Source Reliability

The foundation of reliable real estate data lies in its origin and how it’s handled. Top-tier providers maintain an accuracy rate of at least 95%, which should be your benchmark. Anything lower could lead to flawed insights and poor decision-making.

"Bad data is worse than no data because it actively leads to wrong decisions, like underwriting a deal on an outdated valuation." – BatchData

Let’s break down how data accuracy is achieved, starting with sourcing and cleaning processes.

How Providers Source Their Data

The most dependable data comes from direct sources like county assessor offices and Property Management Systems. These sources generally offer cleaner and more reliable information compared to web scraping. Scraping often introduces issues like duplicate entries, formatting errors, and even compliance risks. When assessing providers, ask how they source their data – do they rely on primary sources, or do they depend on third-party aggregators?

Leading providers go a step further by using entity resolution techniques. This process consolidates property and ownership records from various sources, revealing insights such as the actual owner behind an LLC and delivering a complete view of the asset. Make sure to inquire about their validation methods and how they address inconsistencies across data sources.

Once data is sourced, the next step is ensuring it’s standardized and free of errors.

Data Cleaning and Standardization

Data normalization is essential for making information consistent across multiple sources. This includes standardizing elements like street abbreviations and ZIP codes to ensure your database aligns seamlessly across different regions. Without this step, inefficiencies and mismatches are almost inevitable.
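Here is a minimal Python sketch of the address cleanup a provider should already be doing. It can be useful for spot-checking a sample file. The suffix table is a small, assumed subset, and the function names are illustrative rather than any vendor's API.

```python
import re

# Assumed, deliberately tiny subset of street suffixes; USPS Publication 28
# lists the full set of standard abbreviations.
SUFFIXES = {
    "STREET": "ST", "AVENUE": "AVE", "BOULEVARD": "BLVD",
    "ROAD": "RD", "DRIVE": "DR", "LANE": "LN", "COURT": "CT",
}

def normalize_street(street: str) -> str:
    """Uppercase, drop periods/commas, collapse spaces, abbreviate the suffix."""
    words = re.sub(r"[.,]", "", street.upper()).split()
    if words:
        # Only the last word is treated as the suffix, so "Court Road"
        # becomes "COURT RD" rather than "CT RD".
        words[-1] = SUFFIXES.get(words[-1], words[-1])
    return " ".join(words)

def normalize_zip(zip_code: str) -> str:
    """Reduce a ZIP or ZIP+4 to five digits, restoring dropped leading zeros."""
    base = zip_code.strip().split("-")[0]
    digits = re.sub(r"\D", "", base)
    if len(digits) == 9:  # ZIP+4 written without a hyphen
        digits = digits[:5]
    return digits.zfill(5)

print(normalize_street("123 Main Street"))  # 123 MAIN ST
print(normalize_zip("2134"))                # 02134
```

Production pipelines generally use full standards-based address parsing. This sketch only shows why two spellings of the same street must collapse to one key before records can be joined.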

It’s also critical to understand how providers handle errors. Public records can contain inaccuracies, and the best vendors have formal processes to identify and correct these mistakes. Beyond basic corrections, premium providers enhance their datasets with additional insights, such as owner contact information, mortgage histories, liens, and valuation models. This level of enrichment eliminates the need for you to spend extra time cleaning raw data, giving you a more complete and actionable asset profile right from the start.

2. Review Coverage, Completeness, and Historical Data

Once you’ve confirmed the data’s accuracy, the next step is to evaluate whether the provider’s coverage, level of detail, and historical records match your specific market needs.

Geographic Coverage and Property Types

Check if the provider delivers the level of detail you require – whether it’s parcel-level data for individual property underwriting or ZIP code summaries for broader market insights.

The type of properties covered is just as critical. For instance, single-family rentals and build-to-rent communities demand unit-level specifics, while institutional commercial investors need access to detailed transaction histories and ownership data. Top-tier providers often manage vast datasets, such as 156 million parcels sourced from county tax assessors or 17 million unit-level rental listings.

For precise risk assessments, choose providers that use building-based geocoding methods. These are far more accurate than parcel-centroid or street-based approaches. Poor geocoding has already led to an estimated $43 billion in uninsured flood risk across the U.S.

Field Completeness and Historical Records

Take a close look at the provider’s data dictionary to ensure critical fields – like square footage and year built – are complete and consistent. Also, confirm that historical records are maintained. For example, having access to rental listings from 2020 or transaction data from the early 2000s can be invaluable for identifying trends and making forecasts. Some platforms even offer 36-month forward-looking projections based on property-level history, which can strengthen predictive models.
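A quick way to check field completeness on a trial extract is to compute a fill rate per field. The sketch below assumes records arrive as Python dictionaries. The field names (`apn`, `sqft`, `year_built`) are hypothetical stand-ins for whatever the provider's data dictionary uses.

```python
from typing import Dict, Iterable, List, Mapping

def fill_rates(records: List[Mapping[str, object]],
               fields: Iterable[str]) -> Dict[str, float]:
    """Share of records (0.0-1.0) holding a non-empty value for each field."""
    rates: Dict[str, float] = {}
    for field in fields:
        filled = sum(1 for r in records if r.get(field) not in (None, ""))
        rates[field] = filled / len(records) if records else 0.0
    return rates

# Hypothetical sample rows; use the field names from the provider's data dictionary.
sample = [
    {"apn": "001", "sqft": 1800, "year_built": 1995},
    {"apn": "002", "sqft": None, "year_built": 2004},
    {"apn": "003", "sqft": 2100, "year_built": ""},
    {"apn": "004", "sqft": 1500},
]
print(fill_rates(sample, ["sqft", "year_built"]))
# {'sqft': 0.75, 'year_built': 0.5}
```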

Another key feature is robust entity resolution, which links properties to their actual owners, even in cases involving complex structures like shell LLCs or trusts. This is essential for assessing portfolio exposure and pinpointing decision-makers behind intricate ownership arrangements.
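Real entity resolution combines many signals. A simplified heuristic still shows the idea: owner names that differ but share one mailing address may belong to the same investor. This Python sketch groups hypothetical parcel records that way. It illustrates the concept only and is not how any particular provider resolves entities.

```python
import re
from collections import defaultdict
from typing import Dict, List, Set

def mailing_key(address: str) -> str:
    """Crude normalization: uppercase, strip punctuation, collapse spaces."""
    return " ".join(re.sub(r"[^\w\s]", "", address.upper()).split())

def shared_mailing_addresses(parcels: List[dict]) -> Dict[str, List[str]]:
    """Map each mailing address used by 2+ distinct owner names to those names."""
    owners: Dict[str, Set[str]] = defaultdict(set)
    for parcel in parcels:
        owners[mailing_key(parcel["mailing_address"])].add(parcel["owner_name"])
    return {addr: sorted(names) for addr, names in owners.items() if len(names) > 1}

parcels = [  # hypothetical records
    {"apn": "A1", "owner_name": "Oak Holdings LLC",
     "mailing_address": "PO Box 100, Austin TX"},
    {"apn": "A2", "owner_name": "Elm Street Partners LLC",
     "mailing_address": "P.O. Box 100 Austin, TX"},
    {"apn": "A3", "owner_name": "Jane Doe",
     "mailing_address": "12 Pine Rd, Dallas TX"},
]
print(shared_mailing_addresses(parcels))
# {'PO BOX 100 AUSTIN TX': ['Elm Street Partners LLC', 'Oak Holdings LLC']}
```

Shared addresses such as registered-agent offices can host many unrelated LLCs. That makes this heuristic prone to false positives, so ask providers which additional signals they use.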

3. Verify Update Frequency and Data Freshness

Having timely data at your fingertips can be the difference between staying ahead of competitors and losing out on opportunities. In real estate, timing is everything: a profitable deal can slip through your fingers if you’re not quick to act on new information. As BatchData puts it, "In today’s competitive real estate landscape, speed, precision, and relevance can make or break an investment strategy."

The frequency at which you need updated data depends heavily on your business model. For example, rental listings demand real-time updates that sync instantly with Property Management Systems via a real estate API, ensuring occupancy changes are reflected immediately. On the other hand, workflows focused on distressed assets typically require daily updates, with some providers offering visibility into foreclosure and pre-foreclosure filings within 24 hours in active markets. Meanwhile, tools like Automated Valuation Models (AVMs) and residential price indices are typically refreshed monthly, which works well for monitoring portfolios and analyzing broader market trends.

Access to real-time data can significantly impact productivity and outcomes. Chris Finck, Director of Product Management, highlighted this advantage, stating, "What used to take 30 minutes now takes 30 seconds. BatchData makes our platform superhuman." The ability to move from research to execution in mere minutes can help you secure deals that others might miss. To maximize this advantage, it’s crucial to identify which data fields require immediate and frequent updates.

Fields That Need Frequent Updates

Certain data fields demand more frequent updates to stay actionable and relevant:

- Pre-foreclosure data: Includes Notice of Default filings, auction dates, and trustee contact details. These need daily updates to remain useful.

- Listing data: Elements like price changes, Days on Market (DOM), and status changes (e.g., Active to Pending) should be updated in real time for precise market insights.

- Permit information: Details such as new job values, issue dates, and contractor information generally update weekly or bi-weekly.

- Mortgage and lien data: Fields like open lien counts and unpaid balances typically require monthly updates, unless you’re targeting distressed assets.
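Some sample pulls include a last-updated timestamp for each record type. In that case, you can check those timestamps against the cadences above. In this sketch the category names and maximum ages are assumptions (one hour stands in for "real time"), so adjust them to your own workflow.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List

# Assumed maximum acceptable ages, loosely based on the cadences listed above.
# "Real time" has no fixed number, so one hour is only a placeholder.
MAX_AGE = {
    "pre_foreclosure": timedelta(days=1),
    "listing": timedelta(hours=1),
    "permit": timedelta(days=14),
    "mortgage_lien": timedelta(days=31),
}

def stale_categories(last_updated: Dict[str, datetime], now: datetime) -> List[str]:
    """Return the categories whose latest update is older than allowed."""
    return sorted(
        category
        for category, updated_at in last_updated.items()
        if now - updated_at > MAX_AGE[category]
    )

now = datetime(2025, 1, 15, 12, 0, tzinfo=timezone.utc)
observed = {  # hypothetical "last updated" timestamps from a sample pull
    "pre_foreclosure": now - timedelta(hours=30),
    "listing": now - timedelta(minutes=20),
    "permit": now - timedelta(days=10),
    "mortgage_lien": now - timedelta(days=45),
}
print(stale_categories(observed, now))  # ['mortgage_lien', 'pre_foreclosure']
```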

When evaluating a data provider, always confirm their update schedules for these critical fields. Additionally, compare sample data against public records to ensure accuracy. For areas with slower county reporting, check whether the provider uses historical listing data to fill in missing details – such as bedroom or bathroom counts. This multi-sourced approach can help avoid gaps that might compromise your analysis.
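Comparing a hand-pulled sample against county records comes down to a simple agreement rate, which you can check against the 95% benchmark from Section 1. The Python sketch below uses hypothetical parcel IDs and field names. It only counts fields that the public record actually contains.

```python
from typing import Dict, List

BENCHMARK = 0.95  # the accuracy floor suggested earlier in this guide

def agreement_rate(provider: Dict[str, dict], county: Dict[str, dict],
                   fields: List[str]) -> float:
    """Share of (parcel, field) pairs where the provider matches the county record."""
    checked = agreed = 0
    for parcel_id, county_row in county.items():
        provider_row = provider.get(parcel_id)
        if provider_row is None:
            continue  # an unmatched parcel is a coverage gap, not an accuracy error
        for field in fields:
            if county_row.get(field) is None:
                continue  # nothing in the public record to verify against
            checked += 1
            if provider_row.get(field) == county_row[field]:
                agreed += 1
    return agreed / checked if checked else 0.0

# Hypothetical spot-check of two parcels pulled by hand from the county site.
county = {
    "001": {"sqft": 1800, "year_built": 1995, "bedrooms": 3},
    "002": {"sqft": 2100, "year_built": 2004, "bedrooms": 4},
}
provider = {
    "001": {"sqft": 1800, "year_built": 1995, "bedrooms": 3},
    "002": {"sqft": 2000, "year_built": 2004, "bedrooms": 4},
}
rate = agreement_rate(provider, county, ["sqft", "year_built", "bedrooms"])
print(f"{rate:.1%}", "meets benchmark" if rate >= BENCHMARK else "below benchmark")
# 83.3% below benchmark
```

Exact equality is deliberately strict. In practice you may want to normalize units and formats first, or allow small tolerances on fields like square footage.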

4. Test Integration Capabilities and Scalability

Integration and scalability are just as important as data accuracy and coverage when it comes to ensuring smooth operations. Even the most accurate data won’t help if it can’t integrate seamlessly with your systems. Before committing to a provider, make sure their solutions work with your existing tools and are prepared to handle your future growth. According to industry insights, businesses using scalable APIs experience 65% faster data processing and 50% lower integration costs compared to manual exports (Deloitte Real Estate Tech Report, 2024).

API Support and Data Delivery Formats

Your provider should offer RESTful JSON APIs for real-time integration with platforms like CRM systems, analytics tools, or custom applications. JSON dominates in proptech, with 85% adoption, compared to just 10% for XML formats (PropTech API Benchmark, 2025). This preference for JSON can significantly speed up development, with some providers reporting implementations up to 40% faster than with outdated formats.

It’s also critical to have flexible delivery options, like CSV for bulk imports or SFTP for large-scale transfers, to avoid API rate limits. During your evaluation, request sandbox API keys for testing with tools such as Postman. Test response times to ensure they stay under 300 milliseconds, and confirm that the data formats meet your technical needs – like imperial measurements (square feet), pricing in USD, and MM/DD/YYYY date formats.
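You can script a sandbox test of response times in a few lines. The sketch below times any zero-argument callable and reports the 95th-percentile latency. It also includes a check for MM/DD/YYYY dates. The commented-out sandbox URL and `Authorization` header are placeholders, not a real provider's endpoint.

```python
import statistics
import time
from datetime import datetime
from typing import Callable

def p95_latency_ms(call: Callable[[], object], runs: int = 50) -> float:
    """Time `call` repeatedly and return its 95th-percentile latency in ms."""
    if runs < 2:
        raise ValueError("need at least two runs to compute a percentile")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    # n=20 yields 19 cut points; the last is the 95th percentile. "inclusive"
    # keeps the estimate within the range of observed samples.
    return statistics.quantiles(samples, n=20, method="inclusive")[-1]

def is_mm_dd_yyyy(value: str) -> bool:
    """True if a date string parses as MM/DD/YYYY."""
    try:
        datetime.strptime(value, "%m/%d/%Y")
        return True
    except ValueError:
        return False

# Wiring it to a sandbox (hypothetical URL and auth header; use the provider's own):
# import urllib.request
# req = urllib.request.Request(
#     "https://sandbox.example.com/v1/property?apn=001",
#     headers={"Authorization": "Bearer YOUR_SANDBOX_KEY"},
# )
# p95 = p95_latency_ms(lambda: urllib.request.urlopen(req, timeout=5).read())
# print("under 300 ms" if p95 < 300 else "too slow")
```

The sketch measures a percentile rather than an average because a few slow responses can stall a live application even when the mean looks fine.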

Scalability for Growing Businesses

Scalability is about ensuring your provider can grow alongside your business. While your initial data needs might be modest – say, 1,000 monthly queries – those needs can skyrocket as you expand into new markets. Scalable solutions maintain high performance even as demand increases, offering 99.9% API uptime and the ability to handle surging data volumes without interruptions.

To evaluate scalability, simulate heavy loads (e.g., five times your current volume) using tools like Apache JMeter. Examine the provider’s service level agreements (SLAs) for guaranteed throughput, and look for case studies showing how they’ve supported businesses from small startups to enterprise-level portfolios. Ask targeted questions, such as whether they can process 500 million records quarterly or if they offer additional features like AVMs, flood risk data, or distressed asset insights without requiring a platform migration. Providers that offer tiered pricing and dedicated API specialists can often cut setup times by up to 50%.
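You can run a rough concurrency smoke test from Python's standard library before reaching for JMeter. This sketch is a lightweight stand-in, not a substitute for a full load-testing tool. The request function, the 40-request peak, and the thread count are all assumptions.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict

def load_test(call: Callable[[], bool], total_requests: int,
              concurrency: int) -> Dict[str, float]:
    """Fire `total_requests` calls across `concurrency` threads.

    `call` should return True on success (for example, an HTTP 200) and
    False otherwise; any exception is counted as a failure.
    """
    if total_requests < 1:
        raise ValueError("total_requests must be at least 1")

    def safe_call(_: int) -> bool:
        try:
            return bool(call())
        except Exception:
            return False  # timeouts, connection resets, etc.

    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(safe_call, range(total_requests)))
    elapsed = time.perf_counter() - start
    return {
        "success_rate": sum(results) / total_requests,
        "requests_per_sec": total_requests / elapsed if elapsed > 0 else float("inf"),
    }

# Hypothetical: your busiest minute today sees 40 requests; replay 5x that as a burst.
current_peak = 40
report = load_test(lambda: True, total_requests=current_peak * 5, concurrency=10)
print(report["success_rate"])  # 1.0 with this stand-in call
```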

5. Compare Pricing Models and Customer Support

Finding the right pricing model depends on your data needs and budget. Providers typically offer options like tiered subscriptions, per-API-call pricing, or bulk licensing. Tiered subscriptions are great for businesses with consistent monthly data usage – examples include $1,000/month for 100K records or $2,500/month for 300K records. If your data needs are unpredictable or low-volume, a per-API-call model might be better since you only pay for successful requests. For businesses managing massive datasets – like those used in training machine learning models or large-scale market analysis – bulk or enterprise licensing is the way to go. These often come with custom pricing and SLA guarantees.

Some providers also offer modular add-ons for specialized datasets. For example, demographics data might cost $100–$1,000+ per month, property valuation AVMs could range from $150 to $1,500+ per month, and contact enrichment services might set you back $500–$10,000+ per month. Skip tracing services, on the other hand, often use pay-per-match pricing or tiered plans, which can range from $2,000 to over $20,000 per month. To avoid overspending, match your plan to your actual usage. For instance, if you process 80,000 records monthly, a 100,000-record plan is the better fit. A plan capped at 20,000 records would leave most of your volume uncovered, while a 300,000-record plan would have you paying for capacity you never use.
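The plan-sizing logic above is simple enough to script. This sketch uses the two example tiers quoted earlier in this section. The tier list is illustrative, so swap in the actual quotes you receive.

```python
from typing import List, Optional, Tuple

# (monthly record cap, monthly price in USD), using the example tiers quoted
# above; real plans, overage fees, and discounts vary by provider.
TIERS: List[Tuple[int, int]] = [(100_000, 1_000), (300_000, 2_500)]

def cheapest_tier(monthly_records: int) -> Optional[Tuple[int, int]]:
    """Lowest-priced tier whose cap covers the expected monthly volume."""
    eligible = [tier for tier in TIERS if tier[0] >= monthly_records]
    return min(eligible, key=lambda tier: tier[1]) if eligible else None

cap, price = cheapest_tier(80_000)
print(cap, price, f"${price / 80_000:.4f} per record used")
# 100000 1000 $0.0125 per record used
```

A `None` result means no listed tier covers your volume. At that point it makes sense to ask about bulk or enterprise licensing.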

Common Pricing Structures

The delivery method you choose can also impact costs. Here’s how different pricing structures align with common workflows:

- API-based pricing: Ideal for real-time enrichment and live applications, billed per-call or through tiered plans.

- S3 bulk file delivery: Best for training machine learning models or populating data lakes, with charges based on file size or subscriptions.

- Snowflake Share: Perfect for BI analytics and enterprise querying, combining subscription costs with compute usage.

- Flat file (FTP) delivery: Useful for legacy systems or offline analysis, often priced as a subscription or one-time fee.

If you need real-time updates, APIs are a solid choice to avoid high storage costs. For deep, market-wide analysis, bulk delivery can help manage compute expenses more effectively.

Customer Support and Expertise

The quality of customer support can significantly influence your experience, especially during setup and troubleshooting. Higher-tier plans often include dedicated account managers and priority processing, which are invaluable for large-scale operations. Enterprise customers usually get access to dedicated customer success representatives who provide ongoing training and handle technical issues, while lower-tier plans may rely more heavily on documentation or basic support channels.

Before committing, take advantage of free trials (usually 3 to 30 days) to evaluate both the data quality and the responsiveness of the support team. Platforms like Trustpilot can offer insights into customer service quality, with ratings for top providers ranging from 2.0/5 to 4.6/5. Ensure the provider’s support team can resolve technical issues quickly – ideally within hours, not days – to avoid costly delays.

Look for providers that offer multiple support options, such as 24/7 live assistance, chatbots with guaranteed response times (e.g., within 2 hours), and comprehensive support portals. Structured onboarding, e-learning resources, webinars, and detailed technical documentation can also shorten your learning curve and help you achieve value faster. Make sure the level of support aligns with your business’s technical complexity and operational scale.

Next, consider how these pricing models and support features align with your specific business needs.

6. Define Your Business Requirements

Start by identifying the top 3–5 use cases your business needs to address. For instance, do you need fast loan underwriting, tools for distressed asset identification, or automated alerts for tax or lien issues? Each of these scenarios requires specific types of data and delivery methods to be effective.

To organize and prioritize these needs, consider using a framework like MoSCoW. For example, a lender focused on underwriting and assessing risk might prioritize access to AVMs (automated valuation models), lien history, and mortgage records delivered via a real-time API. On the other hand, an investor interested in finding motivated sellers might need tools like pre-foreclosure flags, tax delinquency data, and skip tracing capabilities. Defining these use cases upfront ensures that your choice of provider aligns with your business goals.
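A MoSCoW list can double as a scoring sheet when you compare vendors. In this Python sketch, must-haves act as a pass/fail gate, and the should/could weights are assumptions. The feature names are hypothetical labels for the lender example above.

```python
from typing import Optional, Set

# Hypothetical MoSCoW list for the underwriting lender described above.
REQUIREMENTS = {
    "avm": "must",
    "lien_history": "must",
    "mortgage_records": "must",
    "real_time_api": "must",
    "flood_risk": "should",
    "bulk_delivery": "could",
    "skip_tracing": "wont",
}
WEIGHTS = {"should": 2, "could": 1}  # assumed weights; "must" is a pass/fail gate

def score_provider(features: Set[str]) -> Optional[int]:
    """None if any must-have is missing; otherwise the weighted score."""
    for feature, priority in REQUIREMENTS.items():
        if priority == "must" and feature not in features:
            return None
    return sum(WEIGHTS.get(priority, 0)
               for feature, priority in REQUIREMENTS.items() if feature in features)

core = {"avm", "lien_history", "mortgage_records", "real_time_api"}
print(score_provider(core | {"flood_risk"}))    # 2
print(score_provider({"avm", "skip_tracing"}))  # None
```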

Match Provider Features to Your Use Cases

Different business objectives require tailored data features and delivery options. Here are a few examples:

- Targeted marketing and lead generation: Look for providers offering highly specific filters, such as homes with over 50% equity or filtering by age ranges. This can help reduce acquisition costs. Additionally, ensure they provide DNC-scrubbed contact information and skip tracing tools that integrate easily with your CRM via API or CSV export.

- Commercial real estate prospecting: If you’re in this space, you’ll need tools for entity resolution to identify property owners behind shell companies and map out complete ownership portfolios. Institutional researchers may also require data like unit-level rent trends, absorption rates, and supply pipeline information, ideally delivered in bulk to platforms like Snowflake or Amazon S3.

- Property management: For operational efficiency, focus on features like tenant screening, maintenance history tracking, and automated market reports. These tools can help reduce operational costs by up to 35%.
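A filter like "over 50% equity" comes down to a simple calculation on enriched records: estimated value minus open loan balances, divided by estimated value. The sketch below uses hypothetical field names (`avm_value`, `open_lien_balance`). It treats the threshold as strictly greater than 50%.

```python
from typing import Dict, List

def equity_share(estimated_value: float, open_loan_balance: float) -> float:
    """Owner equity as a fraction of estimated value (0.0 if value is unknown)."""
    if estimated_value <= 0:
        return 0.0
    return (estimated_value - open_loan_balance) / estimated_value

def high_equity(properties: List[Dict], min_share: float = 0.5) -> List[Dict]:
    """Keep properties whose equity share is strictly above `min_share`."""
    return [p for p in properties
            if equity_share(p["avm_value"], p["open_lien_balance"]) > min_share]

properties = [  # hypothetical enriched records
    {"id": "A", "avm_value": 400_000, "open_lien_balance": 150_000},  # 62.5%
    {"id": "B", "avm_value": 300_000, "open_lien_balance": 200_000},  # ~33%
    {"id": "C", "avm_value": 500_000, "open_lien_balance": 250_000},  # exactly 50%
]
print([p["id"] for p in high_equity(properties)])  # ['A']
```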

With the global real estate market projected to be valued at nearly $700 trillion by 2026, and residential properties making up 81% of that value, aligning your provider’s capabilities with your specific workflows is crucial. This alignment can mean the difference between gaining a competitive edge and wasting resources on features that don’t meet your needs.

Before committing to a provider, request a trial or demo. Use this opportunity to evaluate metrics like fill rates (the share of records that actually contain a value for each field you need) and match rates (the share of the properties or contacts you submit that the provider can link to a record in its database). Once your requirements are clearly defined and matched to the right features, you’ll be ready to move forward with a focused and informed provider selection process.
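You can compute the match rate yourself from a trial run instead of relying on the vendor's headline figure. The sketch assumes you submit records keyed by your own IDs. It also assumes the provider returns `None` (or nothing) for records it cannot match. The ID format and response shape are hypothetical.

```python
from typing import Dict, List, Optional

def match_rate(submitted_ids: List[str], results: Dict[str, Optional[dict]]) -> float:
    """Share of submitted records for which the provider returned a match."""
    if not submitted_ids:
        return 0.0
    matched = sum(1 for rid in submitted_ids if results.get(rid) is not None)
    return matched / len(submitted_ids)

# Hypothetical trial run: four addresses submitted, two came back matched.
submitted = ["addr-1", "addr-2", "addr-3", "addr-4"]
returned = {"addr-1": {"apn": "001"}, "addr-2": None, "addr-3": {"apn": "007"}}
print(f"{match_rate(submitted, returned):.0%}")  # 50%
```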

Conclusion

Choosing the right real estate data provider takes a thoughtful approach, focusing on accuracy, integration, pricing, and features that fit your business needs.

Accuracy is a top priority – poor data quality can lead to bad decisions and missed opportunities. But accuracy alone isn’t enough. The data must integrate smoothly into your systems to transform operations. Whether you prefer API access or bulk delivery, the delivery method should align with your technical setup and help turn data into actionable insights.

When it comes to pricing, options range from per-API-call models to bulk enterprise licenses. Don’t just look at upfront costs – factor in hidden expenses like manual data cleaning, which can inflate your budget over time. The pricing model should match how you plan to use the data.

Lastly, the provider’s features must align with your goals. With the real estate market projected to hit nearly $700 trillion by 2026, and residential properties making up 81% of that value, having the right data partner can mean the difference between staying ahead and falling behind. Request trials to test data quality, relevance, and integration. Look for providers that offer consultative support to help you turn raw data into actionable strategies that drive revenue. For those looking to automate these workflows, consider integrating APIs with low-code tools to streamline lead generation.

FAQs

What should I ask a provider to prove their data is really accurate?

To ensure the data you’re using is accurate, start by asking the provider about their validation methods. Reliable practices often include:

- Automated rule-based checks to catch errors quickly.

- Manual inspections for a closer review of the data.

- Cross-referencing with trusted sources like county records or MLS databases.

You can also ask if they use statistical sampling or have tools designed to flag discrepancies efficiently. These measures help confirm the data meets your business standards and can be trusted for your specific needs.
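If you want to audit a trial file yourself, you can approximate both rule-based checks and statistical sampling in a few lines. The plausibility thresholds and field names below are assumptions. The seeded sample gives you a reproducible set of records to verify by hand against county records.

```python
import random
from datetime import date
from typing import Dict, List

def rule_violations(record: Dict) -> List[str]:
    """Apply simple plausibility rules; thresholds here are assumptions."""
    problems = []
    year = record.get("year_built")
    if year is not None and not 1700 <= year <= date.today().year + 1:
        problems.append("year_built out of range")
    sqft = record.get("sqft")
    if sqft is not None and sqft <= 0:
        problems.append("sqft must be positive")
    beds = record.get("bedrooms")
    if beds is not None and not 0 <= beds <= 50:
        problems.append("bedrooms implausible")
    return problems

def audit_sample(records: List[Dict], k: int, seed: int = 0) -> List[Dict]:
    """Reproducible random sample of records to inspect by hand."""
    rng = random.Random(seed)
    return rng.sample(records, min(k, len(records)))

print(rule_violations({"year_built": 3020, "sqft": 0, "bedrooms": 3}))
# ['year_built out of range', 'sqft must be positive']
```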

How do I know if a provider’s coverage and history fit my market and use case?

To determine if a provider’s coverage and history align with your market and use case, take a close look at a few key factors. Start by examining their geographic and property type coverage – do they cater to your specific region and the property segments you’re focused on? Next, consider how often they update their data and the reliability of their sources. Top-notch data should pull from a mix of public records, brokerage insights, and proprietary databases that are relevant to your area and property needs.

It’s also critical to evaluate their track record. Check for accuracy, timeliness, and consistency in the data they’ve provided in the past. This will give you confidence that they can meet the demands of your operations without any hiccups.

What’s the best way to test API integration, speed, and scalability before buying?

To ensure an API fits your business needs, start by using trial access or sandbox environments. These tools let you simulate real-world scenarios and test how well the API integrates with your systems. Pay close attention to response times, request limits, and how it handles bulk processing.

It’s also a good idea to perform load testing to see how the API performs under heavy demand. This can help you gauge its scalability and reliability. Don’t forget to connect with the provider’s support team for any technical questions and to validate its functionality. Finally, review their Service Level Agreements (SLAs) to confirm the API aligns with your expectations and requirements.