Scalable real estate APIs are essential for handling vast property datasets efficiently, enabling real-time access to market trends, ownership details, and more. The key to building these APIs lies in effective scaling strategies, robust architecture, and performance optimization. Here’s what you need to know:

- Horizontal Scaling: Expanding capacity by adding more servers, often using microservices for targeted scaling.

- Load Balancing: Distributing traffic across servers to prevent overloads, paired with auto-scaling for demand surges.

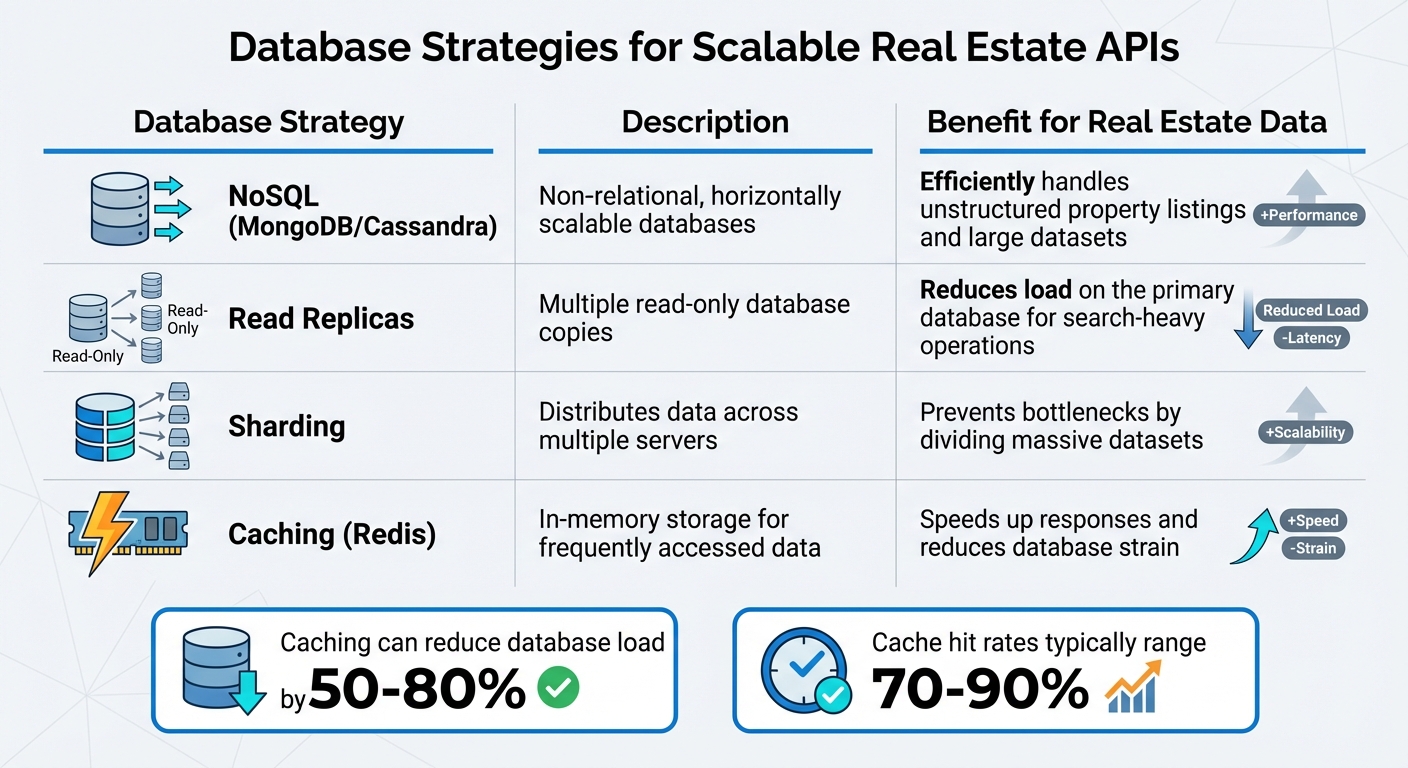

- Database Optimization: Using NoSQL databases, read replicas, sharding, and caching to handle large datasets and reduce latency.

- Performance Boosts: Techniques like caching, payload compression, and rate limiting improve speed and reliability.

- Security Measures: OAuth 2.0, TLS encryption, and compliance with RESO standards ensure data safety and compatibility.

- Monitoring and Testing: Real-time tracking, distributed tracing, and automated testing ensure reliability under varying conditions.

Platforms like BatchData showcase how scalable APIs can manage millions of property records with low latency, offering tools for bulk data delivery, real-time property insights, and tailored professional services. By focusing on efficient design and strong security, real estate APIs can handle high traffic and deliver actionable data seamlessly.


Core Principles of API Scalability


Building scalable real estate APIs hinges on three main ideas: horizontal scaling, load balancing, and well-structured databases. These principles are what keep your system running smoothly, even during sudden traffic spikes – like when a trending property listing attracts massive attention. As Zuplo puts it:

When traffic to your API doubles overnight, will your system celebrate or collapse?

Horizontal Scaling with Microservices

Horizontal scaling involves expanding your system by adding more servers instead of upgrading a single machine. This setup allows for nearly limitless growth. The secret lies in using a stateless architecture, where every API request carries all the necessary data, enabling any server in the cluster to handle it without hiccups.

Microservices take this a step further. Rather than scaling an entire application, you can focus on individual components based on demand. For instance, during peak house-hunting months, you could increase resources for your property search service while leaving other services, like user management, unchanged. This targeted approach avoids system-wide failures during high-demand periods.

Take Xome’s Property Insights API as an example. After moving to Microsoft Azure and using a containerized setup through Azure API Management, their system now manages up to 1 million daily requests without breaking a sweat. By allocating resources at the component level, heavy processes like image processing or sales analysis don’t hog resources needed for other critical functions.

From here, load balancing and auto-scaling step in to ensure these extra resources work seamlessly together.

Load Balancing and Auto-Scaling

Load balancing spreads incoming requests across multiple servers, preventing any one machine from getting overwhelmed. When paired with auto-scaling – which adjusts computing power based on actual demand – these tools help maintain smooth performance, whether you’re handling a steady flow of 100,000 requests an hour or dealing with an unexpected traffic surge.

There are several load-balancing methods to consider:

- Round-robin: Assigns requests sequentially to servers with identical setups.

- Least connections: Routes each new request to the server currently handling the fewest active connections.

- Geo-based routing: Connects users to the server closest to their physical location, cutting down latency.
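
As a rough illustration of the first two methods, here is a minimal Python sketch. The class names and server labels are hypothetical, and a production load balancer would also handle health checks, weights, and concurrency.

```python
import itertools


class RoundRobinBalancer:
    """Hands out servers in a fixed, rotating order."""

    def __init__(self, servers):
        self._cycle = itertools.cycle(servers)

    def pick(self):
        return next(self._cycle)


class LeastConnectionsBalancer:
    """Picks the server with the fewest in-flight requests."""

    def __init__(self, servers):
        # server name -> number of requests currently being handled
        self.active = {server: 0 for server in servers}

    def pick(self):
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call when a request finishes so the count stays accurate.
        self.active[server] -= 1
```

Round-robin needs no bookkeeping, which is why it suits identical servers with similar request costs. Least connections tracks state but adapts better when some requests (such as large searches) take much longer than others.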

Since cloud resources are often over-provisioned by 30%–45%, effective load balancing is not just about performance – it’s also a smart cost-control measure. For predictable traffic levels, using reserved instances can slice costs by as much as 70% compared to on-demand pricing. Regular health checks on load balancers also ensure traffic only goes to servers that are fully operational, allowing faulty instances to recover or be replaced without impacting users.

Database Design for Real Estate Data

Once your computing resources are scaling smoothly, the next challenge lies in optimizing your database design. The database is often the first point of failure when traffic scales up. NoSQL databases like MongoDB or Cassandra are well-suited for real estate APIs because they handle unstructured data and large datasets with ease, thanks to their horizontal scalability.

For read-heavy tasks – such as millions of property searches – read replicas can take the pressure off your primary database. By directing all "SELECT" queries to these replicas, you reserve the main database for critical write operations like adding new listings or updating property statuses. Sharding, which divides data by keys like ZIP codes or regions, further ensures that no single database becomes a bottleneck.
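
The routing logic behind both ideas can be sketched in a few lines of Python. The shard names and the two helper functions are assumptions made for illustration, not part of any specific database driver.

```python
import hashlib

# Hypothetical shard names; a real deployment would map these to connections.
SHARDS = ["shard-0", "shard-1", "shard-2", "shard-3"]


def shard_for_zip(zip_code):
    """Map a ZIP code to a shard using a stable hash."""
    digest = hashlib.sha256(zip_code.encode("utf-8")).digest()
    return SHARDS[int.from_bytes(digest[:8], "big") % len(SHARDS)]


def target_for_query(sql):
    """Send SELECT statements to a read replica, everything else to the primary."""
    is_read = sql.lstrip().upper().startswith("SELECT")
    return "replica" if is_read else "primary"
```

Simple modulo hashing moves most keys when the number of shards changes, so systems that expect to add shards often use consistent hashing or a lookup table instead. Replicas can also lag slightly behind the primary, so reads that must see a just-written value may still need to go to the primary.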

Caching layers, using tools like Redis or Memcached, can make a huge difference. By storing frequently accessed property details – like school ratings or tax histories – in memory, you can deliver responses in milliseconds while reducing database load by 50%–80%. Caching hit rates often range between 70% and 90%, making it an indispensable strategy for improving performance.

| Database Strategy | Description | Benefit for Real Estate Data |
|---|---|---|
| NoSQL (MongoDB/Cassandra) | Non-relational, horizontally scalable databases | Efficiently handles unstructured property listings and large datasets |
| Read Replicas | Multiple read-only database copies | Reduces load on the primary database for search-heavy operations |
| Sharding | Distributes data across multiple servers | Prevents bottlenecks by dividing massive datasets |
| Caching (Redis) | In-memory storage for frequently accessed data | Speeds up responses and reduces database strain |

Architecture Best Practices for Scalable APIs

When it comes to building scalable real estate APIs, the architecture you choose plays a huge role in managing growth, adapting to demand, and maintaining reliability under pressure. Let’s dive into some key approaches.

Microservices Architecture

Breaking your API into smaller, independent services allows each one to handle a specific function. This means you can scale or update parts of your system without affecting the rest. For example, during the busy house-hunting season, you might need to allocate extra resources to your property search service while leaving other services, like a mortgage calculator, untouched.

Another major perk? Fault isolation. If one service fails, like the mortgage calculator, it won’t take down the entire platform. Plus, updates can be deployed to individual services without needing to rebuild the whole system. Microsoft Azure explains it well:

Microservices are small, independent, and loosely coupled components that a single small team of developers can write and maintain. (Microsoft Azure)

Xome’s Property Insights API, described earlier, is a practical example: its containerized services manage up to 1 million requests daily.

For microservices to work seamlessly, it helps to organize them around business domains using Domain-Driven Design (DDD). This involves identifying "Bounded Contexts" – distinct areas like Listings, Users, or Payments. Each service should manage its own data and schema to avoid creating dependencies that make scaling harder. An API gateway can handle shared tasks like authentication and rate limiting, letting each service stay focused on its primary purpose.

Once your microservices are in place, pairing them with a solid cloud infrastructure takes scalability even further.

Cloud Infrastructure

Cloud platforms like AWS and Google Cloud give you the tools to scale your API without worrying about physical servers. Managed services such as Amazon Aurora Serverless v2 or Google Cloud SQL take care of redundancy and backups across multiple zones, so your team can focus on building features instead of infrastructure.

Choosing the right compute option is important. For tasks that occur sporadically – like processing property images or refreshing listings – serverless options (think AWS Lambda or Cloud Run) are a great fit. On the other hand, for APIs with steady, high traffic, container orchestration solutions like AWS Fargate or Google Kubernetes Engine avoid the delays caused by serverless "cold starts."

To streamline infrastructure management, tools like Terraform or AWS CDK let you automate resource provisioning and maintain consistency across environments. Event-driven architectures using tools like Amazon SQS or EventBridge further decouple your system, ensuring that one service’s failure doesn’t crash everything else.

Cost efficiency is another crucial factor. It’s common for cloud resources to be over-provisioned by 30%–45%. To cut costs, use VPC Endpoints to keep traffic within your private network, avoiding expensive NAT Gateway fees. For APIs handling heavy traffic, Application Load Balancers can save you money – they’re up to 10 times cheaper than Amazon API Gateway, which typically costs $1.00–$3.50 per million requests.

Containerization with Docker and Kubernetes

To build on the flexibility of cloud infrastructure, containerization with tools like Docker and Kubernetes ensures rapid and consistent scalability. Containers bundle your API with all its dependencies, making deployments seamless across different environments. This speeds up the deployment process significantly.

Kubernetes takes care of the heavy lifting when it comes to managing these containers. It can automatically scale resources to handle traffic spikes, which is essential for real estate platforms that might see unpredictable surges in searches. For example, containerized setups have been shown to handle loads on the order of 100,000 requests per hour and 1 million requests per day.

One of the biggest benefits of containerization is resource isolation. It ensures that demanding tasks like processing high-resolution images don’t hog resources needed by other services. Containers also start up in seconds, much faster than traditional virtual machines, making them ideal for situations that require quick scaling.

Using managed Kubernetes services like Amazon EKS, Google Kubernetes Engine, or Azure Kubernetes Service can reduce the time and effort needed to maintain your infrastructure. By configuring Horizontal Pod Autoscaling (HPA), you can automatically adjust the number of running pods based on resource usage, ensuring your system keeps up with demand. On top of that, adding container-level security with detailed permissions helps protect your services from potential threats.
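
To make the HPA behavior concrete, the sketch below reproduces the scaling formula described in the Kubernetes documentation: desired replicas = ceil(current replicas × current metric / target metric). The function name, the replica bounds, and the example numbers are illustrative assumptions; the 10% tolerance mirrors the documented default.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Approximate the replica count an HPA would request."""
    ratio = current_metric / target_metric
    # Small deviations from the target are ignored to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * ratio)
    # The result is always clamped to the configured bounds.
    return max(min_replicas, min(max_replicas, wanted))
```

For example, four pods averaging 90% CPU against a 60% target would scale to six pods, while the same pods at 62% CPU would stay at four because the deviation is within tolerance.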

Performance Optimization Strategies

Once your architecture is set up, the next challenge is making sure your API can handle traffic spikes without breaking a sweat. Real estate platforms often face unpredictable surges – like during open house weekends or big market updates – so your system needs to stay responsive under pressure. Smart optimization techniques can shrink response times from seconds to milliseconds and reduce database load by over 90%.

Caching for Property Queries

One of the fastest ways to boost API performance? Avoid hitting your database altogether. Caching stores frequently accessed property data in memory, allowing repeated requests to get near-instant responses. This approach not only cuts database load by over 90% but also delivers responses in milliseconds. As Easyparser puts it:

The fastest API call is the one you never have to make. The second fastest is the one that hits a cache.

A multi-layer caching setup is ideal for real estate APIs. Start with browser caching for static assets and user-specific data. Then, use CDN caching at edge servers for public images and API responses. For frequently accessed "hot" data, in-memory application-level caching works best. Finally, distributed caching solutions like Redis or Memcached can handle session data and rate limiting across multiple servers.

Not all property data updates at the same pace, so tailor your cache TTLs (time-to-live) accordingly. Static details like square footage or year built can be cached for weeks or even months, while active listings and prices might need shorter TTLs – around 5–15 minutes – to stay accurate. Dynamic TTLs can further improve response times while keeping data fresh.
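
A minimal in-memory sketch of per-category TTLs is shown below. The category names and TTL values are assumptions chosen to match the ranges above; in production, a store like Redis would typically hold the entries and handle expiry.

```python
import time

# Hypothetical TTLs in seconds, following the ranges discussed above.
TTL_SECONDS = {
    "static": 30 * 24 * 3600,  # square footage, year built
    "listing": 10 * 60,        # active listings and prices
}


class TTLCache:
    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def set(self, key, value, kind):
        self._entries[key] = (self._clock() + TTL_SECONDS[kind], value)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self._clock() >= expires_at:
            # Expired: drop the entry so the caller reloads from the database.
            del self._entries[key]
            return None
        return value
```

Passing the clock in as a parameter keeps the cache easy to test, since expiry can be simulated without waiting.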

HTTP caching standards, like Cache-Control headers and ETags, let clients reuse data without hitting your servers unnecessarily. ETags, for example, act as version fingerprints for resources. If the data hasn’t changed, the server can return a 304 Not Modified response, saving bandwidth. Instead of hashing entire datasets, use metadata like the updated_at timestamp to generate ETags and reduce processing overhead.
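
The conditional-request flow might look like the following sketch. The record shape, the function names, and the max-age value are hypothetical, and the code assumes updated_at changes whenever the record changes.

```python
import hashlib


def make_etag(property_id, updated_at):
    """Build an ETag from cheap metadata instead of hashing the full payload."""
    raw = f"{property_id}:{updated_at}".encode("utf-8")
    return '"' + hashlib.sha256(raw).hexdigest()[:16] + '"'


def respond(record, if_none_match=None):
    """Return (status, headers, body) for a conditional GET.

    Simplification: treats If-None-Match as a single ETag value,
    although the header may legally carry a comma-separated list.
    """
    etag = make_etag(record["id"], record["updated_at"])
    headers = {"ETag": etag, "Cache-Control": "max-age=300"}
    if if_none_match == etag:
        return 304, headers, None  # client copy is still current
    return 200, headers, record
```

A client that stores the ETag from its first response and sends it back in If-None-Match receives an empty 304 until the record's timestamp changes.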

Breaking large property objects into smaller, independent resources can also improve cache efficiency. For instance, separate property attributes from payment history or owner data. This way, stable information gets more cache hits, while dynamic data refreshes independently. High cache hit rates are key to keeping performance strong.

For even faster responses, you can use the stale-while-revalidate pattern. This method serves cached data immediately while refreshing the cache in the background, so high-demand property searches get near-instant responses at the cost of occasionally returning slightly stale data.
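
One way to sketch stale-while-revalidate in application code is shown below. The SWRCache class, its loader callback, and the run_async hook are all hypothetical; HTTP caches and CDNs can provide the same behavior through the stale-while-revalidate Cache-Control extension.

```python
import threading
import time


class SWRCache:
    def __init__(self, loader, fresh_for, clock=time.monotonic, run_async=None):
        self._loader = loader          # function that fetches a value by key
        self._fresh_for = fresh_for    # seconds before an entry counts as stale
        self._clock = clock
        self._run_async = run_async or self._spawn_thread
        self._entries = {}             # key -> (fetched_at, value)
        self._refreshing = set()       # keys with a refresh already scheduled
        self._lock = threading.RLock()

    @staticmethod
    def _spawn_thread(task):
        threading.Thread(target=task, daemon=True).start()

    def _refresh(self, key):
        try:
            value = self._loader(key)
            with self._lock:
                self._entries[key] = (self._clock(), value)
            return value
        finally:
            with self._lock:
                self._refreshing.discard(key)

    def get(self, key):
        with self._lock:
            entry = self._entries.get(key)
            if entry is not None:
                fetched_at, value = entry
                stale = self._clock() - fetched_at > self._fresh_for
                if stale and key not in self._refreshing:
                    # Serve the stale value now; refresh in the background.
                    self._refreshing.add(key)
                    self._run_async(lambda: self._refresh(key))
                return value
        # Nothing cached yet: the first caller has to wait for the loader.
        return self._refresh(key)
```

Tracking in-flight refreshes prevents a popular listing from triggering one backend call per request while its entry is stale.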

With caching in place, the next step is minimizing payload size to further reduce latency.

Payload Optimization

Efficient payloads are just as important as caching for speeding up your API. Trimming down large data payloads can boost throughput by up to 30% for scalable real estate platforms. A simple yet effective method is field-level filtering – let clients request only the fields they need, like address and price, instead of sending the entire property object. This can reduce payload size by 60% while preserving essential data.
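
Field-level filtering can be as simple as the sketch below, which assumes a hypothetical fields query parameter such as ?fields=address,price. The parameter name and the sample record are illustrative only.

```python
def select_fields(record, fields_param=None):
    """Return only the requested top-level fields of a property record."""
    if not fields_param:
        return dict(record)  # no filter requested: return everything
    wanted = [name.strip() for name in fields_param.split(",") if name.strip()]
    # Unknown field names are ignored rather than treated as errors.
    return {name: record[name] for name in wanted if name in record}


listing = {
    "address": "123 Main St",
    "price": 450000,
    "tax_history": [{"year": 2023, "amount": 5200}],
    "owner": {"name": "Sample Owner"},
}
summary = select_fields(listing, "address, price")
```

Here summary contains only the address and price, so large nested sections like tax history never leave the server unless a client asks for them.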

Homesage.ai, for example, uses a tiered API with lightweight endpoints to filter properties before pulling detailed reports. This approach saves both time and API costs.

Data compression is another game-changer. Using Gzip can shrink JSON response sizes by 50%–80%, while Brotli achieves 70%–90% compression and is 17%–25% more efficient than Gzip for text-based content. Netflix saw a 50% boost in API success rates after introducing EVCache, a distributed caching solution that reduced backend load and improved response times.
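
Python's standard library covers Gzip, so a minimal sketch of response compression is shown below. The compress_json helper is hypothetical, and Brotli is omitted because it requires a third-party package. Actual savings depend on how repetitive the payload is.

```python
import gzip
import json


def compress_json(payload, accept_encoding=""):
    """Serialize a payload and gzip it when the client advertises support."""
    body = json.dumps(payload, separators=(",", ":")).encode("utf-8")
    headers = {"Content-Type": "application/json", "Vary": "Accept-Encoding"}
    # Simplified check: ignores quality values such as "gzip;q=0".
    if "gzip" in accept_encoding.lower():
        body = gzip.compress(body)
        headers["Content-Encoding"] = "gzip"
    return headers, body
```

Most web servers, gateways, and CDNs can apply compression automatically, so in practice this logic usually lives in configuration rather than application code.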

For internal communications, binary formats like Protocol Buffers or Apache Avro can reduce payload sizes by up to 10× and speed up parsing.

Keep JSON structures simple – limit nesting to three or four levels. This reduces parsing complexity and can cut processing bottlenecks by up to 30%. Instead of embedding full objects, use reference IDs. For example, link to a user by their ID rather than including all their details in every property comment. This alone can shrink data volume by about 25%.

Optimizing payloads ensures your API stays fast and efficient, even during traffic surges.

Rate Limiting and Throttling

After fine-tuning data retrieval and transmission, it’s crucial to protect your API from being overwhelmed. Rate limiting acts as a safeguard against traffic spikes, whether caused by malicious attacks or client-side bugs. It prevents resource exhaustion and keeps performance consistent for all users. As Moesif explains:

API rate limiting is critical for maintaining system stability and performance, preventing overuse and protecting against DoS attacks.

Rate limiting rejects excessive requests with a 429 Too Many Requests status, while throttling queues them to maintain stability. Together, these strategies can boost API success rates by up to 50%. For example, Twitter allows 900 requests per 15 minutes on certain endpoints, while GitHub caps usage at 5,000 requests per hour per user access token.

When a client exceeds their limit, include a Retry-After header in your 429 response to specify how long they should wait before retrying. Use headers like X-RateLimit-Limit, X-RateLimit-Remaining, and X-RateLimit-Reset to give clients real-time visibility into their usage.

Instead of limiting by IP address – which can be shared by multiple users – use User ID or API Key for better precision. For distributed systems, a centralized store like Redis can synchronize request counts across multiple servers.
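
The sketch below shows per-key limiting with the response headers described above, using a fixed-window counter for brevity. The class name is hypothetical and the counts live in process memory; a distributed deployment would keep them in a shared store such as Redis.

```python
import math
import time


class FixedWindowLimiter:
    def __init__(self, limit, window_seconds, clock=time.time):
        self.limit = limit
        self.window = window_seconds
        self._clock = clock
        self._state = {}  # api_key -> (window_start, requests_used)

    def check(self, api_key):
        """Return (status_code, headers) for one request from api_key."""
        now = self._clock()
        window_start = int(now // self.window) * self.window
        reset_at = window_start + self.window
        start, used = self._state.get(api_key, (window_start, 0))
        if start != window_start:
            used = 0  # a new window has begun
        headers = {
            "X-RateLimit-Limit": str(self.limit),
            "X-RateLimit-Reset": str(int(reset_at)),
        }
        if used >= self.limit:
            headers["X-RateLimit-Remaining"] = "0"
            headers["Retry-After"] = str(max(1, math.ceil(reset_at - now)))
            return 429, headers
        self._state[api_key] = (window_start, used + 1)
        headers["X-RateLimit-Remaining"] = str(self.limit - used - 1)
        return 200, headers
```

Because the limiter is keyed by API key, two clients behind the same IP address each get their own quota.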

| Algorithm | Best For | Pros | Cons |
|---|---|---|---|
| Fixed Window | Simple cases | Easy to implement; low memory | Spikes at boundaries |
| Sliding Window | Precision | Prevents boundary abuse | Higher memory usage |
| Token Bucket | Bursty traffic | Allows short-term flexibility | Complex synchronization |
| Leaky Bucket | Steady flow | Smooths traffic rates | Potential delays for valid requests |

The Token Bucket algorithm is particularly effective for real estate APIs because it allows short-term bursts – like when users quickly scroll through listings – while maintaining overall stability. Encourage clients to implement retry logic with exponential backoff (e.g., 1s, 2s, 4s) to avoid "thundering herd" issues when multiple clients retry simultaneously.
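
A single-process sketch of both ideas follows. The class and function names are hypothetical, and the random jitter option is an addition not mentioned above: it is a common way to keep clients from retrying in lockstep.

```python
import random
import time


class TokenBucket:
    def __init__(self, capacity, refill_per_second, clock=time.monotonic):
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self._clock = clock
        self._tokens = float(capacity)  # start full so bursts are allowed
        self._last = clock()

    def allow(self, cost=1.0):
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill based on elapsed time, never exceeding capacity.
        self._tokens = min(self.capacity,
                           self._tokens + elapsed * self.refill_per_second)
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False


def backoff_delays(retries, base=1.0, cap=30.0, jitter=True):
    """Client-side delays: 1s, 2s, 4s, ... capped, optionally randomized."""
    delays = []
    for attempt in range(retries):
        delay = min(cap, base * 2 ** attempt)
        delays.append(random.uniform(0, delay) if jitter else delay)
    return delays
```

The bucket's capacity sets how large a burst is tolerated, while the refill rate sets the sustained request rate, so the two can be tuned independently.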


Security and Compliance Requirements

Once performance is optimized, the next step is to secure your API and ensure it meets regulatory standards. Real estate APIs manage sensitive data like financial records, personal information, and proprietary listings, making them attractive targets for threats such as phishing, SQL injection, and IoT vulnerabilities. As Angelika Agapow from Hicron Software puts it:

Security and responsiveness aren’t just technical features – they’re foundational pillars that define the success of any cloud‐based property management platform.

A strong security framework not only protects users but also ensures compliance with regulations like GDPR and CCPA, which impose strict rules on data handling.

Authentication and Authorization

Securing your API starts with controlling access and defining user permissions. OAuth 2.0 is now the standard for real estate APIs, replacing older methods like the RETS login system, which dates back over two decades. Scott Lockhart, CEO of Showcase IDX, highlights this shift:

The tokenized authentication (OAuth) is much more secure than the standard login in RETS. Moving to the Web API is the future.

However, not all OAuth implementations are equally secure. As of January 2025, RFC 9700 officially deprecated several OAuth 2.0 modes due to vulnerabilities. For example, the Resource Owner Password Credentials grant exposes user credentials to the client and should never be used. Similarly, the Implicit Grant is prone to token leakage and replay attacks. The recommended approach is to use the Authorization Code Grant with PKCE (Proof Key for Code Exchange). Public clients, such as mobile apps or single-page applications, should implement PKCE with the S256 challenge method to prevent authorization code injection and CSRF attacks. Even confidential clients are encouraged to adopt PKCE for additional security.
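
Generating the PKCE values on the client takes only the standard library. The sketch below follows the S256 method defined in RFC 7636; the function names are illustrative.

```python
import base64
import hashlib
import secrets


def make_code_verifier():
    """Create a random verifier: 32 bytes become 43 URL-safe characters."""
    raw = secrets.token_bytes(32)
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def make_code_challenge(verifier):
    """S256: base64url-encode the SHA-256 hash of the verifier, no padding."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
```

The client sends the challenge (with code_challenge_method=S256) in the authorization request and the original verifier in the token request, so an intercepted authorization code is useless without the verifier.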

For authorization, Role-Based Access Control (RBAC) is essential. It ensures users only access data they’re authorized to see. For example, a property manager might access tenant records, while an analytics partner is restricted to aggregated market data [29,30].
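
At its simplest, RBAC is a lookup from role to permitted actions. The role and permission names below are hypothetical and only mirror the example in the text.

```python
# Hypothetical roles and permissions for illustration.
ROLE_PERMISSIONS = {
    "property_manager": {"tenant_records:read", "listings:read"},
    "analytics_partner": {"market_aggregates:read"},
}


def is_allowed(role, permission):
    """Deny by default: unknown roles get an empty permission set."""
    return permission in ROLE_PERMISSIONS.get(role, set())
```

Denying unknown roles by default means a misconfigured or newly added role exposes nothing until permissions are granted explicitly.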

To further enhance security, consider the following practices:

- Use exact string matching for redirection URIs to prevent token/code leakage.

- Enforce audience restrictions using the aud claim in tokens.

- Rotate refresh tokens for public clients to mitigate the impact of stolen tokens.

- Implement asymmetric client authentication methods like Private Key JWT or Mutual TLS.

| Security Mechanism | Requirement Level | Purpose |
|---|---|---|
| PKCE (RFC 7636) | MUST (Public) / RECOMMENDED (Confidential) | Prevents code injection and CSRF |
| Exact Redirect Matching | MUST | Prevents token/code leakage |
| Refresh Token Rotation | MUST (for Public Clients) | Mitigates impact of stolen refresh tokens |
| Audience Restriction | SHOULD | Limits token validity to intended resources |
| Asymmetric Client Authentication | RECOMMENDED | Enhances protection against key leakage |

Additionally, use OAuth Authorization Server Metadata (RFC 8414) to simplify the configuration of security features and enable key rotation.

While securing user access is critical, protecting the data itself – both in transit and at rest – is equally important.

Data Encryption and HTTPS

Encryption is a cornerstone of data protection. HTTPS must be enforced for all API endpoints to ensure that authorization responses and data transmissions are not exposed over unencrypted channels. Using Transport Layer Security (TLS) encrypts communications between API servers and clients, safeguarding against man-in-the-middle attacks [29,32,34].

For data stored in databases, the Advanced Encryption Standard (AES) is the go-to method for securing sensitive information. Both AES and TLS not only protect data but also align with GDPR and CCPA requirements [29,32].

To maintain system stability during traffic spikes, apply per-user quotas and adaptive throttling. Isolating API traffic from the core MLS database can further enhance reliability.

With encryption protocols in place, aligning with industry standards provides an additional layer of security.

RESO Standards Compliance

The Real Estate Standards Organization (RESO) establishes guidelines for secure and interoperable data exchange within the real estate sector. Following RESO standards – such as the RESO Web API Core 2.0.0 and Data Dictionary 2.0 or 2.1 – ensures smooth integration with MLS platforms, property management systems, and third-party tools. The industry is moving away from the legacy RETS protocol in favor of the modern RESO Web API, which uses RESTful principles and JSON formats [31,37].

As Roman Romanenko, Senior Software Engineer, explains:

Because the RESO Data Dictionary is relatively new – first released in 2011 – most MLS systems were already operating long before it existed… developing a conversion layer for the RESO Web API offers immediate compliance and business value.

Legacy systems often require a conversion layer to map non-standard fields (e.g., "Available" vs. "Active") to RESO-approved terminology. Furthermore, the RESO Web API Security Standard mandates OAuth 2.0, emphasizing the need for tokenized access, typically implemented via OAuth2 Client Credentials [35,38].
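
A conversion layer of this kind is largely a set of lookup tables. In the sketch below, the legacy field names and status values are invented for illustration, and the RESO-side names should be verified against the Data Dictionary version you target.

```python
# Hypothetical legacy-to-RESO mappings; verify against the Data Dictionary.
FIELD_MAP = {
    "status": "StandardStatus",
    "price": "ListPrice",
    "zip": "PostalCode",
}
STATUS_MAP = {
    "Available": "Active",
    "Under Contract": "Pending",
    "Sold": "Closed",
}


def to_reso(legacy_record):
    """Rename legacy fields and normalize status values."""
    converted = {}
    for legacy_name, value in legacy_record.items():
        reso_name = FIELD_MAP.get(legacy_name)
        if reso_name is None:
            continue  # unmapped fields are dropped in this sketch
        if reso_name == "StandardStatus":
            value = STATUS_MAP.get(value, value)
        converted[reso_name] = value
    return converted
```

A production layer would typically log or reject unmapped fields and unknown status values instead of silently passing them through.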

Adhering to RESO standards not only ensures compatibility but also strengthens the API’s framework.

| Compliance/Security Measure | Technical Implementation | Business Benefit |
|---|---|---|
| Authentication | OAuth2 / Multi-Factor Authentication | Prevents unauthorized access and identity theft |
| Data Standards | RESO Data Dictionary Mapping | Ensures compatibility across MLS platforms |
| Traffic Control | Quotas and Exponential Backoff | Maintains system stability during high demand |
| Data Protection | AES Encryption and TLS | Meets GDPR and CCPA requirements |
| Payload Design | Selective/Tiered Payloads | Reduces bandwidth costs and improves performance |

To stay compliant, monitor RESO updates and incorporate certification testing into your development cycle. This proactive approach reduces the risk of integration issues and technical debt.

With robust security and compliance measures in place, you’re ready to implement monitoring and testing protocols to ensure your API performs reliably under various conditions.

Monitoring, Testing, and Integration

Even the most secure API can falter without consistent monitoring, thorough testing, and seamless integration with third-party services. APIs now handle about 83% of all web traffic, yet one in five companies has faced a serious API-related outage in the past three years. For real estate platforms managing property searches, skip tracing, or transaction data, downtime can mean lost leads and revenue. These measures help ensure your API can handle growing user demand without missing a beat.

Real-Time Monitoring Tools

Monitoring isn’t just about checking uptime. To truly understand performance, you need to track the "Golden Signals": Availability (success rate), Latency (response time), Errors (4xx/5xx status codes), Saturation (resource usage), and Correctness (accuracy of returned data). A "200 OK" response doesn’t mean much if critical fields like price or status are missing.

Geographic monitoring is also crucial. By tracking performance across regions, you can spot and fix location-specific issues before users notice. For example, your API might perform well in New York but show a 500ms latency in California – distributed monitoring helps uncover these gaps. Keep an eye on P99 latency, the response time that 99% of requests stay under, which captures the experience of your slowest 1% of requests. If P99 latency exceeds 500ms, conversion rates can drop.

Tools like OpenTelemetry, Jaeger, or Zipkin allow you to pinpoint delays quickly through distributed tracing. Martin Norato Auer, VP of CX Observability Services at SAP, highlights the impact of real-time alerts:

We get Catchpoint alerts within seconds when a site is down. And we can, within three minutes, identify exactly where the issue is coming from and inform our customers and work with them.

To avoid overwhelming your team, set up rolling alert windows (5–15 minutes) and use dynamic thresholds based on historical trends. Route critical alerts to SMS or PagerDuty while sending less urgent updates to Slack or email. Define clear Service Level Indicators (SLIs) and Service Level Objectives (SLOs), such as 99.9% uptime, P95 response times under 200ms, and error rates below 0.1%.
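
Checking a window of measurements against these objectives could look like the sketch below. The function names are hypothetical, the thresholds default to the example SLOs above, and the percentile uses the simple nearest-rank method rather than whatever a given monitoring tool implements.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def check_slos(latencies_ms, error_count, total_requests,
               p95_target_ms=200, max_error_rate=0.001):
    """Summarize one monitoring window against latency and error SLOs."""
    p95 = percentile(latencies_ms, 95)
    error_rate = error_count / total_requests
    return {
        "p95_ms": p95,
        "p99_ms": percentile(latencies_ms, 99),
        "error_rate": error_rate,
        "p95_ok": p95 < p95_target_ms,
        "errors_ok": error_rate < max_error_rate,
    }
```

The same check can back an alert rule or a CI gate: if either flag is false for the window, page the on-call engineer or fail the build.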

Once you have performance data, the next step is rigorous testing to ensure your API remains reliable.

Testing Protocols

Effective testing goes beyond individual components. It starts with endpoint testing, moves to multi-service interactions, and ends with full workflows that mimic real user actions, like searching for properties or saving favorites.

Simulate real-world traffic using models like Virtual Users (VUs) or Constant Arrival Rate. Different test types address specific needs:

- Smoke tests: Verify basic functionality under light load.

- Stress tests: Measure performance during peak traffic.

- Spike tests: Check how the system handles sudden surges.

- Soak tests: Run sustained loads over hours or days to uncover memory leaks or resource issues.

Automate these tests within CI/CD pipelines to catch regressions early. For instance, if P95 latency or error rates exceed thresholds, the build should fail automatically. Abhinav Asthana, CEO of Postman, emphasizes:

You can’t build reliable distributed systems without comprehensive API testing, and you can’t scale testing across teams without systematic automation.

Contract testing is another essential step. It ensures that changes in one service don’t break others. For example, if a property search API expects a propertyId field but another service renames it to listingId, contract tests will catch the issue before deployment.
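
A hand-rolled version of such a check is sketched below. The expected fields are hypothetical, and dedicated contract-testing tools offer far more, but the core idea is a shared description of what the consumer depends on.

```python
# Hypothetical contract: fields the search consumer relies on.
EXPECTED_FIELDS = {
    "propertyId": str,
    "price": (int, float),
    "status": str,
}


def contract_violations(response):
    """List every way a response breaks the consumer's expectations."""
    problems = []
    for name, expected_type in EXPECTED_FIELDS.items():
        if name not in response:
            problems.append(f"missing field: {name}")
        elif not isinstance(response[name], expected_type):
            problems.append(f"wrong type for {name}")
    return problems
```

Running this against the provider's responses in CI would flag the propertyId to listingId rename as a missing field before it reaches production.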

Dynamic test data is key – replace hard-coded values with a pool of varied inputs, such as a SharedArray of user IDs in k6, to avoid caching skewing results. Use mocks or stubs to isolate your API’s performance from unreliable third-party services, testing edge cases like timeouts or server errors. Schema validation (using JSON Schema or OpenAPI) ensures the API delivers the correct data structure, even under heavy load.

Third-Party Integrations

Real estate APIs often rely on integrations with services like MLS for property data, payment gateways, geolocation tools, and CRM platforms. Each integration brings potential risks that must be monitored.

Synthetic and Real-User Monitoring (RUM) offer complementary insights. Synthetic probes provide consistent baseline checks, while RUM captures real user experiences, including network variability and device-specific latency. Monitor third-party dependencies separately to avoid wasting time troubleshooting external issues, such as a skip tracing service going down or outdated property tax data feeds.

Run production-style synthetic checks in staging environments to catch problems early. Use API monitors as release gates – if a new deployment increases latency or fails assertions, the pipeline should halt or trigger a rollback. Store monitoring configurations in version control (like Git) to maintain consistency across environments and enable peer reviews.

Finally, ensure all logs and traces include consistent correlation IDs. This allows you to track a single user’s request across multiple services, making troubleshooting much easier.
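
With Python's standard logging module, one way to attach a correlation ID to every log line is sketched below. The helper names and log format are assumptions; the incoming ID would normally come from a request header set by the gateway or the calling service.

```python
import contextvars
import logging
import uuid

# Holds the ID for the request currently being handled.
correlation_id = contextvars.ContextVar("correlation_id", default="-")


class CorrelationFilter(logging.Filter):
    """Copies the current correlation ID onto every log record."""

    def filter(self, record):
        record.correlation_id = correlation_id.get()
        return True


def start_request(incoming_id=None):
    """Reuse the caller's ID when present, otherwise create a new one."""
    cid = incoming_id or uuid.uuid4().hex
    correlation_id.set(cid)
    return cid


def build_logger(name="api", stream=None):
    logger = logging.getLogger(name)
    handler = logging.StreamHandler(stream)
    handler.setFormatter(
        logging.Formatter("%(levelname)s [%(correlation_id)s] %(message)s"))
    handler.addFilter(CorrelationFilter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    return logger
```

Each service should also forward the same ID on its outgoing calls, so that a search across all logs for one ID reconstructs the full path of a request.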

BatchData’s Scalable API Solutions

BatchData brings scalable real estate data to life by combining horizontal scaling with performance-focused design. Managing a colossal database of 155 million property records, each packed with over 700 attributes and updated daily, the platform showcases how real estate APIs can effectively handle diverse industry needs.

Property Search API Scalability

BatchData’s Property Search API is built on a low-latency REST architecture, ensuring high availability and real-time responsiveness for transactional queries. Standard plans come with generous rate limits, while enterprise users benefit from dedicated infrastructure and customized rate limits tailored to their specific needs. The API delivers property data with 300+ data points – ranging from valuations and tax history to ownership records and construction details – while maintaining lightning-fast response times for user-facing applications.

To make integration seamless, BatchData offers Python and Node.js SDKs, interactive documentation, and a developer portal for testing API calls before deployment. Highlighting the platform’s efficiency, Chris Finck, Director of Product Management, notes:

What used to take 30 minutes now takes 30 seconds. BatchData makes our platform superhuman.

The API also incorporates tools for USPS address standardization, CASS certification, and DNC/litigator scrubbing, ensuring compliance with industry standards.

Bulk Data Delivery and Custom Datasets

For teams working on analytics and machine learning, BatchData simplifies data handling with direct cloud delivery to major cloud storage and warehouse platforms. This eliminates the need for complex ETL processes, enabling data teams to work with up-to-date datasets in their preferred environments.

The bulk delivery system is especially useful for portfolio analysis and predictive modeling. Users can create custom datasets from the platform’s 700+ attributes, focusing on specific criteria like financial distress signals, high equity properties, or detailed construction data. BatchData’s contact data boasts a 76% right-party accuracy rate – three times higher than the industry average – boosting outreach campaign effectiveness. By normalizing data upfront, the platform reduces processing demands, making enterprise-grade property intelligence scalable and cost-efficient.

Professional Services for API Optimization

BatchData goes beyond data delivery by offering professional services to help organizations optimize their real estate platforms. Their team provides hands-on support, including system audits to identify improvement areas, custom development for PropTech MVPs, and data pipeline integration for seamless property intelligence workflows. Personalized onboarding and strategic support ensure that businesses can scale with confidence.

Enterprise customers enjoy perks like dedicated account managers, custom contract terms, and SLA guarantees, ensuring reliability for mission-critical applications. With flexible pricing options – including pay-as-you-go plans and custom enterprise packages with dedicated infrastructure – BatchData accommodates businesses of all sizes, from small teams to large enterprises. Additional services include data enrichment, normalization, address cleansing, and strategic go-to-market support for maximum efficiency.

Conclusion

Creating scalable real estate APIs demands a thoughtful blend of architecture, performance, and security. Domain-driven design helps isolate core business logic, which keeps the API maintainable over time. Packaging services in Docker containers and orchestrating them with Kubernetes lets each service scale independently and absorb traffic surges. To maintain speed and prevent overload, cache frequently accessed property data and enforce strict rate limits. These foundational decisions drive performance and set the stage for strong security measures.
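As a rough illustration of the rate-limiting point above, here is a minimal sketch of a token bucket, one common way to enforce a per-client limit. The article does not say which algorithm any particular platform uses; the class name, parameters, and injectable clock are assumptions made for this example.

```python
import time


class TokenBucket:
    """Hypothetical per-client limiter: bursts up to `capacity`,
    refilled at `rate` tokens per second."""

    def __init__(self, capacity: int, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock  # injectable so the behavior can be tested
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1  # spend one token for this request
            return True
        return False  # caller would typically answer with HTTP 429
```

In practice one bucket would be kept per API key, and a shared store would be needed once the API runs on more than one server.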

Security, however, isn’t just an afterthought – it’s integral. Danielle Borges, Business Development Director at Codence, highlights this perfectly:

Security is not an add-on; it’s your new enterprise perimeter.

Using OAuth 2.0 for authorization and TLS-encrypted transport (HTTPS) keeps sensitive financial information protected. Additionally, adhering to RESO standards helps keep data consistent across real estate platforms and MLS feeds.

On the business side, the growing API market reflects the increasing demand for scalable solutions. With projections showing growth from $2 billion to $7 billion by 2033 at a 15% annual rate, it’s clear that real-time property intelligence and seamless integrations are becoming essential.

A great example of these principles in action is BatchData, which demonstrates how robust design and scalability can drive success. With access to over 155 million property records and extensive contact data, BatchData’s Property Search API combines horizontal scaling with a low-latency REST architecture, backed by professional optimization services. Whether you’re building a PropTech MVP or scaling an enterprise, BatchData’s daily updates, developer-friendly tools, and pay-as-you-go pricing make it a reliable choice for businesses at any stage of growth.

FAQs

When should I use microservices vs a monolith for a real estate API?

When you’re starting a project with a smaller scope or need to focus on simplicity and quick development, a monolithic architecture is a solid choice. A well-structured monolith can scale a long way before it needs to be split up.

As your system grows or demands greater scalability and flexibility, transitioning to microservices might make more sense. Microservices allow independent deployments and a modular design, so a heavily used component can be scaled without touching the rest of the system. However, they come with added operational challenges. For instance, if you’re building real estate APIs that need to manage high traffic and real-time updates, microservices could be the way to go, provided your team is equipped to handle the extra complexity.

What’s the best caching strategy for frequently changing listing data?

To balance data freshness and performance, combine cache-aside (lazy caching) with real-time updates. With cache-aside, the application checks the cache first and, on a miss, fetches the listing from the source and stores it in the cache, which cuts down on API calls and speeds up response times. Setting an appropriate TTL (Time-To-Live) on each entry limits how long stale data can be served.

For dynamic environments – like fluctuating markets – real-time data streaming or shorter TTLs are key. These methods keep listings up-to-date, allowing your APIs to respond quickly with precise, current information. This combination ensures both efficiency and reliability.
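The cache-aside approach with TTLs described above can be sketched as follows. This is a minimal in-memory illustration, not a production design: `ListingCache`, the `loader` callable, and the `invalidate` hook are hypothetical names, and a real deployment would more likely use a shared cache such as Redis.

```python
import time
from typing import Any, Callable, Dict, Tuple


class ListingCache:
    """Hypothetical cache-aside wrapper with a per-entry TTL."""

    def __init__(self, loader: Callable[[str], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self._loader = loader      # assumed function that fetches from the source
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get(self, listing_id: str) -> Any:
        now = self._clock()
        entry = self._entries.get(listing_id)
        if entry is not None and entry[0] > now:
            return entry[1]        # cache hit, still within its TTL
        # Miss or expired: load from the source, then populate the cache.
        value = self._loader(listing_id)
        self._entries[listing_id] = (now + self._ttl, value)
        return value

    def invalidate(self, listing_id: str) -> None:
        # Call this when a real-time update arrives for the listing.
        self._entries.pop(listing_id, None)
```

A short TTL caps how stale a listing can get, while `invalidate` lets a streaming update evict an entry immediately instead of waiting for it to expire.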

How do I meet RESO compliance while keeping OAuth 2.0 secure?

To meet RESO requirements while keeping OAuth 2.0 secure, adopt a few best practices. Handle tokens securely: store them safely and never expose them in URLs. Authenticate clients properly by verifying client credentials and applying robust validation. Regular security assessments are also essential to identify and mitigate vulnerabilities.

Always use HTTPS to encrypt data in transit, preventing interception. Use token expiration and refresh mechanisms to limit the damage a compromised token can do. Additionally, follow RFC 9700, the IETF’s Best Current Practice for OAuth 2.0 Security, so your implementation aligns with established guidance.

Keep up with updates to RESO’s security standards and consult their transition guides. These steps will help you safeguard data integrity and privacy while securely integrating with the RESO Web API.
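To make the token expiration and refresh advice concrete, here is a minimal client-side sketch. It assumes a hypothetical `fetch_token` callable that performs the actual OAuth 2.0 token request and returns an access token with its lifetime in seconds; no real endpoint or SDK call is shown, and the names are illustrative only.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class TokenManager:
    """Hypothetical helper that renews an access token shortly before it expires."""

    def __init__(self, fetch_token: Callable[[], Tuple[str, float]],
                 skew_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch_token = fetch_token  # assumed: returns (access_token, expires_in)
        self._skew = skew_seconds        # renew this many seconds early
        self._clock = clock
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def get_token(self) -> str:
        now = self._clock()
        if self._token is None or now >= self._expires_at - self._skew:
            token, expires_in = self._fetch_token()
            self._token = token
            self._expires_at = now + expires_in
        return self._token

    def auth_header(self) -> Dict[str, str]:
        # Send the token in the Authorization header, never in the URL.
        return {"Authorization": f"Bearer {self.get_token()}"}
```

Keeping the token in memory and sending it only in a header follows the secure-handling points above. Where the token is persisted, if at all, depends on the deployment.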