Batch geocoding is a fast and efficient way to convert large property datasets into usable geographic coordinates. This process is essential for real estate professionals who need accurate location data for tasks like spatial analysis, risk management, and targeted marketing. Handling datasets of up to 1 million properties requires cleaning and standardizing addresses, validating data for accuracy, and using high-performance tools designed for large-scale processing.

Key Takeaways:

- Batch Geocoding Benefits: Automates address processing, reduces errors, and speeds up analysis. Advanced systems process up to 25,000 addresses per second.

- Data Cleaning: Fix typos, standardize formats, and validate with USPS or SmartyStreets to achieve over 95% accuracy.

- Cost and Scalability: Solutions like BatchData handle millions of records daily, with costs ranging from $0.002 to $0.015 per address.

- Privacy Compliance: Anonymize data and follow regulations like CCPA and GDPR to avoid penalties.

- Integration: Use APIs or cloud platforms (e.g., S3) to connect geocoded data with your CRM or analytics tools.

Efficient workflows and scalable solutions ensure you can process large datasets quickly while maintaining accuracy and compliance.

Preparing Your Property Dataset

When processing a massive dataset of 1 million property addresses, the quality of your input data can make or break your results. In raw property datasets, 15–25% of records often have formatting issues, including typos, inconsistent abbreviations, and missing ZIP codes. These errors lead to geocoding failures and wasted time. For example, in Q1 2024, Zillow tackled 2.5 million property addresses, slashing geocoding failures from 28% to 4.2% by standardizing data to USPS formats and validating it with the SmartyStreets API. This effort cut processing time by 62% and improved both heatmap accuracy and valuation model accuracy, the latter by 15%.

Cleaning and Standardizing Addresses

Address cleaning is all about fixing common errors. Problems like inconsistent abbreviations – "Street" versus "St." – or typos like "Bird" instead of "Blvd" can wreak havoc on geocoding accuracy. Even state formats like "CA" versus "California" need to be consistent. By following USPS Publication 28 guidelines, you can push geocoding accuracy from 70% to over 95%, even with large datasets.

To get started, use Python's usaddress library to break addresses into components such as street number, street name, city, state, and ZIP code. Then apply USPS-standard abbreviations. For instance, "123 North Main Street Apartment 4B, Los Angeles California" becomes "123 N Main St Apt 4B, Los Angeles, CA". Validation then appends the ZIP code, giving "123 N Main St Apt 4B, Los Angeles, CA 90001". Tools like OpenRefine or Pandas can help with deduplication, using fuzzy matching at an 85% similarity threshold to catch near-duplicates like "123 Main St" and "123 Main Street".
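The sketch below shows the standardization and fuzzy-deduplication steps in plain Python. It is a minimal illustration that uses only the standard library and does not call usaddress. The abbreviation maps are a tiny, hypothetical subset of the USPS Publication 28 tables. `difflib.SequenceMatcher` stands in for whatever similarity metric your deduplication tool applies at the 85% threshold.

```python
import re
from difflib import SequenceMatcher

# Hypothetical, tiny subset of USPS Publication 28 abbreviations; a real
# pipeline would load the full suffix, directional, and unit tables.
STREET_ABBREVIATIONS = {
    "NORTH": "N", "SOUTH": "S", "EAST": "E", "WEST": "W",
    "STREET": "ST", "AVENUE": "AVE", "BOULEVARD": "BLVD", "ROAD": "RD",
    "DRIVE": "DR", "APARTMENT": "APT", "SUITE": "STE",
}
STATE_ABBREVIATIONS = {"CALIFORNIA": "CA", "TEXAS": "TX", "FLORIDA": "FL"}
ZIP_RE = re.compile(r"\d{5}(?:-\d{4})?")


def normalize_address(raw: str) -> str:
    """Return 'STREET LINE, CITY, ST ZIP' with USPS-style abbreviations."""
    # Uppercase, drop periods ("St." -> "ST"), and split on commas.
    parts = [p.strip() for p in raw.upper().replace(".", "").split(",") if p.strip()]
    if not parts:
        return ""
    # Abbreviate only the street line so city names like "North Las Vegas" survive.
    street = " ".join(STREET_ABBREVIATIONS.get(tok, tok) for tok in parts[0].split())
    locality = " ".join(parts[1:]).split()

    # Peel off a trailing ZIP code, then a trailing state name or code.
    zip_code = locality.pop() if locality and ZIP_RE.fullmatch(locality[-1]) else ""
    state = ""
    if locality and (locality[-1] in STATE_ABBREVIATIONS
                     or locality[-1] in STATE_ABBREVIATIONS.values()):
        token = locality.pop()
        state = STATE_ABBREVIATIONS.get(token, token)
    city = " ".join(locality)

    state_zip = " ".join(x for x in (state, zip_code) if x)
    return ", ".join(x for x in (street, city, state_zip) if x)


def find_near_duplicates(addresses: list[str], threshold: float = 0.85) -> list[tuple[int, int]]:
    """Return index pairs whose normalized forms are at least `threshold` similar.

    Pairwise comparison is O(n^2), so this suits a sample or a single ZIP block.
    """
    normalized = [normalize_address(a) for a in addresses]
    pairs = []
    for i in range(len(normalized)):
        for j in range(i + 1, len(normalized)):
            if SequenceMatcher(None, normalized[i], normalized[j]).ratio() >= threshold:
                pairs.append((i, j))
    return pairs


print(normalize_address("123 North Main Street Apartment 4B, Los Angeles California"))
# 123 N MAIN ST APT 4B, LOS ANGELES, CA
```

Pairwise comparison is quadratic, so it will not scale to a million records. At that size you would typically compare only within blocks, such as records sharing a ZIP code.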

Here’s a quick breakdown of the cleaning process:

| Cleaning Step | Common Issue | Tool/Method | Expected Improvement |
|---|---|---|---|
| Deduplication | 5–10% duplicate records | Pandas drop_duplicates | Reduces file size by 8% |
| Standardization | Inconsistent abbreviations (e.g., Blvd vs. BLVD) | USPS Pub 28 rules | +30% match rate |
| Validation | Invalid ZIP codes (12%) | USPS API | Invalid rate drops to <1% |
| Batching | API limits | Split by 10,000 rows | 100% success vs. 60% |

Once your addresses are standardized, the next step is validation and organization.

Validating and Organizing Data

After cleaning, validation ensures your addresses are accurate and ready for processing. Use the USPS Address Validation API or SmartyStreets for CASS certification checks, which can push accuracy above 95%. For additional accuracy, cross-reference addresses with county assessor records to identify outdated entries, such as those affected by property renovations.

Validation works best in two tiers: first, use regex to check basic formats for ZIP codes and state abbreviations. Then, validate addresses in batches of 1,000 using an API.
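Here is one way to sketch the two-tier approach. It assumes each record is a dict with hypothetical `zip` and `state` keys. Tier 1 is a cheap local regex and lookup check. Tier 2 sends the records that pass tier 1 to a validation API in batches of 1,000. `validate_batch` is a placeholder for your provider's client (USPS, SmartyStreets, or another service) and is not a real library call. The state list covers the 50 states plus DC but not the territories.

```python
import re
from typing import Callable, Iterator

ZIP_RE = re.compile(r"\d{5}(?:-\d{4})?")
# 50 states plus DC; territories such as PR or GU would need to be added.
STATE_CODES = frozenset(
    "AL AK AZ AR CA CO CT DE FL GA HI ID IL IN IA KS KY LA ME MD "
    "MA MI MN MS MO MT NE NV NH NJ NM NY NC ND OH OK OR PA RI SC "
    "SD TN TX UT VT VA WA WV WI WY DC".split()
)


def passes_format_check(record: dict) -> bool:
    """Tier 1: cheap local check of ZIP format and state abbreviation."""
    zip_code = str(record.get("zip") or "").strip()
    state = str(record.get("state") or "").strip().upper()
    return bool(ZIP_RE.fullmatch(zip_code)) and state in STATE_CODES


def chunks(items: list, size: int) -> Iterator[list]:
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def two_tier_validate(records: list[dict],
                      validate_batch: Callable[[list[dict]], list[dict]],
                      batch_size: int = 1000) -> tuple[list[dict], list[dict]]:
    """Tier 2: send only well-formed records to the (hypothetical) validation API."""
    well_formed, rejected = [], []
    for record in records:
        (well_formed if passes_format_check(record) else rejected).append(record)
    validated = []
    for batch in chunks(well_formed, batch_size):
        validated.extend(validate_batch(batch))
    return validated, rejected
```

Rejecting malformed records locally means you never pay API calls for addresses that cannot validate.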

Organizing your dataset is just as important. Split the data into batches of 10,000–50,000 records, grouped by geography (e.g., state or ZIP code prefixes). This helps you stay within API rate limits – Google Maps, for instance, allows 50 queries per second – and allows for parallel processing. For a dataset of 1 million properties, create 100 batches of 10,000 records each. Keep track of batch IDs and processing statuses in a master index file. Start with high-value commercial properties to gain early insights.
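The batching plan can be written as a small function. This sketch assumes each record has a hypothetical `zip` field and groups records by three-digit ZIP prefix. It returns the batches plus a master index that holds a batch ID, a record count, and a `pending` status for each batch. You can write the index to CSV and update it as jobs finish. Batches never span two geographic groups, so a 1-million-record file will usually yield somewhat more than 100 batches.

```python
import csv
from collections import defaultdict
from typing import Callable


def plan_batches(records: list[dict], batch_size: int = 10_000,
                 group_key: Callable[[dict], str] = lambda r: r["zip"][:3]):
    """Group records geographically, split each group into batches, and build a master index."""
    groups = defaultdict(list)
    for record in records:
        groups[group_key(record)].append(record)

    batches, index = {}, []
    for key in sorted(groups):
        rows = groups[key]
        for start in range(0, len(rows), batch_size):
            batch_id = f"{key}-{start // batch_size:04d}"
            batches[batch_id] = rows[start:start + batch_size]
            index.append({"batch_id": batch_id, "group": key,
                          "records": len(batches[batch_id]), "status": "pending"})
    return batches, index


def write_index(index: list[dict], path: str) -> None:
    """Persist the master index so batch statuses can be tracked and updated."""
    with open(path, "w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=["batch_id", "group", "records", "status"])
        writer.writeheader()
        writer.writerows(index)
```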

With clean, validated, and organized data, you’re ready to tackle privacy compliance.

Meeting Data Privacy Requirements

Once your dataset is accurate, protecting sensitive information becomes the next priority. Property data often includes personally identifiable information (PII) such as owner names and full addresses. Laws like the CCPA give California residents the right to opt out of the sale of their personal information and, in some cases (for example, consumers under 16), require opt-in consent. Penalties reach up to $7,500 per intentional violation, and real estate firms face heightened scrutiny because location data is sensitive.

To ensure compliance, pseudonymize owner names by hashing them with SHA-256 and retain only latitude and longitude coordinates after geocoding. Apply data minimization principles: process only the fields necessary for geocoding. Log all data access and delete raw address data within 24 hours. For properties owned by individuals in the EU, the GDPR may also apply. It requires a lawful basis for processing, such as explicit consent, and encourages safeguards like pseudonymization. Tools like Amazon Macie can automatically discover PII stored in S3 and flag buckets with weak access controls, which helps you maintain compliance.
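The sketch below combines pseudonymization with data minimization. It makes one deliberate swap: it uses keyed HMAC-SHA256 instead of a bare SHA-256 digest. Unkeyed hashes of names can be reversed by hashing lists of candidate names and comparing the results. The field names (`property_id`, `owner_name`, `latitude`, `longitude`) and the in-code key are hypothetical. In practice the key would come from a secrets manager.

```python
import hashlib
import hmac

# Fields kept after geocoding (hypothetical names); raw addresses and owner names are dropped.
KEEP_FIELDS = ("property_id", "latitude", "longitude")


def pseudonymize(value: str, key: bytes) -> str:
    """Keyed HMAC-SHA256 of a case- and whitespace-normalized value."""
    canonical = " ".join(value.upper().split())
    return hmac.new(key, canonical.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, key: bytes) -> dict:
    """Keep only the fields analysis needs, replacing the owner name with a pseudonym."""
    slim = {field: record[field] for field in KEEP_FIELDS if field in record}
    if record.get("owner_name"):
        slim["owner_hash"] = pseudonymize(record["owner_name"], key)
    return slim


SECRET_KEY = b"replace-with-a-key-from-your-secrets-manager"  # placeholder only
row = {"property_id": "P-1", "owner_name": "Jane  Doe", "address": "123 Main St",
       "latitude": 34.05, "longitude": -118.24}
slim = minimize(row, SECRET_KEY)
# slim now holds property_id, latitude, longitude, and owner_hash only
```

The same owner produces the same pseudonym across batches, so records can still be joined without storing the name itself.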

Choosing a Batch Geocoding Solution

Once your dataset is cleaned and validated, the next step is finding the right tool for high-volume, enterprise-level geocoding. Picking the wrong solution can lead to overspending or inaccurate location data. For a dataset with 1 million properties, you’ll need a platform designed for large-scale processing – not one meant for occasional lookups.

Features to Look For in Large-Scale Geocoding

A reliable geocoding tool must handle high-volume batch processing, supporting over 1 million requests per batch with minimal delays.

The tool should comply with USPS Publication 28 and have CASS certification to ensure 98–99% accuracy. Look for rooftop-level precision, which places coordinates within 10–50 feet of the actual location. It should also manage secondary address units (like "Apt 4B") and handle tricky cases, such as historical or rural addresses often found in real estate datasets.

Error reporting and fuzzy matching are essential for fixing incomplete or incorrect inputs. The output should include formats like GeoJSON or CSV with latitude, longitude, confidence scores, and standardized addresses.

Evaluating Scalability and Costs

Scalability is key – your chosen solution should grow with your needs. Metrics to assess include throughput (e.g., processing 1,000+ requests per second), queue management during peak loads, auto-scaling capabilities, and uptime SLAs of 99.9% or higher.

When it comes to costs, calculate the total expense as (per-address rate × volume) plus any setup or fixed fees. For instance, one provider might charge $0.005 per address for large batches, making a 1-million-property job cost $5,000. Another might offer $0.002 per address plus $500 in setup fees, totaling $2,500. Keep an eye out for volume discounts, hidden fees (like storage or retry charges), and the potential to negotiate custom rates. Some firms offer rates under $0.003 per address when you bundle extra services like reverse geocoding or ongoing updates.
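The cost formula is simple to script when you are comparing quotes. This sketch uses `Decimal` to avoid floating-point rounding on sub-cent rates. The provider names are hypothetical. The $500 setup fee for the second provider is inferred from its $2,500 total in the example above.

```python
from decimal import Decimal


def total_cost(rate_per_address: str, volume: int, fixed_fees: str = "0") -> Decimal:
    """Total = per-address rate x volume + setup/fixed fees (rates as strings to stay exact)."""
    return Decimal(rate_per_address) * volume + Decimal(fixed_fees)


# Hypothetical quotes mirroring the examples in the text: (rate, fixed fees).
quotes = {
    "provider_a": ("0.005", "0"),
    "provider_b": ("0.002", "500"),
}
volume = 1_000_000
costs = {name: total_cost(rate, volume, fees) for name, (rate, fees) in quotes.items()}
cheapest = min(costs, key=costs.get)
print(cheapest, costs[cheapest])  # provider_b 2500.000
```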

BatchData: Built for Enterprise-Scale Geocoding

BatchData is designed to handle massive real estate datasets, offering 99.9% uptime and the ability to process over 10 million addresses daily. The platform achieves a match accuracy of over 97% using USPS-certified validation and a proprietary index of more than 1 billion property records. With response times under 50 milliseconds per batch and 98.5% accuracy on non-standard addresses like "NW Corner 5th & Main", BatchData is built for speed and precision.

For a dataset of 1 million properties, BatchData charges a flat rate of approximately $0.015 per address, totaling around $15,000. The platform supports unlimited parallel jobs via API or bulk file uploads through S3, and its quality reports make it easy to identify and fix errors quickly.

"What used to take 30 minutes now takes 30 seconds. BatchData makes our platform superhuman." – Chris Finck, Director of Product Management

In one case study, a real estate investor geocoded 1.2 million listings with BatchData, cutting analysis time by 80% while achieving rooftop-level precision. BatchData integrates with CRM systems like Salesforce and provides access to over 240 property data points, making it a strong fit for enterprises that need more than coordinates. Its Enterprise 3M plan supports up to 3 million records per month, leaving room to scale as your property database grows.

Once you’ve chosen a scalable solution, make sure to integrate it into a well-organized geocoding pipeline for efficient data analysis.

Setting Up Your Geocoding Workflow

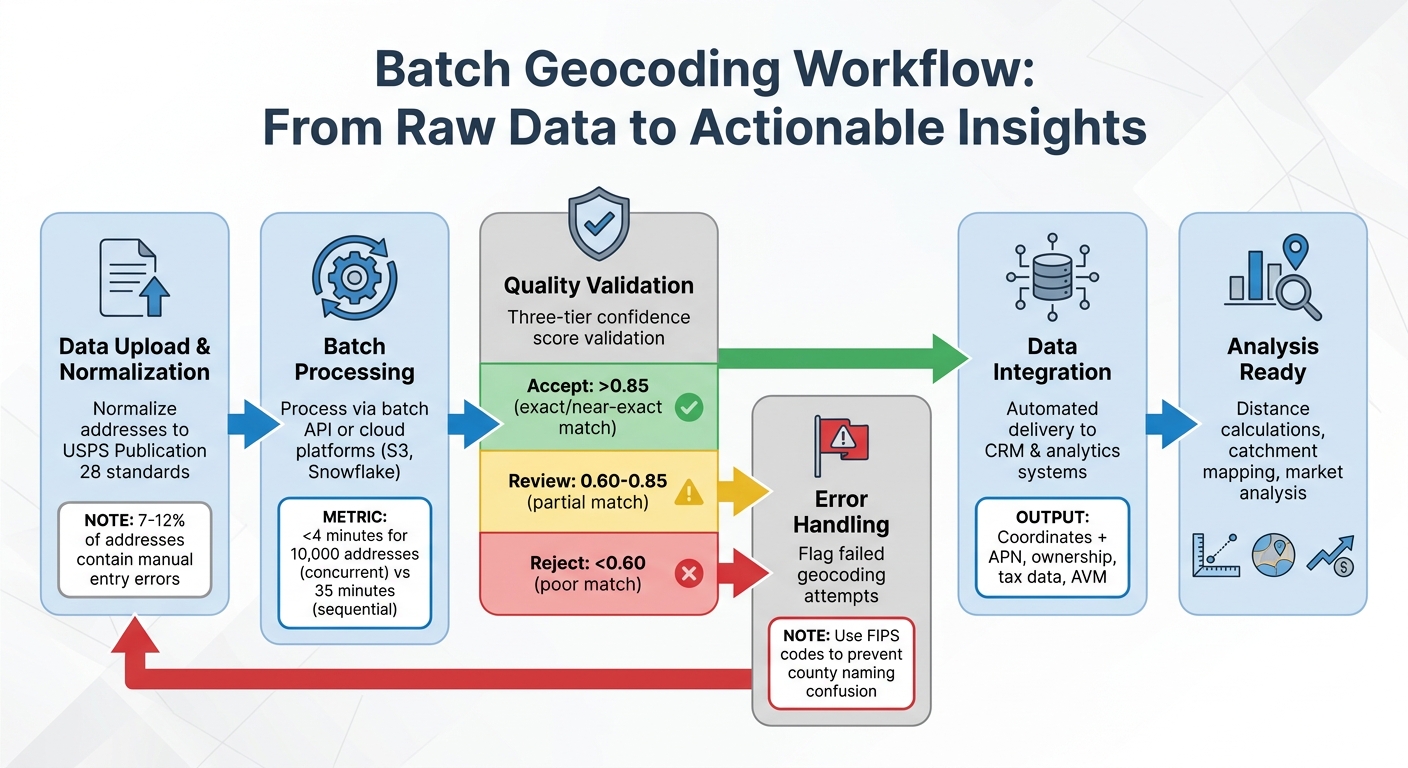

Batch Geocoding Workflow: From Raw Addresses to Actionable Property Data

A well-organized workflow can take raw property addresses and turn them into precise geographic coordinates through a fully automated process. The aim is to streamline the entire operation – from uploading your dataset to downloading verified results – while identifying and fixing errors before they compromise your analysis. This approach creates a seamless link between data preparation and analysis, ensuring every step supports your broader real estate objectives.

Steps in the Batch Processing Pipeline

The first step is to normalize addresses to USPS Publication 28 standards. This is crucial because manual entry errors are found in 7–12% of addresses. Proper normalization is the foundation for detecting and correcting errors effectively. For datasets exceeding 10,000 records, concurrent processing is essential to speed up operations. For example, processing 10,000 addresses concurrently takes less than 4 minutes, compared to 35 minutes when done sequentially.
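Concurrent processing can be sketched with a thread pool, because geocoding calls spend most of their time waiting on the network. `geocode_one` is a hypothetical callable that wraps your provider's single-address endpoint. The simple rate limiter spaces out request start times to stay under a per-second cap, such as the 50 queries per second mentioned earlier. Failed lookups return `None`, so one bad address does not abort the whole batch.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable, Optional


class RateLimiter:
    """Spaces request start times so the overall rate stays at or below `per_second`."""

    def __init__(self, per_second: float):
        self.interval = 1.0 / per_second
        self.lock = threading.Lock()
        self.next_slot = time.monotonic()

    def wait(self) -> None:
        # Reserve the next free slot under the lock, then sleep outside it.
        with self.lock:
            slot = max(time.monotonic(), self.next_slot)
            self.next_slot = slot + self.interval
        delay = slot - time.monotonic()
        if delay > 0:
            time.sleep(delay)


def geocode_concurrently(addresses: list[str],
                         geocode_one: Callable[[str], Any],
                         max_workers: int = 16,
                         per_second: float = 50.0) -> list[Optional[Any]]:
    """Geocode in parallel, preserving input order; failed lookups come back as None."""
    limiter = RateLimiter(per_second)

    def safe_lookup(address: str) -> Optional[Any]:
        limiter.wait()
        try:
            return geocode_one(address)
        except Exception:  # in production, log the error and queue the address for retry
            return None

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(safe_lookup, addresses))
```

`pool.map` returns results in input order, so each result lines up with its source row.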

Most enterprise workflows rely on batch API endpoints for periodic updates or use platforms like Amazon S3 or Snowflake for continuous, high-volume data delivery. Tools like BatchData make it easy to handle large-scale operations by supporting unlimited parallel jobs via API or bulk uploads through S3, eliminating the need to manage complex infrastructure.

Handling Errors and Checking Quality

Many geocoding APIs return a confidence score from 0.0 to 1.0 that indicates how closely the matched location corresponds to the input address. To ensure quality, use a three-tier validation system: accept scores above 0.85, flag scores from 0.60 to 0.85 for manual review, and reject anything below 0.60. Scores from 0.90 to 1.00 typically represent exact or near-exact matches. Scores from 0.60 to 0.84 often indicate partial matches, such as when the street is located but the house number is uncertain.
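The three-tier rule translates directly into code. This sketch assumes each result is a dict with a hypothetical `confidence` key on the 0.0 to 1.0 scale. A score of exactly 0.85 lands in the review tier, matching the "above 0.85" wording for acceptance.

```python
from collections import defaultdict


def triage(score: float) -> str:
    """Map a 0.0-1.0 confidence score to accept / review / reject."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"confidence out of range: {score}")
    if score > 0.85:
        return "accept"
    if score >= 0.60:
        return "review"
    return "reject"


def partition_results(results: list[dict]) -> dict[str, list[dict]]:
    """Group geocoding results by tier using a hypothetical 'confidence' key."""
    tiers = defaultdict(list)
    for result in results:
        tiers[triage(result["confidence"])].append(result)
    return dict(tiers)
```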

For real estate datasets, county FIPS codes (Federal Information Processing Standards) are invaluable. Each code has five digits: a two-digit state code followed by a three-digit county code. Because every US county has a unique code, they prevent confusion between counties that share a name and keep data joins accurate. Quality reports should highlight failed geocoding attempts so you can correct invalid addresses before integrating the data into your systems. Also note that B2B databases collected two years ago often have 10–15% outdated addresses due to business relocations or closures.

Once errors are addressed and data quality is confirmed, the refined dataset becomes a powerful tool for analysis.

Using Geocoded Data for Analysis

Geocoded property coordinates unlock a range of analytical possibilities. They allow for distance calculations to evaluate proximity, catchment area mapping to identify demand hotspots, and duplicate detection to clean up your data. Real estate professionals can use this information to map investment opportunities, analyze neighborhood market trends, and estimate drive times to essential amenities.
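Proximity analysis usually starts with the great-circle distance between two coordinate pairs. The haversine formula below treats the Earth as a sphere with a mean radius of about 6,371 km. That approximation typically introduces errors of up to roughly 0.5%, which is fine for screening properties near an amenity. The `lat`/`lon` keys are hypothetical field names.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius; spherical model


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    # min() guards against tiny floating-point overshoot above 1.0.
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, a)))


def within_radius(properties: list[dict], center: tuple[float, float], radius_km: float) -> list[dict]:
    """Return properties (hypothetical 'lat'/'lon' keys) within radius_km of center."""
    lat0, lon0 = center
    return [p for p in properties if haversine_km(lat0, lon0, p["lat"], p["lon"]) <= radius_km]
```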

Additionally, standardized, geocoded addresses can be linked to detailed property information, such as the APN (Assessor's Parcel Number), ownership records, tax assessments, liens, and automated valuation model (AVM) estimates. This depth of information turns geocoded addresses into actionable insights, supporting underwriting, market research, and portfolio management without juggling multiple data sources. By standardizing and verifying geocoded data, you strengthen every part of real estate analytics, from evaluating investments to understanding market dynamics.

Scaling to Process Millions of Properties

Infrastructure and Resource Planning

Scaling your geocoding process does more than speed up data conversion – it also improves the accuracy of property analyses, which are key to making informed investment decisions. The infrastructure you choose plays a major role in how quickly you can process large property datasets. For instance:

- High-tier API plans can handle up to 500,000 addresses daily, meaning you could process 1 million properties in just two days.

- Dedicated geocoding servers step it up, managing up to 2,000,000 lookups per day, which translates to 10 million properties in about five days.

- For enterprise-level needs, like processing 100 million or more records, cloud-based processing clusters are often the go-to solution.
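The day counts above come from plain ceiling division. The same arithmetic tells you how much daily capacity a deadline requires. A minimal sketch:

```python
def days_needed(records: int, daily_capacity: int) -> int:
    """Whole days required at a given daily throughput (ceiling division)."""
    return -(-records // daily_capacity)


def required_daily_capacity(records: int, deadline_days: int) -> int:
    """Minimum addresses per day needed to finish within the deadline."""
    return -(-records // deadline_days)


print(days_needed(1_000_000, 500_000))        # 2  (high-tier API plan)
print(days_needed(10_000_000, 2_000_000))     # 5  (dedicated server)
print(required_daily_capacity(1_000_000, 3))  # 333334
```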

Balancing processing time and cost is crucial, especially for real estate professionals working under tight deadlines.

Another factor to consider is data retention policies. Some geocoding providers limit how long you can store the coordinates in your internal systems. This could mean re-geocoding properties every time you need the data, which can be both time-consuming and costly. On the other hand, services that rely on open data, such as OpenStreetMap, often allow permanent storage of geocoded results. This flexibility lets you save and use the data in your CRM or analytics tools without restrictions. Before committing to a geocoding service, confirm that you can retain the coordinates you’ve paid for. Once you’ve ensured this, the next step is to integrate these results into your existing systems.

Connecting Geocoded Data to Your Systems

Integration is where geocoded coordinates shift from being static information to becoming actionable insights. For example, FIPS codes (Federal Information Processing Standards) help eliminate confusion and ensure clean data joins. This is especially important when you’re dealing with multiple counties that share the same name – like the 30+ "Washington Counties" scattered across the U.S. Using FIPS codes ensures accuracy and avoids jurisdictional errors that could derail legal processes.
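The sketch below shows a join on FIPS codes instead of county names. The two Washington County codes, 41067 for Oregon and 42125 for Pennsylvania, are real. The lookup table and the `county_fips` field name are illustrative. FIPS codes are stored as strings so leading zeros survive; Los Angeles County, California, for example, is 06037.

```python
def county_fips(state_code: str, county_code: str) -> str:
    """Build a five-digit county FIPS string: 2-digit state + 3-digit county, zero-padded."""
    return f"{int(state_code):02d}{int(county_code):03d}"


# Illustrative lookup keyed by FIPS; keying by "Washington County" alone would collide.
JURISDICTIONS = {
    "41067": "Washington County, OR",
    "42125": "Washington County, PA",
    "06037": "Los Angeles County, CA",
}


def attach_jurisdiction(properties: list[dict]) -> list[dict]:
    """Join each property to its jurisdiction by FIPS (hypothetical 'county_fips' field)."""
    return [{**p, "jurisdiction": JURISDICTIONS.get(p["county_fips"], "UNMATCHED")}
            for p in properties]


props = [
    {"id": "A", "county_name": "Washington County", "county_fips": county_fips("41", "67")},
    {"id": "B", "county_name": "Washington County", "county_fips": county_fips("42", "125")},
]
for row in attach_jurisdiction(props):
    print(row["id"], row["jurisdiction"])
# A Washington County, OR
# B Washington County, PA
```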

For enterprise workflows, automation is key. Instead of relying on manual file transfers, deliver geocoded data automatically through cloud storage or data warehouse platforms such as Amazon S3 or Snowflake. Services like BatchData support this pattern with unlimited parallel jobs via API or bulk uploads through S3. That simplifies infrastructure management and feeds results directly into your existing real estate systems.

Accuracy at the parcel level is another critical factor, especially for legal and financial decisions. Postal boundaries often don’t align with legal county lines, and relying solely on postal-based lookups can lead to serious errors, such as filing deeds or liens in the wrong jurisdiction. To avoid this, integration systems should prioritize parcel-level geometry or building centroids, which provide a more precise location. Additionally, filtering out non-physical addresses – like PO Boxes and Commercial Mail Receiving Agencies – during data ingestion saves API credits and keeps your CRM clean. Once your systems are updated with accurate geocoded data, the focus shifts to monitoring performance and making improvements.
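You can filter obvious PO Boxes at ingestion with a regular expression before spending any API credits. The pattern below is an illustrative heuristic, not a USPS rule. It cannot detect CMRAs, which are typically identified by a CMRA flag returned from CASS-certified validation services.

```python
import re

# Heuristic for common PO Box spellings: "PO Box", "P.O. Box", "POBox", "Post Office Box".
PO_BOX_RE = re.compile(r"\b(?:P\.?\s*O\.?|POST\s+OFFICE)\s*BOX\b", re.IGNORECASE)


def split_physical(addresses: list[str]) -> tuple[list[str], list[str]]:
    """Separate likely physical addresses from PO Boxes before spending API credits."""
    physical, po_boxes = [], []
    for address in addresses:
        (po_boxes if PO_BOX_RE.search(address) else physical).append(address)
    return physical, po_boxes
```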

Tracking Performance and Making Improvements

Keeping tabs on geocoding performance starts with match quality scoring. Confidence scores are a helpful tool for automated quality control, flagging records that need manual review without slowing down the entire process. For reference, high-quality addresses typically process in about 170–300 milliseconds. However, records missing crucial details, like a city or ZIP code, can take longer (up to 400–600 milliseconds) because they require more complex lookups.

For datasets larger than 20 million records, parallelization is essential. It prevents bottlenecks, avoids single points of failure, and helps overcome hardware limitations. Another useful strategy is checkpointing, which saves progress during large-scale operations. If your system crashes, you can pick up where you left off instead of starting over.
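Checkpointing can be as simple as saving the IDs of completed batches after each one finishes. This sketch writes a JSON checkpoint to a temporary file and then moves it into place with `os.replace`. On the same filesystem that swap is atomic, so a crash mid-write leaves the previous checkpoint intact. `process_batch` is a hypothetical callable that geocodes and stores one batch.

```python
import json
import os
from pathlib import Path
from typing import Callable


def load_checkpoint(path: Path) -> set[str]:
    """Return the set of batch IDs already completed (empty if no checkpoint yet)."""
    if path.exists():
        return set(json.loads(path.read_text()))
    return set()


def save_checkpoint(path: Path, done: set[str]) -> None:
    """Write to a temp file, then atomically swap it in so a crash never corrupts the checkpoint."""
    tmp = path.with_suffix(path.suffix + ".tmp")
    tmp.write_text(json.dumps(sorted(done)))
    os.replace(tmp, path)


def run_batches(batches: dict[str, list], process_batch: Callable[[str, list], None],
                checkpoint: Path) -> list[str]:
    """Process batches in ID order, skipping any recorded as done; return IDs run this time."""
    done = load_checkpoint(checkpoint)
    processed = []
    for batch_id in sorted(batches):
        if batch_id in done:
            continue
        process_batch(batch_id, batches[batch_id])
        done.add(batch_id)
        save_checkpoint(checkpoint, done)
        processed.append(batch_id)
    return processed
```

After a crash, calling `run_batches` again with the same checkpoint path resumes from the first unfinished batch.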

"Do yourself a favor and make several copies of the street database once your data is loaded and run the geocoding process on several machines with a subset of the input data. Don’t try to run it on just one machine or you will be waiting for days."

– Ragi Yaser Burhum, Founder, AmigoCloud

Finally, address normalization can make a big difference. By properly formatting address strings – adding commas between the street, city, and state – you simplify the parser’s task, which can significantly cut down processing time.

Conclusion

The strategies discussed in this article offer a clear path to efficient and scalable geocoding for real estate data. By automating workflows, batch geocoding transforms weeks of manual effort into hours of processing, turning raw addresses into precise parcel-level coordinates. This unlocks powerful spatial analytics, enabling smarter decisions – like identifying hidden investment opportunities or avoiding jurisdictional conflicts.

Scaling from 1,000 to 1,000,000 properties requires a thoughtful approach. Start by cleaning and standardizing addresses to achieve match rates of 95% or higher. Choose scalable solutions that manage large volumes without exceeding your budget, and build workflows with error-handling mechanisms to maintain data accuracy. High-quality APIs can process addresses for as little as $0.001 per record, making large-scale geocoding both cost-effective and efficient.

Integration ensures that geocoded data becomes actionable. Align datasets using standard identifiers, and automate data delivery through cloud storage to keep your CRM and analytics systems up-to-date without manual intervention. This approach not only keeps your data clean but also ensures seamless updates across platforms.

To maintain efficiency as you scale, track performance metrics like confidence scores to flag records needing review. Use parallel processing to handle massive batch jobs faster, and focus on address normalization to improve match rates and simplify workflows.

Begin with a small-scale audit to test your system and ensure it achieves match rates above 95% before expanding. With the right tools and infrastructure – like BatchData’s enterprise geocoding – you can process millions of records while maintaining the precision needed for critical, data-driven decisions.

FAQs

What’s the best way to handle addresses that won’t geocode?

To deal with addresses that fail to geocode, start by ensuring they are properly standardized and validated. Using USPS-compliant tools or real estate APIs can significantly improve accuracy. If problems continue, manual verification might be necessary, or you can rely on parcel-level data for more precise matching. For larger datasets, advanced APIs can streamline bulk processing, helping to identify and fix problematic addresses. This approach is particularly useful for situations like ZIP codes spanning multiple counties, ensuring dependable geocoding results.

How do I know if my geocoding results are accurate enough for underwriting?

To evaluate geocoding accuracy for underwriting, it’s crucial to ensure that the results align with actual property addresses and include exact geographic coordinates. Start by verifying addresses through USPS-compliant standardization and validation methods, which help refine the accuracy of the data. Using tools like address validation APIs, especially those designed for bulk processing, can streamline this process and uphold data quality. Finally, cross-check the geocoding results with reliable sources to confirm they meet the required underwriting standards for both precision and completeness.

What should I store after geocoding to stay compliant with CCPA and GDPR?

To comply with privacy regulations like CCPA and GDPR, store only the data your analysis needs. In practice that means geocoded coordinates and pseudonymized identifiers, such as hashed owner names, rather than raw owner names and full addresses. Back this up with signed data processing agreements with your vendors and encryption of the data at rest and in transit. These measures help ensure that sensitive information is handled responsibly and lawfully.