AVS is one of those acronyms that causes expensive mistakes because it means two very different things in the same property workflow. In payments, it helps verify billing addresses and reduce fraud. In real estate, it refers to automated valuation systems that estimate property value using structured data, market signals, and model logic.

If you're asking what AVS is, the right answer depends on context. For proptech teams, lenders, investors, and real estate platforms, the confusion usually comes down to two meanings that both revolve around one thing: data accuracy.

| AVS meaning | Full term | What it does | Why it matters |
|---|---|---|---|
| Payments AVS | Address Verification System | Checks billing address details against issuer records | Reduces fraud risk in card-not-present transactions |
| Real estate AVS | Automated Valuation System | Produces property value estimates using multiple data inputs | Supports underwriting, lead scoring, portfolio review, and pricing |
| Electronics AVS | Adaptive Voltage Scaling | Dynamically adjusts voltage based on hardware conditions | Real technology, but unrelated to proptech workflows |

The useful way to think about AVS is simple.

- In commerce, AVS validates identity clues tied to a payment method.

- In real estate, AVS validates pricing assumptions tied to a property record.

- In both cases, weak input data creates bad downstream decisions.

That’s where this gets practical. If your team handles property search, underwriting, owner outreach, offer generation, or servicing, you can’t afford acronym ambiguity. You need to know which AVS you’re talking about, how it works, and where it breaks.

What Does AVS Stand For?

AVS stands for either Address Verification System or Automated Valuation System, and the correct meaning depends entirely on the workflow.

That ambiguity sounds minor until a lender, product manager, fraud analyst, and data engineer all use the same acronym in the same meeting and mean different things. I've seen this create requirement errors fast. One team expects payment fraud controls. Another expects property value outputs.

The two AVS meanings that matter in real operations

In business systems, the first two definitions below are the ones worth knowing. The third is listed for completeness:

| Term | Full meaning | Operational domain | Primary output |
|---|---|---|---|
| AVS | Address Verification System | Payments, fraud prevention, subscriptions | Match code showing billing address quality |
| AVS | Automated Valuation System | Real estate, mortgage, proptech, investing | Property value estimate and related confidence logic |
| AVS | Adaptive Voltage Scaling | Semiconductor and chip design | Power optimization through real-time voltage control |

The third meaning, Adaptive Voltage Scaling, is real but not relevant to most property or transaction teams. It’s a closed-loop power minimization technique that can reduce energy consumption by up to 60% compared to fixed-voltage schemes, according to the Adaptive voltage scaling reference. If you're in proptech, you can usually ignore that definition unless you're working with embedded hardware.

Why the acronym confusion matters

The confusion isn't academic. It affects:

- Data contracts: A partner may say they provide AVS, but one side means billing verification while the other expects valuation data.

- API design: Poor naming in endpoints and field documentation causes preventable integration errors.

- Risk controls: Address verification manages transaction fraud risk. Valuation systems manage pricing, underwriting, and exposure risk. Those are not interchangeable problems.

Practical rule: Never label a field or service "AVS" in documentation without expanding the acronym the first time.

For real estate teams, the more important meaning is usually Automated Valuation System. But the payments definition still matters because many real estate businesses also process deposits, subscription payments, application fees, or tenant transactions.

When someone asks what AVS is, the right response is: “Which workflow do you mean, payments or property valuation?” That question alone prevents a lot of bad implementation work.

How Does the Address Verification Service (AVS) Work?

Address Verification Service (processors use this name interchangeably with Address Verification System) works by comparing the billing address details entered during a card transaction against the records held by the card issuer.

This matters most in card-not-present transactions, where the merchant can't physically inspect the card. The process runs automatically through payment gateways and processors.

What the system actually checks

The mechanics are straightforward. The gateway extracts the numeric portion of the billing address and postal code, then checks that information against the issuing bank’s file. The bank returns a response code that helps the merchant decide whether to approve, review, or decline the transaction, as described in Fraud.net’s explanation of the Address Verification System.
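To make the “numeric portion” idea concrete, here is a minimal Python sketch of the kind of reduction applied before the issuer comparison. It assumes US-style addresses and keeps only the leading house number and the five-digit ZIP base. The helper name `avs_fields` is hypothetical, and real gateways, card networks, and issuers each apply their own parsing rules.

```python
import re


def avs_fields(street: str, postal_code: str) -> tuple[str, str]:
    """Hypothetical helper: reduce a US-style billing address to AVS-relevant parts."""
    # Keep the leading house number, e.g. "123" from "123 Main St Apt 4".
    match = re.match(r"\s*(\d+)", street)
    house_number = match.group(1) if match else ""
    # Keep digits only, then the 5-digit ZIP base (drops a ZIP+4 suffix).
    zip5 = re.sub(r"\D", "", postal_code)[:5]
    return house_number, zip5


print(avs_fields("123 Main St Apt 4", "94105-1234"))  # ('123', '94105')
```

An address with no leading number, such as a PO box, comes back with an empty house number, which is one reason some transactions return weak or unavailable AVS results.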

That’s the operational point. AVS in payments doesn't prove the buyer is legitimate by itself. It gives you one signal.

Common AVS response codes

| Code | Meaning | Practical interpretation |
|---|---|---|
| Y | Full address and postal code match | Lowest apparent risk in the AVS response set |
| A | Address matches, postal code does not | Review in combination with other fraud signals |
| Z | Postal code matches, address does not | Partial match, not strong enough on its own |
| N | No match | Highest apparent risk in the AVS response set |
| U | Verification unavailable or unsupported | Limited value, route to fallback checks |
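If you act on these codes programmatically, a lookup keyed on the exact code keeps behavior explicit. The sketch below mirrors the table; the `AvsAction` labels and the `interpret_avs` helper are hypothetical, and code sets vary by card network and processor, so check your processor's documentation before relying on any mapping.

```python
from enum import Enum


class AvsAction(Enum):
    LOWEST_RISK = "lowest_risk"
    REVIEW = "review"
    FALLBACK = "fallback_checks"
    HIGH_RISK = "high_risk"


# Mirrors the response-code table; illustrative, not a network standard.
AVS_CODES = {
    "Y": ("Full address and postal code match", AvsAction.LOWEST_RISK),
    "A": ("Address matches, postal code does not", AvsAction.REVIEW),
    "Z": ("Postal code matches, address does not", AvsAction.REVIEW),
    "N": ("No match", AvsAction.HIGH_RISK),
    "U": ("Verification unavailable or unsupported", AvsAction.FALLBACK),
}


def interpret_avs(code: str) -> tuple[str, AvsAction]:
    """Return (meaning, action) for a raw AVS response code."""
    # Unrecognized codes go to fallback checks rather than being approved.
    return AVS_CODES.get(code.strip().upper(), ("Unrecognized code", AvsAction.FALLBACK))
```

Defaulting unknown codes to fallback checks, rather than to approval, keeps a processor-specific code from silently passing.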

What works and what doesn't

What works:

- Using AVS as one layer: It’s useful when combined with device checks, velocity rules, manual review thresholds, and CVV results.

- Applying rules by transaction type: Subscription setup, application fees, and remote payments often need stricter handling than low-risk repeat charges.

- Logging response codes cleanly: Fraud teams need the exact return code, not a vague “pass/fail” label.

What doesn't:

- Treating partial matches as automatic approval.

- Using AVS as identity proof. It only checks whether submitted address details align with issuer records.

- Ignoring unsupported cases. Some issuers or regions may not return meaningful AVS results.
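Those rules can be combined into a single screening step. This is a minimal sketch under hypothetical assumptions: the transaction-type labels, the `STRICT_TYPES` set, and the decision policy are illustrative, not a processor standard. Partial matches only pass when the CVV also matches and the flow is lower risk, and the exact AVS code stays in the returned record for the fraud team.

```python
from dataclasses import dataclass

# Hypothetical policy: these flows need a full AVS match to skip review.
STRICT_TYPES = {"application_fee", "subscription_setup"}


@dataclass
class PaymentSignals:
    avs_code: str          # exact issuer response code, e.g. "Y", "A", "Z", "N", "U"
    cvv_match: bool        # CVV result reported by the processor
    transaction_type: str  # hypothetical labels, e.g. "repeat_charge", "application_fee"


def screen_payment(s: PaymentSignals) -> dict:
    code = s.avs_code.strip().upper()
    if code == "N" and not s.cvv_match:
        decision = "decline"   # both address and CVV checks failed
    elif code == "Y" and s.cvv_match:
        decision = "approve"
    elif code in {"A", "Z"} and s.cvv_match and s.transaction_type not in STRICT_TYPES:
        decision = "approve"   # partial match passes only with CVV on lower-risk flows
    else:
        decision = "review"    # "U", unknown codes, strict flows, or mixed signals
    # Keep the exact AVS code in the record instead of a pass/fail label.
    return {"decision": decision, "avs_code": code,
            "cvv_match": s.cvv_match, "transaction_type": s.transaction_type}
```

In a fuller setup, device and velocity checks would be added as further inputs rather than by loosening the AVS branches.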

A payment AVS response is a screening signal, not a final verdict.

For real estate operators, this version of AVS often appears in rental platforms, brokerage payment flows, recurring SaaS billing, and consumer-facing real estate portals. Useful, yes. But it is not the AVS your data team means when discussing valuations, pricing, or underwriting.

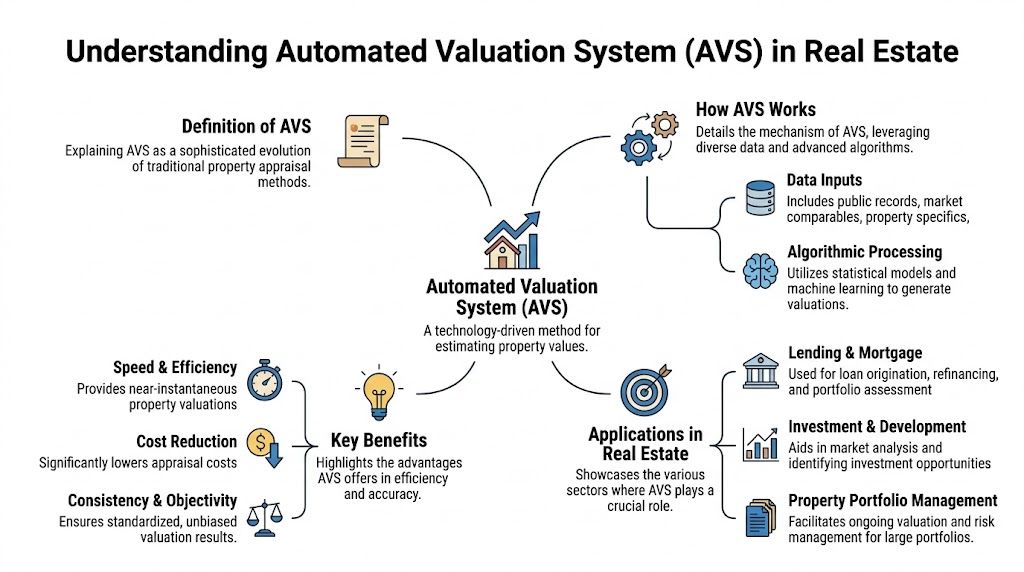

What Is an Automated Valuation System (AVS) in Real Estate?

An Automated Valuation System in real estate is a data-driven system that estimates property value by combining property records, market data, model logic, and quality controls.

That definition matters because many teams casually say “AVM” but require something broader. An Automated Valuation Model is usually the engine that produces an estimate. An Automated Valuation System is the operating framework around that estimate.

AVS is bigger than a single model

In practice, a real estate AVS often includes:

- Multiple model outputs instead of one single estimate

- Parcel and tax data to anchor property identity

- Comparable sales logic to reflect local market behavior

- Historical data to detect pattern shifts

- Confidence handling so users know when not to trust the estimate blindly

- Rules and fallbacks for thin-data or non-standard properties

That distinction matters. A standalone model can return a number. A usable system decides whether that number is actionable.
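One way to make “decides whether that number is actionable” concrete is to compare several model outputs and withhold the estimate when they disagree. A minimal sketch, assuming each model returns a point estimate; the 10% spread limit and two-model minimum are hypothetical thresholds, and median-plus-spread is only one of many possible consensus rules.

```python
from statistics import median
from typing import Optional


def consensus_value(estimates: dict[str, Optional[float]], max_spread: float = 0.10,
                    min_models: int = 2) -> dict:
    """Combine model estimates and decide whether the result is actionable."""
    # Ignore models that returned nothing or a non-positive value.
    values = [v for v in estimates.values() if v is not None and v > 0]
    if len(values) < min_models:
        return {"value": None, "actionable": False, "reason": "too few model outputs"}
    mid = median(values)
    # Relative disagreement between the highest and lowest estimate.
    spread = (max(values) - min(values)) / mid
    actionable = spread <= max_spread
    return {
        "value": mid,
        "spread": round(spread, 4),
        "actionable": actionable,
        "reason": "models agree" if actionable else "models disagree; route to review",
    }
```

The fallback case matters as much as the happy path: a thin-data property that only one model can score returns no value at all instead of a confident-looking number.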

Why parcel-linked data changes the outcome

Data quality drives valuation quality. If the system can't reliably match a property to the correct parcel, ownership trail, sale history, structure details, and local market context, the estimate becomes fragile.
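Entity resolution is where this fragility usually starts. A conservative matching rule returns a parcel only when exactly one record fits, and otherwise hands the property back for review instead of guessing. The sketch assumes addresses were already standardized upstream; the `Parcel` fields and the helper name are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Parcel:
    apn: str          # assessor parcel number
    address_key: str  # address already standardized upstream


def match_parcel(address_key: str, parcels: list[Parcel]) -> Optional[Parcel]:
    """Return the parcel only when exactly one distinct APN matches the address."""
    # Deduplicate by APN so repeated copies of one record don't look ambiguous.
    candidates = {p.apn: p for p in parcels if p.address_key == address_key}
    if len(candidates) != 1:
        return None  # zero or several candidates: route to review, don't guess
    return next(iter(candidates.values()))
```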

A useful benchmark appears in a 2025 study by the National Association of Realtors, which found that properties valued using high-quality Automated Valuation Systems in conjunction with parcel data sold, on average, for prices within 2.5% of the AVS estimate, compared with a 7% variance for properties valued with manual-only comparable analysis, according to the cited NAR AVS study.

That doesn't mean AVS replaces human judgment in every scenario. It means good systems, especially when connected to parcel-level data, can produce tighter guidance than manual-only workflows.
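The study's exact error metric isn't described here, but a common way to express “within X% of the estimate” is absolute percentage error, summarized across many sales with a median. A minimal sketch, measured relative to the estimate to match the phrasing above; many AVM testing programs divide by the sale price instead, so state which convention you use when comparing numbers. The function names are illustrative.

```python
from statistics import median


def abs_pct_error(sale_price: float, estimate: float) -> float:
    """Absolute percentage gap between sale price and estimate, relative to the estimate."""
    return abs(sale_price - estimate) * 100 / estimate


def median_ape(pairs: list[tuple[float, float]]) -> float:
    """Median absolute percentage error over (sale_price, estimate) pairs."""
    return median(abs_pct_error(sale, est) for sale, est in pairs)
```

For example, a home estimated at $400,000 that sells for $410,000 lands 2.5% from its estimate.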

AVM versus AVS in plain terms

| Category | AVM | AVS |
|---|---|---|
| Scope | Usually one valuation model | Broader system around valuation delivery |
| Inputs | Model-selected property and market data | Multiple datasets, validation layers, and workflow logic |
| Output | Single estimate | Estimate plus controls, confidence, and operational context |
| Best use | Quick estimate | Production valuation workflow |

For buyers or owners facing a property decision that still needs physical inspection or condition review, traditional survey work still has a role. That's why resources on in-depth RICS Homebuyers Surveys remain relevant alongside automated systems. AVS is strong on scale and consistency. A survey is strong on physical condition and defect visibility.

AVS vs AVM vs Address Standardization: A Critical Comparison

These terms solve different problems, and mixing them up leads to bad product decisions.

The shortest version is this: AVS in valuation estimates price, AVM generates a model output, payment AVS checks billing details, and address standardization cleans address format. None of those functions replaces the others.

AVS, AVM, and Address Standardization Compared

| Term | Primary Function | Core Use Case | Example Output |
|---|---|---|---|
| Automated Valuation System (AVS) | Produces operational property valuations using models plus workflow controls | Underwriting, portfolio monitoring, pricing, lead segmentation | Estimated property value with supporting confidence logic |
| Automated Valuation Model (AVM) | Calculates a value estimate from selected property and market inputs | Fast estimate generation, embedded pricing tools, scoring pipelines | Single model-driven value estimate |
| Address Verification Service (AVS) | Compares billing address details with issuer records | Payment fraud checks for card-not-present transactions | Match code such as Y, A, Z, or N |
| Address Standardization | Normalizes address format and structure | Record linkage, deduplication, mailing accuracy, geocoding prep | Cleaned and standardized postal address |
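To see what address standardization does in practice, here is a deliberately tiny Python sketch. The abbreviation table is a small hypothetical subset modeled on USPS Publication 28 style suffixes and directionals; a production system would use complete reference data or a dedicated standardization service, and this naive version would mangle edge cases such as a street literally named “North Street.”

```python
import re

# Small illustrative subset; real standardizers use full reference tables.
ABBREVIATIONS = {
    "STREET": "ST", "AVENUE": "AVE", "ROAD": "RD", "BOULEVARD": "BLVD",
    "DRIVE": "DR", "APARTMENT": "APT", "SUITE": "STE",
    "NORTH": "N", "SOUTH": "S", "EAST": "E", "WEST": "W",
}


def standardize_address(raw: str) -> str:
    # Uppercase and turn punctuation (except "#") into spaces.
    cleaned = re.sub(r"[^\w\s#]", " ", raw.upper())
    # split() also collapses repeated whitespace; then abbreviate known words.
    return " ".join(ABBREVIATIONS.get(token, token) for token in cleaned.split())


print(standardize_address("123 North Main Street, Apt. 4"))  # 123 N MAIN ST APT 4
```

The output is a consistent key for matching and deduplication. It says nothing about whether the address is deliverable, what the property is worth, or whether a card's billing details match.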

Why data teams need to keep all four concepts straight

A lender may use an AVM inside a broader AVS for pre-screening. That same lender may run address standardization before mailing disclosures or matching applicant records. Their payments stack may also use Address Verification Service for card transactions.

Those systems touch the same customer journey but solve different failure points.

- Underwriting teams care about valuation reliability.

- CRM and ops teams care about clean address keys for matching and outreach.

- Fraud teams care about payment verification.

- Analytics teams need consistent definitions across all of them.

If you're building location-aware valuation products, geospatial context becomes part of the difference between a shallow estimate and a more useful one. BatchData’s piece on how geospatial analysis enhances automated valuation models is worth reading for that reason.

When teams say AVS without context, someone usually builds the wrong thing.

The Role of AVS in Modern Real Estate Workflows

In real estate operations, Automated Valuation Systems matter because they turn raw property data into decisions teams can act on.

The most valuable AVS deployments aren't academic. They sit inside recurring workflows where speed, consistency, and coverage matter more than handcrafted review on every file.

Underwriting and risk triage

A lender doesn't need the same depth of review for every property. AVS helps sort files into buckets.

Some properties look straightforward. The identity is clear, recent transfer history aligns, and local comparables are coherent. Those can move faster. Others show thin data, unusual characteristics, or conflicting signals. Those need escalation.
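That bucketing can be expressed as a few explicit rules. A minimal sketch; the signal names and every threshold (three comps minimum, 25% comp price dispersion for escalation, a sale within five years for fast-tracking) are hypothetical and would need calibration against your own review outcomes.

```python
from dataclasses import dataclass


@dataclass
class PropertySignals:
    parcel_match_unique: bool    # identity resolved to exactly one parcel
    months_since_last_sale: int  # recency of transfer history
    comp_count: int              # nearby comparable sales found
    comp_price_cv: float         # coefficient of variation of comp prices (0.12 = 12%)


def triage(s: PropertySignals) -> str:
    """Sort a file into a review bucket using hypothetical thresholds."""
    if not s.parcel_match_unique or s.comp_count < 3:
        return "escalate"    # unclear identity or thin data
    if s.comp_price_cv > 0.25:
        return "escalate"    # conflicting market signals
    if s.comp_count >= 6 and s.comp_price_cv <= 0.10 and s.months_since_last_sale <= 60:
        return "fast_track"  # clear identity, coherent comps, recent transfer history
    return "standard"
```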

That triage model is especially useful in cross-border or non-routine borrowing scenarios, where property and borrower context can get messy fast. For example, guides on real estate mortgages for French expats show how transaction complexity rises when financing, geography, and documentation don't fit a simple domestic pattern.

Portfolio monitoring

Investors and servicers use AVS differently. They aren't just pricing one house. They're watching thousands of properties for movement.

A useful system lets them:

- Track value drift across a portfolio without manual review on each asset

- Spot outliers early when a property's signals break from neighborhood patterns

- Prioritize review queues for assets with unusual movement, refinance potential, or exposure concerns
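Outlier spotting can start as simply as comparing each property's value change with its neighborhood's median change. A minimal sketch, assuming you store successive estimates keyed by a property ID and know each property's neighborhood; the function name and the five-percentage-point tolerance are hypothetical.

```python
from statistics import median


def flag_outliers(prev: dict[str, float], curr: dict[str, float],
                  neighborhood: dict[str, str], tolerance: float = 0.05) -> list[str]:
    """Return IDs whose value change departs from their neighborhood's median change."""
    # Fractional change per property; IDs missing from curr are skipped here,
    # though a real pipeline should report them as stale.
    changes = {pid: curr[pid] / prev[pid] - 1
               for pid in prev if pid in curr and prev[pid] > 0}
    by_area: dict[str, list[float]] = {}
    for pid, change in changes.items():
        by_area.setdefault(neighborhood[pid], []).append(change)
    area_median = {area: median(vals) for area, vals in by_area.items()}
    return sorted(pid for pid, change in changes.items()
                  if abs(change - area_median[neighborhood[pid]]) > tolerance)
```

Comparing against the neighborhood rather than a fixed threshold keeps a broad market move from flooding the review queue while still surfacing the one asset that moved against its area.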

Recurring market context matters more than one-time appraisals. Trend visibility changes how operators allocate attention. Teams tracking broad conditions often pair valuation workflows with market monitoring, including resources like the Investor Pulse national report.

Marketing and owner intelligence

Marketing teams use AVS more subtly, but often just as aggressively. They care less about perfect appraisal logic and more about segment quality.

A home equity campaign, refinance push, or renovation offer gets better when the target list reflects current estimated value, ownership signals, and property profile. Poor valuation inputs don't just reduce model quality. They waste budget.
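A segment like that can be built from a handful of record fields. This sketch is hypothetical throughout: the `OwnerRecord` fields, the 0-to-1 confidence scale, and the default cutoffs (40% estimated equity, five years owned, single-family only, 0.7 confidence) are illustrative choices. Low-confidence valuations are dropped first, since those are the ones most likely to waste budget.

```python
from dataclasses import dataclass


@dataclass
class OwnerRecord:
    property_id: str
    estimated_value: float
    mortgage_balance: float      # 0 when no open loan is on record
    years_owned: float
    property_type: str           # e.g. "single_family", "condo"
    valuation_confidence: float  # 0-1 scale, hypothetical


def equity_segment(records: list[OwnerRecord], min_equity_pct: float = 0.40,
                   min_years: float = 5, types: frozenset = frozenset({"single_family"}),
                   min_confidence: float = 0.7) -> list[str]:
    """Return property IDs that fit a home-equity campaign profile."""
    selected = []
    for r in records:
        # Drop weak valuations first: they are the main source of wasted spend.
        if r.valuation_confidence < min_confidence or r.estimated_value <= 0:
            continue
        equity_pct = (r.estimated_value - r.mortgage_balance) / r.estimated_value
        if (equity_pct >= min_equity_pct and r.years_owned >= min_years
                and r.property_type in types):
            selected.append(r.property_id)
    return selected
```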

What strong workflow design looks like

| Workflow | How AVS helps | Main risk if poorly implemented |
|---|---|---|
| Underwriting | Speeds initial valuation review | Overconfidence in low-quality estimates |
| Portfolio monitoring | Flags pricing movement across many assets | Missing drift due to stale data |
| Marketing | Improves segmentation and audience relevance | Bad targeting from weak property linkage |
| Servicing | Supports equity and collateral visibility | Wrong borrower decisions from outdated values |

How BatchData Customers Leverage AVS for Growth

Valuation workflows rarely fail because a number is missing. They fail because teams trust the wrong number, at the wrong time, with the wrong match logic behind it.

That’s the hard truth in production systems. A clean demo estimate is easy. A dependable valuation workflow is not.

What smart operators do differently

A mortgage originator might use an API-driven valuation check during pre-qualification, then route edge cases into manual review. The gain isn't just speed. It's consistency in deciding which files deserve human time first.

An investor marketplace might score incoming opportunities against current value signals, ownership history, and nearby transaction patterns. The point isn't to automate judgment away. The point is to avoid having analysts spend hours on properties that should've been screened out in seconds.

A home services marketer might combine equity-oriented valuation signals with ownership duration and property type to build a more relevant campaign list. That works when the source data is fresh and linked correctly. It falls apart when address matching is loose or parcel relationships are noisy.

The common assumption that causes trouble

A lot of teams assume one AVM feed equals a complete AVS strategy. It doesn't.

Here’s what usually goes wrong:

- Single-model dependence: One estimate becomes a false source of truth.

- No fallback logic: The system returns a number even when the property is a poor fit for automated valuation.

- Weak entity resolution: The model may be fine, but the property match is wrong.

- Data drift tolerance: Teams keep shipping scores from stale records because the pipeline still “works.”

One practical option in this category is the BatchData Investor Pulse reports, which real estate teams can use alongside valuation workflows to add broader market context. That doesn't replace internal decisioning. It helps frame it.

Operational advice: If your AVS can't tell you when not to trust the estimate, you don't have a mature valuation workflow. You have a number generator.

What actually creates growth

The growth angle isn't magic. It comes from tighter execution.

- Better lead selection produces cleaner outreach lists.

- Better valuation gating reduces wasted analyst effort.

- Better monitoring catches portfolio changes earlier.

- Better matching discipline prevents record-level errors from spreading across teams.

In other words, growth comes from fewer bad decisions at scale. That's what a real AVS should improve.

Common AVS Implementation Pitfalls and How to Avoid Them

Most AVS failures come from governance problems, not model math.

Teams usually buy or build valuation capability with good intentions, then underinvest in the controls that make outputs reliable. The result is predictable. The system looks advanced, but the workflow around it is fragile.

Pitfall one: Over-reliance on a single model

One model will always have blind spots. Property heterogeneity is real. Rural parcels, unique homes, mixed-use assets, and thin-comp areas expose it quickly.

How to avoid it: use a system that can compare multiple signals, apply fallbacks, and surface uncertainty clearly. If every property gets treated as equally machine-readable, your review process is already compromised.

Pitfall two: Ignoring data freshness

A valuation pipeline is only as current as its underlying records. Sale history, listing status, tax updates, permit activity, and neighborhood comparables all age differently.

How to avoid it: define freshness standards by workflow. Marketing might tolerate more lag than underwriting. Portfolio monitoring needs scheduled refresh logic and exception handling, not ad hoc updates.
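Freshness standards are easier to enforce when they live in configuration rather than in people's heads. A minimal sketch, assuming each source field carries a timezone-aware last-updated timestamp; the workflow names, the `MAX_AGE` limits, and the `stale_fields` helper are hypothetical examples of marketing tolerating more lag than underwriting.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical maximum record age per workflow; underwriting is strictest.
MAX_AGE = {
    "underwriting": timedelta(days=30),
    "portfolio_monitoring": timedelta(days=90),
    "marketing": timedelta(days=180),
}


def stale_fields(last_updated: dict[str, datetime], workflow: str,
                 now: Optional[datetime] = None) -> list[str]:
    """Return source fields whose last update exceeds the workflow's limit."""
    if now is None:
        now = datetime.now(timezone.utc)
    limit = MAX_AGE[workflow]  # an unknown workflow raises KeyError on purpose
    return sorted(field for field, ts in last_updated.items() if now - ts > limit)
```

The same record can be fresh enough for a mailing list and too stale for a credit decision, which is why the check takes the workflow as an input.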

Pitfall three: Misreading confidence

Confidence indicators are often misunderstood. Teams either ignore them or use them as decoration in dashboards.

That’s a mistake. Low-confidence outputs should change business behavior.

- Route them to review.

- Suppress them from consumer-facing use.

- Combine them with additional property signals before action.
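Those three behaviors can be encoded directly as routing rules. A minimal sketch, assuming a confidence score on a 0-to-1 scale (vendors expose confidence in different forms, such as letter grades or a forecast standard deviation); the `review_below` and `trusted_at` thresholds and the action labels are hypothetical.

```python
def route_by_confidence(confidence: float, consumer_facing: bool,
                        review_below: float = 0.6, trusted_at: float = 0.8) -> str:
    """Map a 0-1 valuation confidence score to a workflow action (hypothetical thresholds)."""
    if consumer_facing:
        # Consumer-facing surfaces only show values that clear the higher bar.
        return "show" if confidence >= trusted_at else "suppress"
    if confidence < review_below:
        return "review"
    if confidence < trusted_at:
        # Middle band: usable only alongside other property signals.
        return "combine_with_other_signals"
    return "use"
```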

Pitfall four: Treating AVS as a complete property truth layer

Valuation is only one lens. It doesn't tell you everything about encumbrances, legal complexity, occupancy, or condition.

How to avoid it: connect AVS with surrounding datasets such as liens, mortgage details, listing changes, permit records, and ownership events. A better estimate with no collateral context still leaves risk on the table.
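Linking datasets can start with a simple join on a shared parcel identifier. This sketch computes combined loan-to-value from open lien balances and returns `None` when either side is missing, so an absent lien record is never read as “free and clear.” The dict-based inputs, the `combined_ltv` name, and the parcel IDs are hypothetical stand-ins for real lien and valuation feeds.

```python
from typing import Optional


def combined_ltv(parcel_id: str, valuations: dict[str, float],
                 liens: dict[str, list[float]]) -> Optional[float]:
    """Total open lien balances divided by estimated value, or None if data is missing."""
    value = valuations.get(parcel_id)
    balances = liens.get(parcel_id)  # None means no lien data, not "no liens"
    if not value or balances is None:
        return None
    return sum(balances) / value
```

An empty lien list means the data was checked and no open liens were found, which is different from having no lien data at all.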

Good AVS implementation is less about asking, “What value did the model return?” and more about asking, “Should this workflow trust that value right now?”

A simple implementation checklist

| Pitfall | What it looks like | Better approach |
|---|---|---|
| Single-model dependence | Same logic for every property | Use layered models and exception paths |
| Stale source data | Outputs look current but underlying facts are old | Set refresh rules by use case |
| Confidence misuse | Low- and high-confidence values treated the same | Tie confidence to routing decisions |
| Isolated valuation stack | No links to ownership, debt, or event data | Build a connected property data workflow |

Frequently Asked Questions About AVS

The biggest AVS questions usually come down to cost, build complexity, and data sourcing.

Is a full AVS more expensive than a basic AVM API?

Yes, usually. A basic AVM API typically returns a value estimate. A fuller AVS adds surrounding infrastructure such as data normalization, matching logic, confidence handling, fallbacks, auditability, and integration into underwriting or portfolio workflows.

The fundamental cost question isn't “Which is cheaper?” It's “What failure can your operation afford?” If your use case is lightweight lead scoring, a simpler setup may be enough. If you're making lending or pricing decisions, thin infrastructure gets expensive in other ways.

Can a company build its own AVS in-house?

Yes, but it’s harder than many teams expect. The hard part usually isn't writing model code. The hard part is acquiring clean source data, resolving entities across datasets, handling exceptions, and keeping everything current.

An internal build can make sense when a company has:

- Strong data engineering capacity

- Reliable access to public, market, and parcel-level datasets

- Clear governance on refresh cycles and QA

- A use case large enough to justify ongoing maintenance

Teams often underestimate long-term upkeep. They prototype a valuation tool, then discover that sustained record quality is the actual product.

How is AVS data sourced and kept current?

A real estate AVS is usually built from multiple feeds, not one source. Common ingredients include public records, tax and assessment data, parcel files, transaction history, listings, and local market comparables.

Keeping that current requires repeatable ingestion, quality checks, identity resolution, and conflict handling when sources disagree. “Updated” isn't enough as a standard. Teams need to know what changed, when, and whether the update affects the workflow they care about.
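Conflict handling and change tracking can be sketched together: pick a winning value by source precedence, then emit a change record only when the value actually moves. The source names, the `SOURCE_PRIORITY` ranking, and the `FieldValue` shape are hypothetical, and real precedence rules often differ by field. The function expects at least one candidate value.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical precedence: lower number wins when sources disagree.
SOURCE_PRIORITY = {"county_recorder": 0, "tax_assessor": 1, "listing_feed": 2}


@dataclass(frozen=True)
class FieldValue:
    source: str
    value: object
    as_of: date


def reconcile(field: str, candidates: list[FieldValue],
              current: object) -> tuple[object, Optional[dict]]:
    """Pick one value for a field and describe what changed, if anything."""
    # Prefer the most trusted source; break ties with the most recent record.
    winner = min(candidates,
                 key=lambda c: (SOURCE_PRIORITY.get(c.source, 99), -c.as_of.toordinal()))
    if winner.value == current:
        return current, None  # nothing changed, so nothing to log
    change = {
        "field": field, "old": current, "new": winner.value,
        "source": winner.source, "as_of": winner.as_of.isoformat(),
        # Record whether sources disagreed, so reviewers can audit the choice.
        "conflict": any(c.value != winner.value for c in candidates),
    }
    return winner.value, change
```

The change record answers the questions above directly: what changed, when, and from which source, which lets downstream workflows decide whether the update matters to them.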

Does AVS replace appraisals or surveys?

No. AVS is best for scale, consistency, and fast decision support. Human appraisal or survey work still matters when physical condition, legal complexity, or edge-case property characteristics need direct review.

What's the simplest way to avoid AVS confusion internally?

Expand the acronym every time it first appears in a document, API spec, or meeting note. Use “Address Verification System” for payments and “Automated Valuation System” for property valuation. Don't assume context will save you. It often doesn't.

If your team needs property valuations, ownership intelligence, and connected real estate data in one workflow, BatchData is one platform to evaluate. It provides property records, valuation-related data, owner contact enrichment, and delivery options for API and bulk use cases, which is the kind of infrastructure teams need when AVS has to work in production rather than just in a demo.