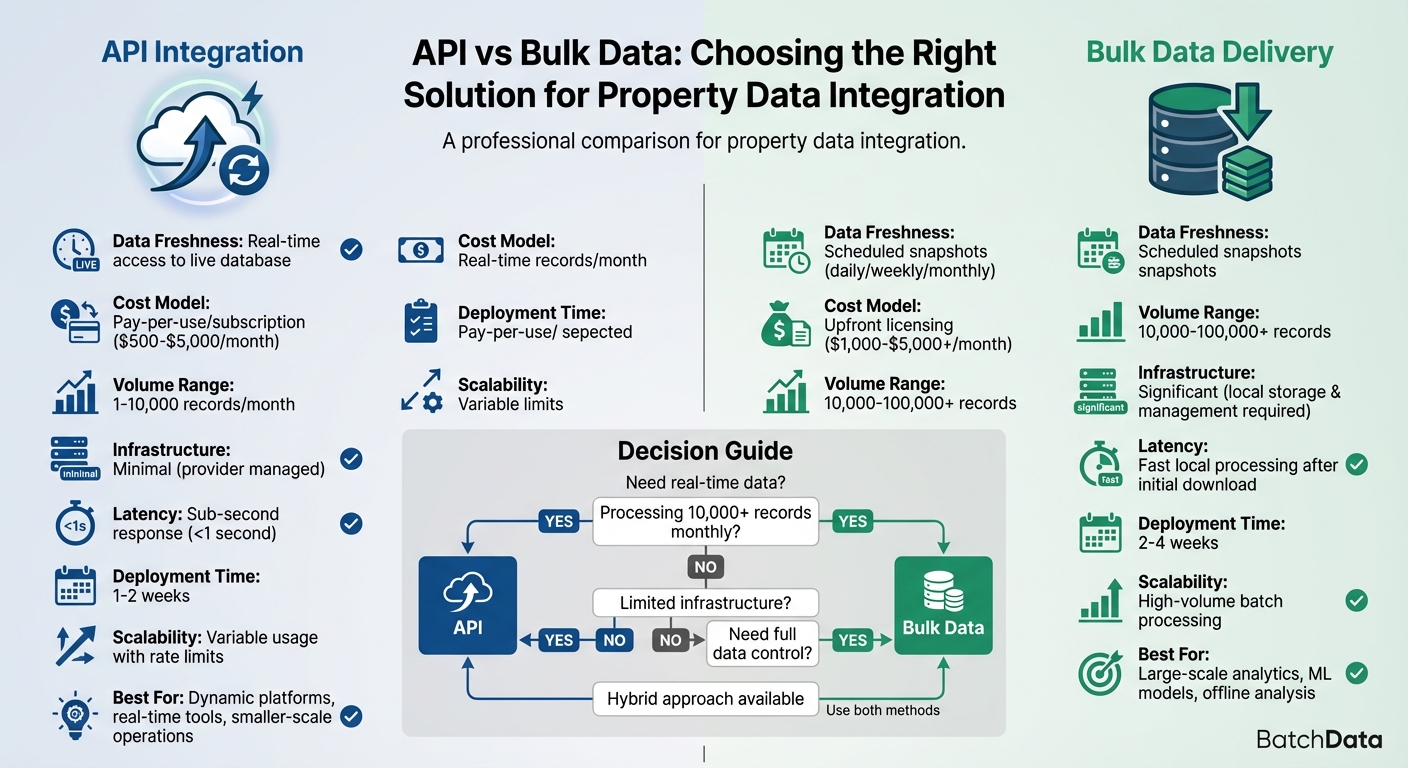

When deciding how to integrate property data into your business, you have two primary options: APIs or bulk data delivery. Each serves different needs:

- APIs: Provide real-time access to live data. Ideal for platforms requiring up-to-date information, such as real estate apps or tools handling smaller, frequent queries (1–10,000 records/month). APIs eliminate the need for local storage and offer flexible, on-demand access.

- Bulk Data: Delivers large datasets for offline use. Best for businesses managing high volumes (10,000+ records) or conducting extensive analysis, such as machine learning or portfolio monitoring. Bulk data offers full control but requires robust infrastructure for storage and processing.

Quick Overview:

- APIs: Real-time, pay-per-use, minimal infrastructure, suitable for dynamic and smaller-scale operations.

- Bulk Data: Scheduled updates, upfront licensing, requires storage, better for large-scale or offline tasks.

Quick Comparison:

| Factor | API Integration | Bulk Data Delivery |
|---|---|---|
| Data Freshness | Real-time | Scheduled (daily, weekly, etc.) |
| Cost Model | Pay-per-use/subscription | Upfront licensing |
| Volume Range | 1–10,000 records/month | 10,000+ records |
| Infrastructure | Minimal (provider managed) | Significant (local management) |
| Latency | Instant responses | Fast local processing (post-download) |

Your choice depends on your data needs, scale, and operational priorities. Combining both methods can also balance cost and agility.

API vs Bulk Data Delivery Comparison for Property Data Integration

What is API-Driven Property Data Integration?

API-driven property data integration connects your application directly to a live database through an Application Programming Interface (API). A single HTTPS request returns structured property records in near real time, so you can automate retrieval instead of relying on static spreadsheets or manual lookups. Responses typically arrive in milliseconds, which makes the approach practical for interactive real estate tools.
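As a rough illustration of what one such request involves, the sketch below builds an authenticated HTTPS call and parses a JSON response using only the Python standard library. The endpoint URL, the bearer-token header, and the field names (`owner`, `lastSalePrice`, `assessedValue`) are placeholders invented for this example, not any provider's documented API.

```python
import json
import urllib.request

# Hypothetical endpoint, for illustration only.
API_URL = "https://api.example.com/v1/property/lookup"


def build_lookup_request(api_key: str, address: str) -> urllib.request.Request:
    """Build (but do not send) an authenticated JSON POST request."""
    body = json.dumps({"address": address}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        method="POST",
        headers={
            # Bearer-token auth is an assumption; check your provider's docs.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
            "Accept": "application/json",
        },
    )


def parse_property(payload: str) -> dict:
    """Pull a few (hypothetical) fields out of a JSON response body."""
    record = json.loads(payload)
    return {
        "owner": record.get("owner"),
        "last_sale_price": record.get("lastSalePrice"),
        "assessed_value": record.get("assessedValue"),
    }


# Sending the request needs a real endpoint and key, for example:
# with urllib.request.urlopen(build_lookup_request(key, addr), timeout=10) as resp:
#     profile = parse_property(resp.read().decode("utf-8"))
```

Keeping request construction separate from response parsing makes both halves easy to test without network access.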

Developers use API keys for authentication, send RESTful requests, and receive JSON responses containing details like ownership, sales history, assessments, mortgages, and property attributes. What once took 30 minutes can now be done in seconds thanks to real estate APIs. This streamlined process supports agile real estate platforms and aligns with operational efficiency goals.

"What used to take 30 minutes now takes 30 seconds. BatchData makes our platform superhuman."

Key Features of APIs

APIs offer real-time access to continuously updated property data, so you’re always working with the latest information. The provider takes care of data storage and updates, eliminating the need for large local infrastructure. With low latency, APIs deliver quick responses, making them ideal for tasks like generating instant property reports or validating titles in live workflows. Leading providers often guarantee a 99.99% uptime Service Level Agreement (SLA), ensuring reliable access.

Pay-per-use pricing makes APIs accessible for businesses of all sizes. This credit-based model allows startups or companies with fluctuating demand to scale usage without committing to bulk licensing. For instance, a proptech startup building an automated valuation tool can start small and expand as needed. Webhooks add another layer of efficiency by enabling asynchronous processing, so your application gets instant updates when property statuses change – no need for constant polling.
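To make the webhook idea concrete, here is a minimal sketch of the receiving side. It assumes the provider signs each payload with an HMAC-SHA256 of the request body, which is a common convention but not something this article confirms for any particular provider. The payload fields `propertyId` and `status` are likewise hypothetical.

```python
import hashlib
import hmac
import json
from typing import Optional


def sign(secret: bytes, body: bytes) -> str:
    """Hex HMAC-SHA256 of the raw body (assumed signing scheme)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(secret: bytes, body: bytes, signature: str) -> Optional[dict]:
    """Return the status change, or None if the signature does not match."""
    if not hmac.compare_digest(sign(secret, body), signature):
        return None
    event = json.loads(body)
    # Field names are placeholders for this example.
    return {"property_id": event["propertyId"], "status": event["status"]}
```

Your web framework would pass the raw request body and the signature header to `handle_webhook`, then act on the returned status change instead of polling for it.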

When to Use APIs

APIs are perfect for dynamic platforms that need up-to-date property details, such as SaaS tools, real estate portals, or fintech applications requiring real-time data for decision-making. They’re especially useful for handling frequent, smaller-scale queries – typically 1 to 10,000 documents per month – where filtered, specific data is needed immediately. For example, a title insurance company might use APIs to validate boundaries during title searches, while real estate platforms could use webhooks to generate property maps for new listings.

APIs are also ideal for quick prototyping, as they eliminate the need to build extensive backend infrastructure. They’re great for scenarios where geographic needs vary across states or counties or when immediate data enrichment is required – like turning an address into a detailed property profile with ownership, equity, and physical characteristics. Basic API setups can be implemented in 1–2 weeks, though you’ll need developer resources for HTTPS support, error handling, and storage. This approach is particularly effective when data freshness is more critical than processing large volumes all at once.
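The error handling mentioned above often comes down to retrying transient failures such as timeouts or rate-limit responses. The following is a small sketch of retry with exponential backoff. `TransientError` is a hypothetical exception your own client code would raise, and the delay values are arbitrary starting points rather than provider recommendations.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, HTTP 429/5xx)."""


def call_with_retry(
    fetch: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fetch(), retrying transient failures with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            # Wait base_delay, then double it after each failed attempt.
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter lets tests record the delays instead of actually waiting.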

What is Bulk Data Delivery?

Bulk data delivery offers a way to access large, structured datasets for offline processing and analysis, giving you complete control over the data. Unlike API-based methods, where you retrieve specific records through individual calls, bulk delivery provides entire datasets – sometimes encompassing millions of properties – all at once. This approach is perfect for organizations needing high-speed, offline processing for tasks like storage, analysis, or product development. By hosting the data internally, you avoid reliance on external systems or API uptime, ensuring greater flexibility and control over your workflows.

The process typically involves four steps. First, define your requirements, including the geographic area (nationwide, state, or county), property types, and the data fields you need. Next, choose a file format that works for your systems. Then, select a secure delivery method, such as AWS S3, SFTP, Snowflake Data Sharing, BigQuery, Databricks, or Google Drive. Finally, load and integrate the data into your systems for analysis.
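The final load-and-integrate step can be sketched as follows, assuming a CSV delivery and a local SQLite database. The column names (`property_id`, `address`, `zip`, `assessed_value`) are invented for this example, and a real delivery with hundreds of attributes would need a fuller schema and likely a heavier database.

```python
import csv
import io
import sqlite3


def load_properties(csv_file, conn: sqlite3.Connection) -> int:
    """Load a delivered CSV into a local table and index it by ZIP code."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS properties ("
        "property_id TEXT PRIMARY KEY, address TEXT, zip TEXT, "
        "assessed_value REAL)"
    )
    rows = [
        (r["property_id"], r["address"], r["zip"], float(r["assessed_value"]))
        for r in csv.DictReader(csv_file)
    ]
    # INSERT OR REPLACE lets a later scheduled delivery overwrite old rows.
    conn.executemany("INSERT OR REPLACE INTO properties VALUES (?, ?, ?, ?)", rows)
    conn.execute("CREATE INDEX IF NOT EXISTS idx_zip ON properties (zip)")
    conn.commit()
    return len(rows)


# Tiny in-memory stand-in for a delivered file.
sample = io.StringIO(
    "property_id,address,zip,assessed_value\n"
    "p1,1 Main St,85001,250000\n"
    "p2,2 Oak Ave,85001,310000\n"
    "p3,3 Elm Rd,85002,190000\n"
)
conn = sqlite3.connect(":memory:")
loaded = load_properties(sample, conn)

# Once loaded, aggregations run locally with no per-query cost.
avg_by_zip = conn.execute(
    "SELECT zip, AVG(assessed_value) FROM properties GROUP BY zip ORDER BY zip"
).fetchall()
```

In practice you would pass an open file handle for the downloaded file rather than the in-memory sample.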

"Bulk data licensing provides you with large-scale, structured property datasets delivered directly to your systems. It’s the most efficient way to acquire comprehensive real estate intelligence for analysis, product development, and strategic decision-making." – BatchData

These datasets include over 700 property attributes per record, covering everything from property characteristics (like Assessor data) to transaction history, real-time listings, pre-foreclosure details, and demographic insights. BatchData collects this information from more than 3,200 sources, ensuring data reliability and reducing the risk of local outages.

Key Features of Bulk Data Delivery

Bulk data licensing gives you ownership of entire datasets without incremental query costs, making it ideal for high-volume tasks such as portfolio monitoring or running automated valuation models. This eliminates per-record expenses, delivering cost efficiency at scale.

Processing data locally allows for rapid analytics, bypassing API rate limits and network delays. This enables you to perform complex data operations like joins, aggregations, and transformations much faster.

For organizations analyzing millions of records regularly, bulk delivery is often more economical than using transactional APIs. Pricing depends on factors like geographic scope, the data elements included, the total number of properties, and update frequency.

Bulk delivery also offers flexibility. You can index, augment, and maintain your datasets independently, with features like historical snapshots for trend analysis and scheduled updates to keep your systems current. These capabilities are essential for building proprietary archives or meeting compliance requirements.

When to Use Bulk Data Delivery

Bulk data delivery is best suited for large-scale analytics, machine learning projects, and data science initiatives. Whether you’re training AI models, studying market trends, or conducting in-depth analyses, bulk datasets provide the comprehensive access needed for these tasks.

For example:

- PropTech platforms use bulk data for tools like iBuyer models.

- Mortgage lenders rely on it for risk assessments.

- Insurance companies use it to improve quoting accuracy.

- Government agencies apply it for urban planning.

Organizations managing large-scale data enrichment or portfolio monitoring also benefit significantly. Instead of making millions of API calls to enrich records, you can locally match your existing data against a complete property dataset. This approach is particularly effective for capital markets firms managing investment portfolios, marketing agencies identifying target audiences, or any business appending property data to large customer databases.
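Local matching of this kind can be as simple as building an in-memory index over the bulk dataset and looking up each customer record against it. The sketch below keys on a crudely normalized address string. The field names are hypothetical, and production matching usually needs proper address standardization rather than this simplification.

```python
import re
from typing import Dict, List


def normalize_address(address: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", address.lower())
    return " ".join(cleaned.split())


def enrich(customers: List[Dict], properties: List[Dict]) -> List[Dict]:
    """Append the property owner to each customer whose address matches."""
    # One pass to index the bulk dataset, then constant-time lookups.
    index = {normalize_address(p["address"]): p for p in properties}
    enriched = []
    for customer in customers:
        match = index.get(normalize_address(customer["address"]))
        enriched.append({**customer, "owner": match["owner"] if match else None})
    return enriched
```

Because the index is built once, enriching a large customer file costs one dictionary lookup per record instead of one API call per record.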

If your business requires offline analysis, complex queries across multiple data points, or integration with proprietary datasets, bulk delivery is the right choice. It eliminates reliance on external systems while giving you full control. Before committing, request a sample dataset tailored to your geographic needs to check for compatibility and quality. Ensure your team has the technical expertise to handle, secure, and maintain these large datasets effectively.

Key Differences Between API and Bulk Data

API integration provides real-time data, while bulk delivery offers scheduled snapshots. These differences play a crucial role in determining which method aligns best with your business goals.

When it comes to data freshness, APIs stand out by pulling from live production databases, ensuring continuous updates and real-time access to the latest property information. In contrast, bulk data operates on a schedule – daily, weekly, monthly, or even quarterly. However, modern cloud-sharing tools like Snowflake can help bridge this gap by delivering more frequent updates.

Infrastructure requirements also differ significantly. The right real estate API takes the burden of data storage and management off your plate, allowing your team to focus on building features rather than maintaining datasets. On the other hand, bulk data demands robust internal infrastructure for storage, indexing, and version control. While this requires more effort, it also gives you complete control over how the data is processed and queried.

Cost structures are another point of divergence. APIs typically operate on a pay-per-call or subscription model. For example, BatchData offers API plans ranging from $500/month for 20,000 records (Lite tier) to $5,000/month for 750,000 records (Scale tier), with custom enterprise pricing for higher volumes. Bulk data, however, involves upfront licensing fees based on factors like geographic scope and update frequency. Once acquired, bulk data incurs no additional costs for internal queries, making it a more economical choice for organizations handling millions of records.
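A quick way to compare the two models is to convert each to a cost per record. The sketch below uses the two API tier figures quoted above. The bulk license fee is a made-up number included only to show the break-even arithmetic, since actual licensing depends on scope and update frequency.

```python
def cost_per_record(monthly_fee: float, records: int) -> float:
    """Effective price of one record at a given tier."""
    return monthly_fee / records


def break_even_records(license_fee: float, api_cost_per_record: float) -> float:
    """Monthly volume at which a flat license matches per-record API spend."""
    return license_fee / api_cost_per_record


lite = cost_per_record(500, 20_000)      # 0.025 dollars per record
scale = cost_per_record(5_000, 750_000)  # about 0.0067 dollars per record

# Hypothetical monthly license fee, for illustration only.
HYPOTHETICAL_LICENSE_FEE = 10_000
threshold = break_even_records(HYPOTHETICAL_LICENSE_FEE, lite)
```

Volumes above `threshold` favor the flat license under these assumptions; below it, per-record pricing is cheaper.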

Latency and processing speed reveal a trade-off between immediate access and local control. APIs excel at delivering sub-second responses for on-demand queries, making them ideal for real-time tools like property search functions or instant valuation calculators. Bulk data, while requiring time for initial downloads, enables rapid local queries once integrated into your systems. This eliminates network delays and API rate limits, making bulk delivery a better fit for high-volume processing.

Comparison Table: API vs Bulk Data

| Factor | API-Driven Integration | Bulk Data Delivery |
|---|---|---|
| Data Freshness | Real-time access to live database | Scheduled snapshots (daily, weekly, monthly, or quarterly) |
| Infrastructure Needs | Minimal – provider manages data | Significant – requires storage, processing, maintenance |
| Cost Model | Pay-per-use or subscription tiers | Upfront licensing with unlimited internal use |
| Latency | Sub-second response for queries | Slower initial delivery, fast local processing |
| Ideal Volume Range | 1 to 10,000 documents/month | 10,000 to 100,000+ documents |
| Scalability | Variable usage with rate limits | High-volume batch processing |
| Customization | Standardized queries, flexible endpoints | Full control for merging, enriching, custom architecture |
| Deployment Time | 1–2 weeks for basic integration | 2–4 weeks including discovery |

These distinctions set the stage for evaluating the advantages and limitations of each approach in the next section.

Pros and Cons of Each Approach

When deciding between API integration and bulk data delivery, it’s essential to weigh the strengths and weaknesses of each. These approaches cater to different needs, so aligning them with your business goals is key.

API integration is a great fit when you need real-time access to constantly updated property data. It eliminates the hassle of maintaining large backend systems since the provider takes care of updates and infrastructure. This lets your team focus on building features rather than managing data pipelines. But there’s a catch – costs can climb as usage grows, and you’re tied to the provider’s rate limits and uptime. As Mike Breed puts it:

"APIs deliver agility; bulk licensing delivers sovereignty."

On the other hand, bulk data delivery gives you full control over the dataset. You can run as many local queries as you want without worrying about network delays or rate limits. This makes it ideal for tasks like training machine learning models or conducting deep portfolio analyses. However, this freedom comes with responsibilities – you’ll need to handle storage, security, and regular updates yourself.

Pricing models also differ significantly. APIs typically charge based on usage, which can add up quickly as demand increases. Bulk data, while requiring a hefty upfront licensing fee, doesn’t have incremental costs per query. For businesses processing millions of records monthly, bulk data often becomes the more cost-effective choice despite the initial investment.

Detailed Comparison Table: Pros and Cons

Here’s a side-by-side look at the benefits and challenges of each approach:

| Approach | Advantages | Disadvantages |
|---|---|---|
| API Integration | • Real-time access to updated data • Minimal backend maintenance • Provider handles updates • Flexible, on-demand queries | • Costs increase with higher usage • Dependent on provider’s uptime and rate limits • Limited data customization |
| Bulk Data Delivery | • Full control and ownership of data • No per-query costs • Ideal for large-scale processing • Operates offline in secure environments | • Requires managing storage, security, and updates • Data can become outdated between refreshes • High initial investment |

Each option has its place, depending on whether you prioritize real-time agility or complete data control.

How BatchData Supports Both Approaches

Businesses have different needs when it comes to accessing property data, so BatchData offers two delivery options: real-time API integration and bulk data delivery. Companies can choose the method, or combination of methods, that best fits their requirements.

API Solutions from BatchData

BatchData’s RESTful JSON API is built for speed and reliability, delivering responses in less than a second and maintaining an impressive 99.99% uptime SLA. It’s perfect for businesses that need instant access to property data. With the API, you can search through over 155 million U.S. properties, verify phone numbers, and enrich contact data effortlessly.

Common applications include powering property search platforms, automating lead validation, and performing skip tracing. Chris Finck, Director of Product Management, highlights its impact:

"What used to take 30 minutes now takes 30 seconds. BatchData makes our platform superhuman."

The API follows a pay-as-you-go pricing model, making it a practical choice for companies handling 1,000 to 10,000 queries per month. Integration is straightforward, usually taking just 1–2 weeks, thanks to clear developer documentation.

Bulk Data Solutions from BatchData

For businesses needing to process massive amounts of data, BatchData provides bulk delivery options through AWS S3, SFTP, or Snowflake Data Sharing. This approach is ideal for large-scale tasks like enriching property portfolios, conducting nationwide market analyses, or handling skip tracing for over 35,000 records at once.

Data is delivered in formats like CSV, JSON, or Parquet, with professional services available to help integrate and enhance the data. Pricing is tiered based on volume:

- Growth: $1,000/month for 100,000 records

- Professional: $2,500/month for 300,000 records

- Scale: $5,000/month for 750,000 records

- Enterprise: Custom pricing for larger datasets

Many businesses find value in combining both methods. For example, they might use bulk delivery to load 50,000 to 200,000 property records for offline analysis, then rely on APIs for real-time updates, such as verifying phone numbers or tracking new leads. This hybrid approach balances cost efficiency with the need for up-to-date information, giving businesses the tools to manage large datasets while staying agile for time-sensitive tasks.
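One way to picture the hybrid pattern is a local store seeded from a bulk load that falls back to a live API call when a record is missing or too old. The class below is a sketch under that assumption. `fetch_live` stands in for whatever API client you use, and the staleness window is something you would tune to your bulk refresh schedule.

```python
from datetime import datetime, timedelta
from typing import Callable, Dict, Tuple


class HybridStore:
    """Serve records from a bulk load, refreshing stale ones through an API."""

    def __init__(
        self,
        bulk_records: Dict[str, dict],
        loaded_at: datetime,
        fetch_live: Callable[[str], dict],
        max_age: timedelta,
    ) -> None:
        # Every bulk record starts with the bulk delivery timestamp.
        self._records: Dict[str, Tuple[dict, datetime]] = {
            pid: (rec, loaded_at) for pid, rec in bulk_records.items()
        }
        self._fetch_live = fetch_live
        self._max_age = max_age

    def get(self, property_id: str, now: datetime) -> dict:
        cached = self._records.get(property_id)
        if cached is not None and now - cached[1] <= self._max_age:
            return cached[0]  # fresh enough: no API call, no per-record cost
        fresh = self._fetch_live(property_id)  # missing or stale: go live
        self._records[property_id] = (fresh, now)
        return fresh
```

With this shape, API spend scales with the number of stale or missing lookups rather than with total query volume.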

Conclusion: Making the Right Choice

Deciding between API-driven integration and bulk data delivery comes down to what fits your business needs best. If your focus is on customer-facing platforms needing instant property valuations or real-time lead verification, APIs provide fast and adaptable data access. On the other hand, if your organization is managing large-scale portfolio analyses, training machine learning models, or processing thousands of records at a time, bulk data offers the control and cost efficiency you need.

Start with volume. APIs are ideal for lower data volumes, while bulk processing shines when handling large-scale batch operations. For instance, a title company processing 50–100 documents daily would find API integration more suitable for their workflow.

Sometimes, a hybrid approach is the smartest choice. You can load your initial dataset using bulk delivery and then rely on APIs for real-time updates, phone verification, or dynamic user queries. This approach combines upfront cost savings with the flexibility to handle time-sensitive tasks. Ultimately, aligning your integration method with your business’s data volume and speed requirements is key.

APIs typically require 1–2 weeks for integration and need minimal infrastructure, whereas bulk data demands robust storage and indexing but allows for full data ownership. BatchData caters to these varying needs by offering both real-time APIs and bulk delivery options through AWS S3, SFTP, or Snowflake. These solutions ensure your choice matches your data volume, update frequency, and technical capabilities.

FAQs

How do I estimate my monthly record volume?

To figure out your monthly record volume, start by evaluating your data requirements – how many property or contact records do you anticipate processing each month? BatchData provides several plan options to suit different needs. For example, the Growth plan supports up to 100,000 records per month, while the Scale plan allows for up to 750,000 records monthly. If your needs exceed these limits, custom solutions are also available. This way, you can choose a plan that matches your usage and keeps up with your business’s expansion.
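A back-of-the-envelope estimate might look like the sketch below, which multiplies a daily record count by working days and picks the smallest tier that covers it. The tier limits and fees are the figures quoted in this article and should be treated as illustrative rather than current pricing. The 22-working-day month is an assumption.

```python
# (name, monthly record limit, monthly fee in USD) as quoted in this article.
PLANS = [
    ("Lite", 20_000, 500),
    ("Growth", 100_000, 1_000),
    ("Professional", 300_000, 2_500),
    ("Scale", 750_000, 5_000),
]


def estimate_monthly_volume(records_per_day: int, working_days: int = 22) -> int:
    """Rough monthly volume; 22 working days per month is an assumption."""
    return records_per_day * working_days


def pick_plan(monthly_records: int) -> str:
    """Smallest quoted tier that covers the volume, else custom pricing."""
    for name, limit, _fee in PLANS:
        if monthly_records <= limit:
            return name
    return "Enterprise"


# Example: a team processing up to 100 records per working day.
volume = estimate_monthly_volume(100)
plan = pick_plan(volume)
```

Re-running the estimate with your peak month, not your average, gives a safer upper bound.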

Can I use both bulk data and an API together?

Yes. A common pattern is to load your initial dataset through bulk delivery for offline analysis, then use the API for real-time updates, phone verification, or dynamic user queries. Bulk delivery handles the large-scale transfer efficiently, while the API keeps time-sensitive records current between scheduled refreshes.

What infrastructure do I need for bulk data?

To make the most of bulk data delivery, you’ll need a few essentials in place. First, ensure you have a secure server to store those large datasets safely. Pair that with high-bandwidth internet to handle the heavy lifting of data transfers efficiently. You’ll also want reliable tools to help you manage and process the incoming data effectively.

Don’t overlook compliance – stick to data sharing standards like RESO (commonly used in real estate). And, of course, prioritize strong security measures to protect sensitive information. Lastly, plan carefully for how the data will be stored, integrated, and processed within your current systems. This preparation is key to making bulk data delivery work seamlessly for you.