Real estate professionals often struggle with fragmented data from sources like MLS listings, county records, and tax assessors. Without proper normalization, errors can exceed 10%, leading to inaccurate valuations, wasted marketing spend, and poor investment decisions. Data normalization solves this by standardizing diverse datasets, improving accuracy to 99% and speeding up processes by 12x.

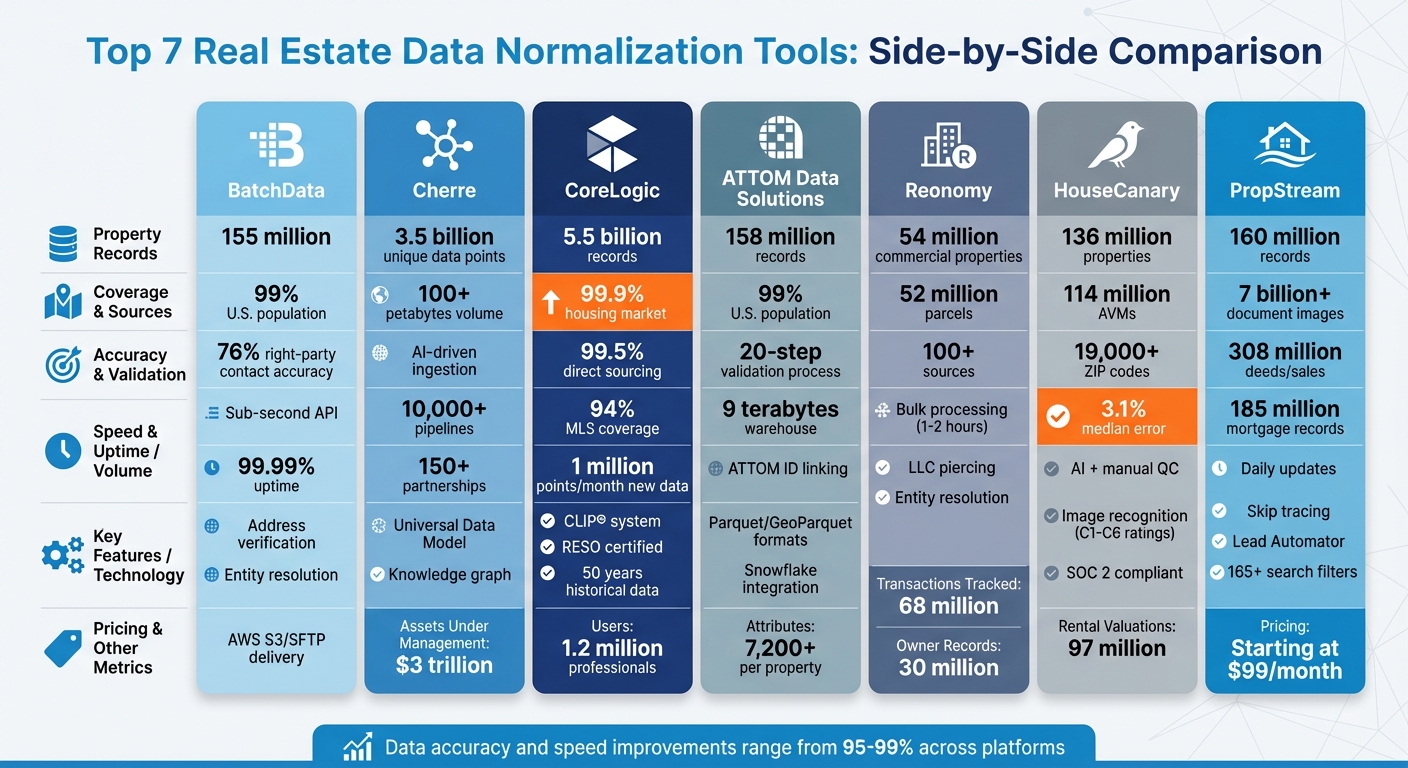

Here’s a quick overview of the top tools for real estate data normalization:

- BatchData: Focuses on address verification, entity resolution, and scalable APIs with sub-second response times.

- Cherre: Uses a Universal Data Model and AI-driven ingestion for seamless data alignment.

- CoreLogic: Offers advanced valuation models and a vast database covering 99.9% of the U.S. housing market.

- ATTOM Data Solutions: Provides 158 million property records with robust APIs and bulk data options.

- Reonomy: Specializes in commercial real estate with LLC piercing and entity resolution.

- HouseCanary: Combines AI with manual checks for precise valuations across 136 million properties.

- PropStream: Delivers investor-focused analytics with predictive insights and daily updates.

Each tool excels in different areas, from API integrations to handling massive datasets. For businesses, choosing the right tool depends on data volume, integration needs, and budget. Whether you’re managing a few thousand records or millions, these tools can transform raw data into actionable insights.

Comparison of Top 7 Real Estate Data Normalization Tools: Features, Coverage, and Capabilities

1. BatchData

Data Normalization and Standardization Capabilities

BatchData processes and standardizes information from over 3,200 sources, ensuring consistent and dependable real estate records. This system tackles fragmented county records through address verification, which eliminates duplicates and invalid data, and entity resolution, which connects corporate-owned properties to their true individual owners behind LLCs. Even during county-level outages, BatchData maintains seamless data flow, ensuring uninterrupted access.
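Address verification and de-duplication both depend on a canonical address key. The sketch below is a toy illustration, not BatchData's implementation. It uppercases the address, strips punctuation, abbreviates a few street suffixes and directionals in the style of USPS Publication 28, and keeps the first record for each key. The abbreviation tables and the field names (`street`, `city`, `state`, `zip`) are assumptions. Production systems validate against USPS reference data instead.

```python
import re

# Small, hypothetical subset of USPS-style abbreviations; real tables are far larger.
SUFFIXES = {"STREET": "ST", "AVENUE": "AVE", "ROAD": "RD", "DRIVE": "DR",
            "BOULEVARD": "BLVD", "LANE": "LN", "COURT": "CT"}
DIRECTIONS = {"NORTH": "N", "SOUTH": "S", "EAST": "E", "WEST": "W"}


def address_key(street: str, city: str, state: str, zip_code: str) -> str:
    """Build a canonical key from raw address parts (simplified: every token is mapped)."""
    # Uppercase, turn punctuation into spaces, then split on whitespace.
    tokens = re.sub(r"[^\w\s]", " ", street.upper()).split()
    tokens = [SUFFIXES.get(t, DIRECTIONS.get(t, t)) for t in tokens]
    zip5 = re.sub(r"\D", "", zip_code)[:5]  # keep only the 5-digit ZIP
    return "|".join([" ".join(tokens), city.strip().upper(), state.strip().upper(), zip5])


def dedupe(records: list[dict]) -> list[dict]:
    """Keep the first record seen for each canonical address key."""
    seen: set[str] = set()
    unique = []
    for rec in records:
        key = address_key(rec["street"], rec["city"], rec["state"], rec["zip"])
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique
```

With this sketch, "123 North Main Street, Austin, tx 78701-1234" and "123 N. Main St., austin, TX 78701" both collapse to the key `123 N MAIN ST|AUSTIN|TX|78701`, so only one record survives.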

To ensure accuracy, BatchData combines automated testing with human reviews for complex cases. It boasts a 76% right-party accuracy rate for contact data – three times higher than the industry average – helping reduce unnecessary marketing costs. These normalization processes integrate directly into BatchData’s APIs, offering fast and reliable access to standardized data.

Integration with Real Estate Systems and APIs

BatchData offers a RESTful JSON API with sub-second response times and 99.99% uptime. It supports real-time lookups and bulk data delivery through platforms like AWS S3, SFTP, or Snowflake Data Sharing, providing formats such as CSV and Parquet. This flexibility allows businesses to use real-time tools for customer-facing applications or bulk data for tasks like machine learning model training and database updates.

Key tools include an Address Verification API, which cleans mailing lists at the point of entry to avoid wasted postage, and a Geocoding API, which links verified coordinates to property records for precise mapping. The "Smart Search" feature further enhances functionality by generating lead lists based on financial distress or specific property traits. For custom needs, BatchData offers professional services to build tailored data pipelines and assist with migration from legacy systems.

Scalability for Large Datasets

With a database of over 155 million U.S. property records and more than 1,000 attributes per property, BatchData covers 99% of the U.S. population. Its infrastructure supports billions of data points, using high-performance delivery methods like Snowflake Data Sharing and AWS S3 to bypass traditional file transfer bottlenecks. This scalability ensures consistent performance, even for enterprise-level operations. For businesses with existing datasets, the "Match & Append" service enriches internal records with BatchData’s standardized attributes, improving overall data quality.

Additional Features for Data Enrichment and Analytics

BatchData goes beyond standardization by enriching property records with contact details (phone numbers and emails), skip tracing, and real-time phone validation, including scrubbing for DNC lists and litigators. Its property records offer insights into mortgages, liens, pre-foreclosure statuses, building permits (solar, pool, roof), and historical tax assessments. Additionally, demographic data such as household income and lifestyle preferences enhance marketing and investment strategies. The platform stays up-to-date with daily updates from county recorders and assessors, ensuring its data remains current and reliable across all 3,200+ sources.

2. Cherre

Data Normalization and Standardization Capabilities

Cherre uses a Universal Data Model (UDM) to bring together and standardize diverse real estate data, making it ready for analysis. Its AI-driven ingestion system automates the collection, routing, and validation of data from internal financial and operational systems, as well as third-party sources.

The platform includes a centralized data mapping engine that manages chart of accounts and entity mappings. It also features geo-spatial normalization, ensuring that location-based attributes are standardized. Cherre’s knowledge graph links and resolves entities across 3.5 billion unique data points. To ensure data accuracy, the system automatically applies both preconfigured and custom validation rules during ingestion.

Integration with Real Estate Systems and APIs

Cherre simplifies integration with its library of pre-configured connectors for popular industry applications and data providers. These connectors eliminate the need for custom API coding, enabling faster integration. The platform bridges operational systems with enterprise data infrastructure, supporting both structured and unstructured data ingestion. Validated data can then flow seamlessly into any cloud environment, BI platform, or AI solution through API and semantic model sharing.

"Together, Cherre and Snowflake eliminate the traditional divide between operational real estate systems and enterprise data infrastructure."

Cherre also ensures secure and efficient data management with its Data Review and Approval Workflow, which uses role-based security to certify data before it’s sent to downstream warehouses or BI tools. The Data Submission Portal further streamlines the process by allowing trusted service providers to manage property and provider data directly. These features make integration straightforward and scalable.

Scalability for Large Datasets

Cherre is built to handle massive datasets. It currently supports data covering over $3 trillion in Assets Under Management (AUM) and more than 100 petabytes of unique data. Its infrastructure runs over 10,000 complex data pipelines and handles more than 20,000 ongoing schema changes. It also draws on more than 150 data and application partnerships and integrates with 200+ data lakes and warehouses.

The platform’s UDM, knowledge graph, and AI-driven workflows ensure that data remains consistent even as volumes and speeds increase. This infrastructure adapts to growing demands, maintaining reliable performance as organizations expand their data operations across diverse sources and formats.

3. CoreLogic

Data Normalization and Standardization Capabilities

CoreLogic’s Trestle platform delivers MLS listing data mapped to the RESO Data Dictionary through both the RESO Web API and RETS. Its proprietary CLIP® system consolidates property records into a unified, reliable database. Using AI and machine learning, CoreLogic converts billions of raw data points into structured, actionable insights. Its database holds over 5.5 billion property records and covers 99.9% of the U.S. housing market. Of these records, 99.5% are sourced directly, and they draw on 50 years of historical property and financial data.

In a significant milestone, WARDEX became the first MLS certified for RESO Data Dictionary v2.0 in October 2024, with CoreLogic earning the corresponding vendor certification. This achievement highlights their dedication to advancing industry standards.

Beyond normalization, CoreLogic excels at simplifying data integration, offering flexible API solutions to streamline workflows.

Integration with Real Estate Systems and APIs

CoreLogic provides custom APIs designed to seamlessly integrate property data into existing workflows. Users can also access CoreLogic’s data via major cloud platforms like Databricks, Google Cloud, and Snowflake, allowing easy incorporation into their preferred cloud environments. The Discovery Platform further supports businesses by offering a secure space to explore, evaluate, and integrate property datasets into their operations.

For large-scale needs, CoreLogic facilitates bulk data exports in formats ready for direct database ingestion. The Araya SaaS platform provides an all-in-one solution for managing market trends, portfolio insights, and property data without requiring custom development. In March 2021, CRMLS (California Regional Multiple Listing Service) partnered with CoreLogic to implement the Matrix and OneHome platforms, enhancing the standardization and management of listing data for its members.

Scalability for Large Datasets

CoreLogic’s cloud infrastructure is built to handle massive datasets. It processes over 5.5 billion U.S. property records and 2.2 billion international energy efficiency records, with 1 million new data points added every month. The platform also tracks 25 years of U.S. home price and equity trends. With 94% MLS coverage across the country, CoreLogic supports over 1.2 million real estate professionals.

The platform also boasts 95% coverage of U.S. voluntary liens, ensuring access to comprehensive mortgage data. Its cloud-native technology powers AI and machine learning models, enabling efficient processing for large-scale property valuations and risk assessments.

4. ATTOM Data Solutions

Data Normalization and Standardization Capabilities

ATTOM operates a massive 9-terabyte data warehouse that encompasses information on 158 million U.S. properties, featuring over 7,200 attributes. This platform processes nearly 30 billion rows of transactional-level data, covering 99% of the U.S. population.

Central to ATTOM’s system is a 20-step validation process that refines and standardizes property data, ensuring it’s ready for advanced enterprise applications. By using the ATTOM ID, the platform connects various datasets – such as tax records, deeds, mortgages, and foreclosure details – to create a unified, reliable record for each property.
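At its core, linking tax, deed, mortgage, and foreclosure datasets through one persistent identifier means grouping records by that key. The minimal sketch below assumes each record already carries a `property_id` field. That field is a hypothetical stand-in for an identifier like the ATTOM ID, and the dataset and field names are illustrative only.

```python
from collections import defaultdict


def unify(**datasets: list[dict]) -> dict[str, dict]:
    """Group records from several datasets under their shared property_id.

    Each keyword argument names a dataset (e.g. tax=..., deeds=...). The result
    maps property_id -> {dataset_name: [records, ...]}.
    """
    unified: dict[str, dict] = defaultdict(lambda: defaultdict(list))
    for name, records in datasets.items():
        for rec in records:
            unified[rec["property_id"]][name].append(rec)
    # Convert nested defaultdicts to plain dicts so missing keys are not auto-created later.
    return {pid: dict(groups) for pid, groups in unified.items()}
```

For example, `unify(tax=tax_rows, deeds=deed_rows, mortgages=mortgage_rows)` returns one entry per property. Each entry contains only the datasets that actually have records for that property.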

"ATTOM’s property data warehouse is the most robust in the country, containing information on more than 156 million U.S. properties"

ATTOM provides data in formats like Parquet and GeoParquet, which use columnar storage to enable smaller file sizes and efficient geospatial processing. The ATTOM Nexus, a self-service portal, further enhances usability by offering a centralized data dictionary that explains field-level definitions and illustrates how datasets link through the ATTOM ID.

This comprehensive framework ensures smooth integration and supports diverse operational needs.

Integration with Real Estate Systems and APIs

ATTOM offers a variety of integration options to suit different requirements. Its Property Data API delivers real-time property insights in JSON and XML formats, ideal for mobile apps, websites, and reporting tools. Meanwhile, Snowflake integration allows secure data sharing within cloud environments, bypassing traditional ETL pipelines. Developers can even test endpoints with a 30-day trial available through ATTOM’s interactive developer portal.

"Data delivery has evolved from the days of bulk files that needed to be manually ingested, normalized, and integrated into legacy systems, to APIs and cloud-based delivery systems that give users real-time access to exactly the data they need at the push of a button"

For organizations looking to enrich existing databases, the Match & Append service aligns internal records with the ATTOM ID, adding verified property details. Bulk data licensing is also available for large-scale operations, offering formats like CSV, Parquet, and GeoParquet through FTP/SFTP, with options for state-level or nationwide coverage.

| Delivery Method | Ideal Use Case | Supported Formats |
|---|---|---|
| Property Data API | Real-time queries, web/mobile apps | JSON, XML |
| Snowflake Integration | Analytics and data science without ETL | Direct Secure Sharing |
| Bulk Data Licensing | Historical loads, large-scale onboarding | CSV, Parquet, GeoParquet |
| Match & Append | Enriching internal address lists | CSV via FTP |

These integration pathways provide flexibility, ensuring ATTOM’s data solutions can scale to meet varying demands.
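The Match & Append service described above runs on the vendor's side. The underlying idea can still be sketched locally: match each internal row to a reference record on a normalized address key, copy selected attributes across, and flag rows that found no match. This is a minimal sketch. The normalization is deliberately crude, and the field names (`address`, `zip`, `property_id`, `year_built`) are hypothetical.

```python
import re


def norm_key(address: str, zip_code: str) -> str:
    """Crude match key: uppercase alphanumerics of the address plus the 5-digit ZIP."""
    return re.sub(r"[^A-Z0-9]", "", address.upper()) + ":" + zip_code[:5]


def match_and_append(internal: list[dict], reference: list[dict], fields: list[str]) -> list[dict]:
    """Return copies of internal rows enriched with `fields` from matching reference rows."""
    index = {norm_key(r["address"], r["zip"]): r for r in reference}
    out = []
    for row in internal:
        match = index.get(norm_key(row["address"], row["zip"]))
        enriched = dict(row, matched=match is not None)
        if match is not None:
            for f in fields:
                enriched[f] = match.get(f)
        out.append(enriched)
    return out
```

The `matched` flag makes the match rate easy to report. That rate is worth checking before trusting the appended attributes.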

Scalability for Large Datasets

ATTOM’s infrastructure is built to handle large-scale data needs. For instance, the platform tracks 127,000 schools and attendance zones nationwide, along with datasets on climate risk, neighborhood demographics, and foreclosures. Secure Snowflake sharing allows organizations to access live-updated datasets directly within their cloud warehouse, reducing operational complexity.

For high-volume data processing, formats like Parquet and GeoParquet enhance query speed and reduce transmission costs. ATTOM’s AI-Ready Solutions are designed for machine learning and analytics, offering harmonized national datasets that integrate seamlessly with proprietary AI models and advanced analytics tools.

ATTOM’s efforts in delivering cutting-edge property data and AI-powered solutions earned it a spot on the 2026 HousingWire Tech100 Real Estate list.

"ATTOM is the fastest and most responsive data partner we work with"

5. Reonomy

Data Normalization and Standardization Capabilities

Reonomy focuses on making sense of commercial real estate data, managing a database that spans over 54 million commercial properties across the U.S. Using machine learning algorithms, it organizes fragmented data from various sources into a unified format, anchored by a unique Reonomy ID.

At the heart of this process is an entity resolution engine. This tool converts input like addresses, coordinates, or place names into a single, validated property identifier. It’s a game-changer for cleaning and enriching real estate data, as it consolidates scattered or incomplete records into one reliable source. A standout feature is LLC piercing, which reveals the actual owners behind shell companies or holding entities. This makes ownership analysis far more transparent and standardized.

Users can upload CSV files with property addresses or locations, and Reonomy’s matching engine identifies the corresponding properties in its database. Impressively, parcel shape data is available for over 52 million properties. The AI driving this system has been fine-tuned with billions of data points and feedback from thousands of users, ensuring its reliability. This thorough normalization process makes integrating Reonomy into existing workflows straightforward.

Integration with Real Estate Systems and APIs

Reonomy simplifies integration with existing systems by offering two main options, designed to meet different operational needs. The Live API Integration provides real-time property insights through HTTP-based endpoints, delivering JSON responses for up to 250 properties per request. For businesses handling larger datasets, Bulk Data Feeds (BDF) supply property and ownership data in CSV or NDJSON formats via SFTP, AWS S3, or Microsoft Azure.

To start, you can convert property addresses into Reonomy IDs using the POST /resolve/property endpoint. These IDs can then be used to retrieve specific property attributes. To streamline data processing, include a row_id in your CSV uploads – Reonomy will return it, making it easier to merge normalized data with your own datasets.
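The row_id round trip is a plain keyed merge. The sketch below covers only the local side: it writes an upload CSV with a `row_id` column and then attaches returned IDs back to the original rows. The response schema of POST /resolve/property is not covered here. The sketch assumes the results have already been parsed into dicts with `row_id` and `reonomy_id` keys, and both names are placeholders to check against Reonomy's documentation.

```python
import csv
import io


def build_upload_csv(rows: list[dict]) -> str:
    """Serialize rows to CSV text, prepending a row_id equal to each row's index.

    Assumes every row has the same keys as the first one.
    """
    if not rows:
        return ""
    buf = io.StringIO()
    fieldnames = ["row_id"] + [k for k in rows[0] if k != "row_id"]
    writer = csv.DictWriter(buf, fieldnames=fieldnames)
    writer.writeheader()
    for i, row in enumerate(rows):
        writer.writerow({**row, "row_id": str(i)})
    return buf.getvalue()


def merge_results(rows: list[dict], results: list[dict]) -> list[dict]:
    """Attach resolved IDs to the original rows via the echoed row_id.

    `results` uses hypothetical 'row_id' and 'reonomy_id' keys; rows with no
    returned result get reonomy_id=None.
    """
    by_row = {str(r["row_id"]): r.get("reonomy_id") for r in results}
    return [dict(row, reonomy_id=by_row.get(str(i))) for i, row in enumerate(rows)]
```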

| Feature | Live API Integration | Bulk Data Feeds (BDF) |
|---|---|---|
| Primary Use Case | On-demand access for individual properties | Large-scale analysis and CRM integration |
| Record Volume | Up to 250 properties per request | Typically >25,000 records; supports millions |
| Delivery Method | Synchronous HTTP JSON responses | Asynchronous CSV or NDJSON via SFTP, AWS S3, Azure |
| Update Cadence | Real-time | Scheduled (weekly, monthly, quarterly) |

Scalability for Large Datasets

Reonomy is built to handle enterprise-scale data needs with ease. Its infrastructure supports massive data operations, tracking 68 million property transactions and 30 million owner and contact records. The platform pulls data from over 100 sources, including public records, proprietary feeds, and geospatial datasets.

Bulk data feed jobs can process millions of records, with results typically ready in 1–2 hours (up to a maximum of 12 hours). To further streamline operations, users can enable delta jobs, which only deliver records that have changed since the last update, reducing the workload significantly. The data pipeline refreshes weekly to incorporate the latest information from its sources.
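Delta jobs deliver only the records that changed since the last run. Reonomy computes these deltas server-side. To illustrate the idea, or to build deltas from your own full snapshots, here is a minimal sketch that compares two snapshots keyed by a property ID and classifies each record as added, removed, or changed. The snapshot layout is an assumption.

```python
def compute_delta(previous: dict[str, dict], current: dict[str, dict]) -> dict[str, list[str]]:
    """Compare two snapshots mapping property_id -> record attributes."""
    added = [pid for pid in current if pid not in previous]
    removed = [pid for pid in previous if pid not in current]
    # A record counts as changed if any attribute value differs between snapshots.
    changed = [pid for pid in current if pid in previous and current[pid] != previous[pid]]
    return {"added": sorted(added), "removed": sorted(removed), "changed": sorted(changed)}
```

Only the `added` and `changed` IDs need to be reloaded downstream. `removed` can drive soft deletes.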

For added flexibility, users can apply a filter_pii setting to exclude personally identifiable information from exports, ensuring compliance with privacy standards while conducting property-level analyses. Additionally, users can customize the CSV file structure, like limiting it to the five most recent mortgages per property row, to suit specific needs.


6. HouseCanary

Data Normalization and Standardization Capabilities

HouseCanary tackles the challenge of fragmented real estate data by standardizing information from thousands of sources through its proprietary pipelines. The platform combines AI-driven methods with manual checks to remove inconsistencies and ensure reliable data delivery. This process standardizes property details like the number of bedrooms, bathrooms, square footage, geocoding coordinates, and census tract information into a consistent format.

One of its standout tools is image recognition technology, which evaluates property conditions using standardized C1-C6 ratings and identifies room types from photos. HouseCanary’s normalized data spans over 136 million properties across more than 19,000 ZIP codes in the U.S., including 114 million automated valuation models (AVMs) and 97 million rental valuations. Their valuations boast a median absolute percentage error of just 3.1%, highlighting the accuracy of their data standardization process.
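Median absolute percentage error (MdAPE), the metric behind the 3.1% figure, is straightforward to compute when you have valuations and subsequent sale prices for the same properties. The minimal sketch below benchmarks any AVM against your own closed sales. It also reports the share of estimates that fall within a tolerance of the sale price. The sample numbers in the usage note are made up for illustration.

```python
from statistics import median


def abs_pct_errors(estimates: list[float], sale_prices: list[float]) -> list[float]:
    """Absolute percentage error of each estimate relative to its sale price."""
    if len(estimates) != len(sale_prices):
        raise ValueError("estimates and sale_prices must be the same length")
    # Multiply before dividing so integer inputs yield exact results where possible.
    return [abs(e - s) * 100 / s for e, s in zip(estimates, sale_prices)]


def mdape(estimates: list[float], sale_prices: list[float]) -> float:
    """Median absolute percentage error, in percent."""
    return median(abs_pct_errors(estimates, sale_prices))


def share_within(estimates: list[float], sale_prices: list[float], tolerance_pct: float = 10.0) -> float:
    """Fraction of estimates within tolerance_pct percent of the sale price."""
    errors = abs_pct_errors(estimates, sale_prices)
    return sum(err <= tolerance_pct for err in errors) / len(errors)
```

For estimates of 105,000, 190,000, 330,000, and 400,000 against sales of 100,000, 200,000, 300,000, and 500,000, the errors are 5%, 5%, 10%, and 20%. That gives an MdAPE of 7.5% and 75% of estimates within 10%.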

"We curate and normalize data from thousands of sources, with both AI-driven and manual quality control systems in place to ensure accuracy." – HouseCanary

Once standardized, the data integrates smoothly into real estate systems through flexible APIs.

Integration with Real Estate Systems and APIs

HouseCanary offers a variety of integration options tailored to different operational needs. Its REST-based API (v2 and v3) supports GET requests for single properties and POST requests for batch processing of up to 100 items per call. Additionally, the platform provides a Python API Client and a Postman collection with examples in 20 programming languages.
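Because batch requests are capped at 100 items, a large property list has to be split into chunks before posting. The minimal sketch below shows only the chunking logic. The payload shape (`address` and `zipcode` keys) is an assumption, not HouseCanary's documented schema. The HTTP call itself, for example through HouseCanary's Python API Client, is deliberately left out rather than guessed.

```python
from collections.abc import Iterator


def chunked(items: list, size: int = 100) -> Iterator[list]:
    """Yield successive slices of at most `size` items (100 = the batch cap above)."""
    if size < 1:
        raise ValueError("size must be positive")
    for start in range(0, len(items), size):
        yield items[start:start + size]


def build_batches(properties: list[dict], size: int = 100) -> list[list[dict]]:
    """Hypothetical payloads: one list of {address, zipcode} dicts per POST request."""
    return [[{"address": p["address"], "zipcode": p["zip"]} for p in batch]
            for batch in chunked(properties, size)]
```

A list of 250 properties becomes three requests (100, 100, and 50 items) instead of 250 single-property calls.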

For large-scale data operations, HouseCanary delivers bulk data in standardized CSV format. It also integrates with Snowflake and AWS Marketplaces, making pre-cleaned property data readily accessible within existing cloud infrastructures. The Analytics API, designed for self-serve users, has a rate limit of 250 components per minute, with higher limits available for enterprise users. Data can be queried at multiple geographic levels – property, block, block group, ZIP code, MSA, and state – offering users flexibility in structuring their analyses.

"The clean and accessible crime score data is excellent, and having a single source of data through a well-documented API is a huge benefit." – Chad Collishaw, Partner

Scalability for Large Datasets

HouseCanary’s robust infrastructure supports large-scale data processing, serving 6 of the top 10 Single Family Rental REIT operators and major Wall Street buyers of residential whole loans. The platform’s batch processing capabilities allow users to retrieve data for 100 properties in a single API request, minimizing the number of HTTP calls needed for extensive datasets.

W. Luke Newcomb, VP of Capital Markets, highlighted the platform’s efficiency in practice:

"HouseCanary’s user-friendly platform has allowed us to accurately assess property risk and generate precise valuations for thousands of properties in a matter of hours, which previously took our analytics group days to accomplish with less than desirable accuracy."

The platform is SOC 2 Type I and II compliant and employs 256-bit or higher encryption to safeguard data. Monthly updates ensure that AVMs and bulk data remain current, while the X-RateLimit-Reset header helps users manage request pacing during large-scale data ingestion.
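Pacing with the X-RateLimit-Reset header comes down to computing how long to wait before the next call. This minimal sketch assumes the header holds a Unix timestamp (in seconds) for when the current window resets. That is a common convention, but it is an assumption here and should be verified against HouseCanary's documentation. Some APIs send seconds remaining instead.

```python
import time
from collections.abc import Mapping
from typing import Optional


def seconds_until_reset(headers: Mapping[str, str], now: Optional[float] = None) -> float:
    """Seconds to wait before the next request, based on X-RateLimit-Reset.

    Assumes the header is a Unix epoch timestamp in seconds. A plain dict is
    case-sensitive, so pass a case-insensitive mapping for real HTTP headers.
    """
    reset = headers.get("X-RateLimit-Reset")
    if reset is None:
        return 0.0
    now = time.time() if now is None else now
    return max(0.0, float(reset) - now)
```

A client loop would call `time.sleep(seconds_until_reset(response.headers))` only after receiving a rate-limit response. This avoids sleeping on every request.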

Additional Features for Data Enrichment and Analytics

HouseCanary enhances its data offerings with 36-month forecasts, crime scores, and FEMA flood risk assessments. It also provides hazard risk data for natural disasters like earthquakes, hail, hurricanes, and tornadoes at detailed geographic levels. While standard data points are included, Premium Data Points such as Land Value, LTV Details, and extended Value Forecasts are available at an additional cost. The platform even offers a Price Match Guarantee for identical products when proof of competitive pricing is provided.

7. PropStream

Data Normalization and Standardization Capabilities

PropStream takes data from MLS records, county sources (like deeds, sales, mortgages, and tax liens), and private feeds, then organizes it into a single database covering over 160 million properties. It boasts a library with more than 7 billion document images, 308 million deeds and sales records, and 185 million mortgage records. Using machine learning, PropStream generates property valuations (AVM) and predictive insights by combining data from various sources into a standardized format that’s updated daily. This raw data is transformed into over 165 search filters and 20 types of custom lead lists, such as pre-foreclosure opportunities, allowing professionals to search across regions with consistent criteria. PropStream Intelligence leverages AI to identify hidden opportunities, such as properties in disrepair or those at risk of foreclosure, which might not be apparent in public records.

"PropStream is the leading real estate lead generation software that uses AI-powered predictive datasets, advanced filtering capabilities, homeowner contact information, intuitive marketing tools, and more." – PropStream

The platform enriches its data with demographic details, property condition ratings (from disrepair to luxury), and skip-traced contact information. This level of standardization ensures smooth integration with other real estate systems.

Integration with Real Estate Systems and APIs

PropStream’s standardized data integrates seamlessly into real estate workflows. Its API transfers property details, equity information, and ownership history directly into investor dashboards, CRMs, and underwriting tools. The platform syncs effortlessly with tools like BatchDialer, enabling users to push lead lists into a dialer for immediate outreach. It also integrates with the Tuesday App, helping agents quickly locate and act on MLS listings. Data collected through the PropStream mobile app – such as "Driving for Dollars" leads and route tracking – syncs with the desktop platform for deeper analysis and marketing.

While PropStream doesn’t offer a full native CRM, it supports manual CSV exports and imports for integration with external CRMs. It also includes built-in marketing tools for sending postcards, creating landing pages, and executing email campaigns. The Lead Automator feature alerts users when new leads match their criteria or when existing leads no longer qualify.

Scalability for Large Datasets

PropStream provides nationwide coverage, updated daily, for over 160 million properties. Although it allows team members to be added with customizable permissions, it’s often seen as more suitable for single users rather than large enterprise teams. Users can access extensive data, including over 41 million pre-foreclosures and 70 million MLS records.

"The ability to create very curated and custom lists has been helpful to us as we scale so that we can cast a wide net and, at the same time, have smaller targeted campaigns." – Nick Kamrada, Real Estate Investor, Twenty Seven Properties

Its skip tracing feature retrieves up to 4 phone numbers and 4 email addresses per property, including information for LLCs and corporate entities. It also includes built-in DNC scrubbing and litigator flags to ensure compliance with legal standards. However, PropStream is often considered a research-heavy tool that may need to be paired with a dedicated CRM for managing high volumes of leads and complex team workflows.

Additional Features for Data Enrichment and Analytics

PropStream goes beyond basic data with advanced analytics tools. It includes calculators for estimating renovation costs and ROI, such as Rehab and ADU (Accessory Dwelling Unit) calculators. The platform offers a 7-day free trial that includes 50 free leads. Pricing starts at $99 per month for the Essentials Plan, with additional costs for skip tracing ($0.10 per trace) and marketing tools like automated emails ($0.02 each), postcards (from $0.40 each), and ringless voicemail ($0.10 each).
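The per-unit prices above make monthly costs easy to estimate. This minimal sketch uses the figures quoted in this section. Postcards are priced "from" $0.40, so the result is a floor, and prices may have changed since publication. `Decimal` avoids floating-point rounding in currency math.

```python
from decimal import Decimal

# Prices as quoted above (USD); the postcard price is a starting rate.
BASE_PLAN = Decimal("99.00")  # Essentials Plan, per month
UNIT_PRICES = {
    "skip_trace": Decimal("0.10"),
    "email": Decimal("0.02"),
    "postcard": Decimal("0.40"),
    "ringless_voicemail": Decimal("0.10"),
}


def monthly_cost(usage: dict[str, int]) -> Decimal:
    """Essentials Plan fee plus usage-based charges for one month."""
    total = BASE_PLAN
    for item, count in usage.items():
        total += UNIT_PRICES[item] * count
    return total
```

For example, 1,000 skip traces, 2,000 emails, and 500 postcards come to $99 + $100 + $40 + $200 = $439.00 per month.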

How to Choose the Right Data Normalization Tool

Picking the right data normalization tool is key to keeping your data consistent and actionable. Your decision should factor in your business size, data volume, integration requirements, and budget.

For small businesses, cost-effective options starting at $99/month might be the best fit. Mid-sized companies managing between 10,000 and 1 million records can benefit from API-driven tools that handle larger datasets efficiently, achieving results like 70% of valuations within 10% of actual sale prices. Enterprises dealing with millions of records often need more comprehensive platforms. These tools, equipped with universal data models, can significantly cut down on manual data collection costs.

Data volume plays a big role in your choice. If you’re working with fewer than 10,000 records, pay-per-report options priced at $10 per report can offer targeted normalization without requiring bulk commitments. On the other hand, high-volume operations – processing over 1 million records – should prioritize platforms with proven accuracy metrics. Look for tools boasting 99% accuracy, 99.9% U.S. coverage, or error rates below 3% when handling massive datasets like 136 million properties.

Integration capabilities are equally important. A tool that connects seamlessly with CRMs, data warehouses, or custom tech stacks via robust APIs ensures smoother workflows. It’s also essential that the platform supports U.S. standards, like imperial units, USD currency, and MM/DD/YYYY date formats, to align with local needs.
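Enforcing U.S. conventions usually means two things: parsing whatever date format a source uses and emitting MM/DD/YYYY, and converting metric areas to square feet. This is a minimal sketch. The list of accepted input formats is an assumption that you would extend per source.

```python
from datetime import datetime

SQFT_PER_SQM = 10.7639  # 1 square meter is about 10.7639 square feet
# Formats tried in order. A source that uses DD/MM/YYYY must be handled
# explicitly, because "%m/%d/%Y" would silently misread it.
INPUT_DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%Y%m%d", "%d-%b-%Y")


def to_us_date(raw: str) -> str:
    """Parse a date in any known source format and return MM/DD/YYYY."""
    for fmt in INPUT_DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).strftime("%m/%d/%Y")
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {raw!r}")


def sqm_to_sqft(area_sqm: float) -> int:
    """Convert square meters to whole square feet."""
    return round(area_sqm * SQFT_PER_SQM)
```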

When considering cost, focus on ROI and implementation time. Tools powered by modern AI can reduce setup time and cut implementation costs. Usage-based pricing models ensure you only pay for what you need. Evaluate ROI by measuring accuracy improvements – for instance, 99% accuracy could reduce manual errors by 10% or more, saving both time and resources. Platforms supporting natural language queries are another plus, as they allow non-technical staff to access normalized data without needing specialized developers, lowering ongoing labor expenses.

Before committing, always test the tool. Request free trials or sample datasets in formats like CSV or JSON to verify how well the platform handles your specific data. For example, test with sample MLS records to ensure the tool can normalize data quickly – ideally within one day. Building a prototype API call is another way to evaluate integration ease, while benchmarking accuracy against known datasets (aiming for error rates below 5%) can boost your confidence. For investors, faster processing times could even uncover opportunities up to three weeks ahead of competitors, offering a direct business advantage.
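Benchmarking against a known dataset can be as simple as comparing the tool's normalized output, field by field, with a hand-verified answer key. This is a minimal sketch. The 5% threshold comes from the guidance above, and the record layout (dicts keyed by a shared record ID) is an assumption.

```python
def field_error_rate(output: dict[str, dict], truth: dict[str, dict], fields: list[str]) -> float:
    """Share of checked (record, field) pairs where the output differs from the answer key.

    Records missing from the output count as errors for every checked field.
    """
    checked = errors = 0
    for rid, expected in truth.items():
        actual = output.get(rid, {})
        for f in fields:
            checked += 1
            if actual.get(f) != expected.get(f):
                errors += 1
    return errors / checked if checked else 0.0


def passes_benchmark(output: dict[str, dict], truth: dict[str, dict], fields: list[str],
                     max_error_rate: float = 0.05) -> bool:
    """True if the field-level error rate is at or below the threshold (default 5%)."""
    return field_error_rate(output, truth, fields) <= max_error_rate
```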

Choosing the right tool not only streamlines your data but also gives you a sharper edge in decision-making.

Conclusion

Real estate data normalization tools tackle one of the industry’s biggest challenges: turning data from various sources into consistent, usable formats. Companies using these platforms have reported saving millions on manual data collection while speeding up decision-making. This is largely thanks to automated processes that deliver accuracy rates between 95–99%.

The tools reviewed cater to a range of needs, from broad geographic coverage to high precision, making them adaptable to different organizational demands. For example, BatchData stands out with its flexible APIs and bulk delivery options, allowing smooth integration into existing workflows.

When choosing the right tool, focus on your specific operational requirements. Do you need a high-touch, enterprise-level service or a more hands-on, self-service solution? Pay attention to refresh rates: among the tools reviewed here, BatchData and PropStream update daily, Reonomy’s pipeline refreshes weekly, and HouseCanary updates its AVMs and bulk data monthly. Also consider geographic accuracy, as performance can vary across markets. Testing the tool with your own data is critical before committing.

As the industry evolves, AI-powered normalization is becoming the norm. These systems offer features like plain English query interfaces and models that adapt quickly to market changes. Look for tools with robust API support and options for custom integration to ensure they align seamlessly with your systems. The right choice won’t just standardize your data; it will give you a competitive advantage by enabling quicker, more accurate decisions.

FAQs

What data should I normalize first?

Start by standardizing critical fields such as addresses, owner names, and property details. This step helps maintain consistency and accuracy across your dataset, ensuring it’s well-prepared for analysis or integration.
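A common first pass for owner names is to uppercase them, strip punctuation, standardize entity suffixes, and flag non-individual owners so that LLC-owned parcels can be routed to entity resolution. This is a minimal sketch. The suffix map is a small hypothetical sample, and real owner strings (trusts, multiple owners, "LAST FIRST" ordering) need more rules.

```python
import re

# Hypothetical sample of long-form entity suffixes and their short forms.
ENTITY_SUFFIXES = {
    "LIMITED LIABILITY COMPANY": "LLC",
    "L L C": "LLC",
    "INCORPORATED": "INC",
    "CORPORATION": "CORP",
    "LIMITED PARTNERSHIP": "LP",
}
ENTITY_MARKERS = {"LLC", "INC", "CORP", "LP", "TRUST"}


def normalize_owner(raw: str) -> tuple[str, bool]:
    """Return (normalized name, is_entity) for a raw owner string."""
    name = re.sub(r"[^\w\s]", " ", raw.upper())  # punctuation -> spaces
    name = " ".join(name.split())  # collapse repeated whitespace
    for long_form, short in ENTITY_SUFFIXES.items():
        name = re.sub(rf"\b{long_form}\b", short, name)
    is_entity = any(tok in ENTITY_MARKERS for tok in name.split())
    return name, is_entity
```

With this sketch, "Oak Street Holdings, L.L.C." becomes `("OAK STREET HOLDINGS LLC", True)`, and "  smith,  john " becomes `("SMITH JOHN", False)`.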

How can I measure normalization accuracy on my data?

To ensure your data normalization is accurate, start by validating it for consistency and correctness. Compare your data against trusted references, like county records or MLS databases, to identify any discrepancies. It’s equally important to check for completeness – verify that all critical fields, such as addresses and owner names, are present and formatted correctly.

Keep your contact details up-to-date by performing regular validations, and consider leveraging automation tools to streamline these checks. By consistently comparing your standardized data with reliable sources, you can maintain a high level of accuracy over time.
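A completeness check can be scripted in a few lines: for each critical field, count how many records have a value that is present and well-formed. This is a minimal sketch. The field list and format rules (five-digit ZIP with optional +4, two-letter uppercase state) are assumptions to adapt to your schema.

```python
import re

# Hypothetical validation rules for critical fields; None or blank values fail.
RULES = {
    "address": lambda v: bool(v and v.strip()),
    "owner_name": lambda v: bool(v and v.strip()),
    "state": lambda v: bool(v and re.fullmatch(r"[A-Z]{2}", v)),
    "zip": lambda v: bool(v and re.fullmatch(r"\d{5}(-\d{4})?", v)),
}


def completeness_report(records: list[dict]) -> dict[str, float]:
    """Fraction of records whose value for each field passes its rule."""
    if not records:
        return {field: 0.0 for field in RULES}
    return {
        field: sum(rule(rec.get(field)) for rec in records) / len(records)
        for field, rule in RULES.items()
    }
```

Running this on each refresh and tracking the fractions over time shows whether accuracy is holding steady or drifting.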

When should I use an API vs bulk data delivery?

When you need real-time, on-demand data, an API is the way to go. It’s perfect for tasks like live lead generation, property lookups, or updating information within your applications. APIs give you instant, scalable access to the data you need, exactly when you need it.

On the other hand, if you’re working with large datasets that require offline processing, bulk data delivery is the better choice. This approach is ideal for in-depth analysis, machine learning projects, or periodic batch processing. It allows you to integrate comprehensive property data into your systems efficiently.